Improving One-stage Visual Grounding by Recursive Sub-query Construction

by Zhengyuan Yang, Tianlang Chen, Liwei Wang, and Jiebo Luo

European Conference on Computer Vision (ECCV), 2020

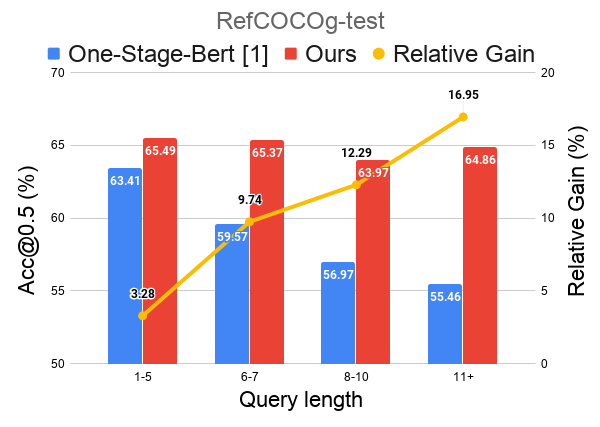

We propose a recursive sub-query construction framework to address the limitations of previous one-stage visual grounding methods on long and complex queries. For more details, please refer to our paper.

[1] Yang, Zhengyuan, et al. "A fast and accurate one-stage approach to visual grounding". ICCV 2019.

- Python 3.6 (3.5 tested)

- PyTorch 0.4.1 and 1.4.0 tested (other versions in between should work)

- Others (Pytorch-Bert, etc.): check `requirements.txt` for reference.

- Clone the repository:

  `git clone https://github.com/zyang-ur/ReSC.git`

- Prepare the submodules and associated data:

  - RefCOCO, RefCOCO+, RefCOCOg, ReferItGame datasets: place the data, or a soft link to the dataset folder, under `./ln_data/`. We follow the dataset structure of DMS. To set this up, the `download_dataset.sh` bash script from DMS can be used:

    `bash ln_data/download_data.sh --path ./ln_data`

  - Data index: download the generated index files and place them as the `./data` folder. Available at [Gdrive], [One Drive].

    `rm -r data`

    `tar xf data.tar`

  - Model weights: download the pretrained Yolov3 model and place the file in `./saved_models`:

    `sh saved_models/yolov3_weights.sh`

    More pretrained models are available in the performance table [Gdrive], [One Drive] and should also be placed in `./saved_models`.
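Before training, it can help to confirm that the three folders named in the steps above are in place. The following is a minimal sketch, not part of the repository: the helper name `missing_dirs` is hypothetical, and it only checks that `ln_data`, `data`, and `saved_models` exist (soft links to directories count), not what they contain.

```python
from pathlib import Path

# Folder names taken from the preparation steps; their contents are not checked.
EXPECTED_DIRS = ("ln_data", "data", "saved_models")


def missing_dirs(repo_root="."):
    """Return the expected folders that do not exist under repo_root.

    Hypothetical helper for illustration; Path.is_dir() follows soft links,
    so a linked dataset folder is treated as present.
    """
    root = Path(repo_root)
    return [name for name in EXPECTED_DIRS if not (root / name).is_dir()]


if __name__ == "__main__":
    missing = missing_dirs(".")
    if missing:
        print("Missing directories:", ", ".join(missing))
    else:
        print("All expected directories are present.")
```

Run it from the main folder of the cloned repository; an empty result means all three folders were found.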

- Train the model: run the code under the main folder. Use the flag `--large` to access the ReSC-large model; ReSC-base is the default.

  `python train.py --data_root ./ln_data/ --dataset referit --gpu gpu_id --resume saved_models/ReSC_base_referit.pth.tar`

- Evaluate the model: run the code under the main folder. Use the flag `--test` to access test mode.

  `python train.py --data_root ./ln_data/ --dataset referit --gpu gpu_id --resume saved_models/ReSC_base_referit.pth.tar --test`
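When running several configurations, the commands above can be assembled programmatically. This is a rough sketch under stated assumptions: `build_command` is a hypothetical helper, not part of the repository, and it only uses flags that appear in this README (`--data_root`, `--dataset`, `--gpu`, `--resume`, `--large`, `--tunebert`, `--test`). The GPU id `0` is an illustrative value.

```python
import shlex


def build_command(dataset, gpu, resume=None, large=False, test=False,
                  tunebert=False, data_root="./ln_data/"):
    """Assemble a train.py argument list (hypothetical helper for illustration)."""
    cmd = ["python", "train.py", "--data_root", data_root,
           "--dataset", dataset, "--gpu", str(gpu)]
    if resume:
        cmd += ["--resume", resume]
    if large:
        cmd.append("--large")      # ReSC-large instead of the default ReSC-base
    if tunebert:
        cmd.append("--tunebert")   # finetune the language encoder
    if test:
        cmd.append("--test")       # test mode instead of training
    return cmd


if __name__ == "__main__":
    cmd = build_command("referit", gpu=0,
                        resume="saved_models/ReSC_base_referit.pth.tar",
                        test=True)
    # Quote each argument so the printed line can be pasted into a shell.
    print(" ".join(shlex.quote(part) for part in cmd))
```

The returned list can also be passed directly to `subprocess.run` from the main folder.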

We train for 100 epochs with batch size 8 on all datasets except RefCOCOg, where we find that training for 20/40 epochs gives the best performance. By default, the BERT weights are fixed during training. The language encoder can be finetuned with the flag `--tunebert`; we observe a small improvement on some datasets (e.g. RefCOCOg). Please check the other experiment settings in our paper.

Pre-trained models are available in [Gdrive], [One Drive].

| Dataset | Split | Ours-base (Acc@0.5) | Ours-large (Acc@0.5) |
|---|---|---|---|
| RefCOCO | val | 76.74 | 78.09 |
| | testA | 78.61 | 80.89 |
| | testB | 71.85 | 72.97 |
| RefCOCO+ | val | 63.21 | 62.97 |
| | testA | 65.94 | 67.13 |
| | testB | 56.08 | 55.43 |
| RefCOCOg | val-g | 61.12 | 62.22 |
| | val-umd | 64.89 | 67.50 |
| | test-umd | 64.01 | 66.55 |
| ReferItGame | val | 66.78 | 67.15 |
| | test | 64.33 | 64.70 |
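The numbers in the table are accuracies at an IoU threshold of 0.5 (Acc@0.5), the usual visual grounding metric: a predicted box counts as correct when its intersection over union with the ground-truth box reaches the threshold. The sketch below illustrates the metric only and is not the repository's evaluation code. It assumes boxes in `(x1, y1, x2, y2)` format and treats an IoU of exactly 0.5 as a hit; both are assumptions, and the function names are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    # Clamp at zero so non-overlapping boxes give an empty intersection.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def accuracy_at(predictions, targets, threshold=0.5):
    """Percentage of predictions whose IoU with the target reaches the threshold."""
    if not predictions:
        return 0.0
    hits = sum(iou(p, t) >= threshold for p, t in zip(predictions, targets))
    return 100.0 * hits / len(predictions)


if __name__ == "__main__":
    preds = [(0, 0, 10, 10), (0, 0, 10, 10)]
    gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
    print(accuracy_at(preds, gts))  # one exact match, one miss -> 50.0
```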

@inproceedings{yang2020improving,

title={Improving One-stage Visual Grounding by Recursive Sub-query Construction},

author={Yang, Zhengyuan and Chen, Tianlang and Wang, Liwei and Luo, Jiebo},

booktitle={ECCV},

year={2020}

}

@inproceedings{yang2019fast,

title={A Fast and Accurate One-Stage Approach to Visual Grounding},

author={Yang, Zhengyuan and Gong, Boqing and Wang, Liwei and Huang, Wenbing and Yu, Dong and Luo, Jiebo},

booktitle={ICCV},

year={2019}

}

Our code is built on Onestage-VG.

Part of the code or models are from DMS, film, MAttNet, Yolov3 and Pytorch-yolov3.