At the core of our mission is the desire to create a harmonious space where conservation scientists from all over the globe can unite to share, grow, and use datasets and deep learning architectures for wildlife conservation. We've been inspired by the potential and capabilities of MegaDetector, and we deeply value its contributions to the community. As we forge ahead with Pytorch-Wildlife, under which MegaDetector now resides, please know that we remain committed to supporting, maintaining, and developing MegaDetector, ensuring its continued relevance, expansion, and utility.

Pytorch-Wildlife is pip installable:

```bash
pip install PytorchWildlife
```

To use the newest version of MegaDetector with all the existing functionalities, you can use our Hugging Face interface or simply load the model with Pytorch-Wildlife. The weights will be automatically downloaded:

```python
from PytorchWildlife.models import detection as pw_detection

detection_model = pw_detection.MegaDetectorV6()
```

For those interested in accessing the previous MegaDetector repository, which utilizes the same MegaDetectorV5 model weights and was primarily developed by Dan Morris during his time at Microsoft, please visit the archive directory, or you can visit this forked repository that Dan Morris is actively maintaining.

Tip

If you have any questions regarding MegaDetector and Pytorch-Wildlife, please email us or join us in our Discord channel.

- In this version of Pytorch-Wildlife, we are happy to release our detection fine-tuning module, with which users can fine-tune their own detection model from any released pre-trained MegaDetectorV6 model. This module also includes functions that help users prepare their datasets for fine-tuning, just like our classification fine-tuning module. For more details, please check the readme. Currently, fine-tuning is based on Ultralytics, which is licensed under AGPL. We will release MIT versions in the future. Here is the release page.

- We have also released additional MegaDetectorV6 models based on Yolo-v10 and RtDetr. We have skipped Yolo-v11 models because of limited performance and architectural gains. Most of the MIT and Apache versions have also finished training but are waiting for internal review before they can be released.

- We have also updated our AI4G-Amazon model with bigger datasets, and it performs better than previous iterations. Please feel free to test it or fine-tune on it.

- We will also make a new roadmap for 2025 in the next couple of updates.

We have officially released our 6th version of MegaDetector, MegaDetectorV6! In the next generation of MegaDetector, we are focusing on computational efficiency, performance, modernizing model architectures, and licensing. We have trained multiple new models using different model architectures, including Yolo-v9, Yolo-v10, and RT-Detr, for maximum user flexibility. We have a rolling release schedule for different versions of MegaDetectorV6.

MegaDetectorV6 models are based on architectures optimized for performance and low-budget devices. For example, the MegaDetectorV6-Ultralytics-YoloV10-Compact (MDV6-yolov10-c) model has only 2% of the parameters of the previous MegaDetectorV5 and still exhibits roughly 4 points higher animal detection recall on our validation datasets. In the following figure, we can see the Performance to Parameter metric of each released MegaDetector model. All of the V6 models, extra large or compact, have at least 50% fewer parameters than MegaDetectorV5 but much higher animal detection performance.
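The figures quoted above can be checked against the model zoo table in this README. The following is a minimal sketch in plain Python, with parameter counts (in millions) and animal recall (in percent) copied from that table. The names `MODELS` and `compare_to_v5` are illustrative only and are not part of Pytorch-Wildlife.

```python
# (parameters in millions, animal recall in %) copied from the model zoo table.
MODELS = {
    "MegaDetectorV5": (121.0, 74.9),
    "MDV6-yolov9-c": (25.5, 82.6),
    "MDV6-yolov9-e": (58.1, 87.1),
    "MDV6-yolov10-c": (2.3, 78.8),
    "MDV6-yolov10-e": (29.5, 85.2),
    "MDV6-rtdetr-c": (31.9, 83.4),
}

V5_PARAMS, V5_RECALL = MODELS["MegaDetectorV5"]


def compare_to_v5(name):
    """Return (parameter share of V5 in %, recall gain in percentage points)."""
    params, recall = MODELS[name]
    return round(100 * params / V5_PARAMS, 1), round(recall - V5_RECALL, 1)


for name in MODELS:
    if name != "MegaDetectorV5":
        share, gain = compare_to_v5(name)
        print(f"{name}: {share}% of V5 parameters, {gain:+} recall points")
```

With these table values, MDV6-yolov10-c comes out at about 1.9% of the V5 parameter count with 3.9 more recall points, and the largest released V6 model (MDV6-yolov9-e) at about 48% of the V5 parameter count.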

Tip

From now on, we encourage our users to use MegaDetectorV6 as their default animal detection model and to choose whichever model fits their project needs. To reduce potential confusion, we have also standardized the model names into MDV6-Compact and MDV6-Extra for the two model sizes that share the same architecture. Learn how to use MegaDetectorV6 in our image demo and video demo.

The Pytorch-Wildlife package is under the MIT license; however, some of the models in the model zoo are not. For example, MegaDetectorV5, which is trained using the Ultralytics package, is under AGPL-3.0 and is not for closed-source commercial use.

Important

THIS IS TRUE OF ALL EXISTING MEGADETECTORV5 MODELS IN ALL EXISTING FORKS THAT ARE TRAINED USING YOLOV5, AN ULTRALYTICS-DEVELOPED MODEL.

We want to make Pytorch-Wildlife a platform where models with different licenses can be hosted, and we want to enable different use cases. To reduce user confusion, our model zoo section lists all existing and planned future models, their corresponding licenses, and their release schedules.

In addition, since the Pytorch-Wildlife package is under MIT, all the utility functions in this package, including data pre-/post-processing functions and model fine-tuning functions, are under MIT as well.

| Models | Version Names | License | Release | Parameters (M) | mAP (val) 50-95 | Animal Recall |
|---|---|---|---|---|---|---|
| MegaDetectorV5 | - | AGPL-3.0 | Released | 121 | 74.7 | 74.9 |
| MegaDetectorV6-Ultralytics-YoloV9-Compact | MDV6-yolov9-c | AGPL-3.0 | Released | 25.5 | 73.8 | 82.6 |
| MegaDetectorV6-Ultralytics-YoloV9-Extra | MDV6-yolov9-e | AGPL-3.0 | Released | 58.1 | 80.2 | 87.1 |
| MegaDetectorV6-Ultralytics-YoloV10-Compact (even smaller and no NMS) | MDV6-yolov10-c | AGPL-3.0 | Released | 2.3 | 71.8 | 78.8 |
| MegaDetectorV6-Ultralytics-YoloV10-Extra (extra large model and no NMS) | MDV6-yolov10-e | AGPL-3.0 | Released | 29.5 | 79.9 | 85.2 |
| MegaDetectorV6-Ultralytics-RtDetr-Compact | MDV6-rtdetr-c | AGPL-3.0 | Released | 31.9 | 73.9 | 83.4 |
| MegaDetectorV6-Ultralytics-YoloV11-Compact | - | AGPL-3.0 | Will Not Release | 2.6 | 71.9 | 79.8 |
| MegaDetectorV6-Ultralytics-YoloV11-Extra | - | AGPL-3.0 | Will Not Release | 56.9 | 79.3 | 86.0 |
| MegaDetectorV6-MIT-YoloV9-Compact | MDV6-mit-yolov9-c | MIT | February 2025 | 9.7 | 73.8 | - |
| MegaDetectorV6-MIT-YoloV9-Extra | MDV6-mit-yolov9-e | MIT | February 2025 | 51 | 75.2 | - |
| MegaDetectorV6-Apache-RTDetr-Compact | MDV6-apa-rtdetr-c | Apache | February 2025 | 20 | 76.3 | - |
| MegaDetectorV6-Apache-RTDetr-Extra | MDV6-apa-rtdetr-e | Apache | February 2025 | 76 | 80.8 | - |
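Because licenses differ per model, it can help to filter the table before choosing weights. Below is a minimal sketch in plain Python with rows transcribed from the table above. `ModelEntry`, `ZOO`, and `released_under` are hypothetical helpers written for this illustration; they are not part of the Pytorch-Wildlife API, and the data reflects only the release status listed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    version: str     # version name from the table
    license: str     # license as listed in the table
    release: str     # "Released" or a planned date
    params_m: float  # parameters in millions


# Rows with a version name, transcribed from the detection model table.
ZOO = [
    ModelEntry("MDV6-yolov9-c", "AGPL-3.0", "Released", 25.5),
    ModelEntry("MDV6-yolov9-e", "AGPL-3.0", "Released", 58.1),
    ModelEntry("MDV6-yolov10-c", "AGPL-3.0", "Released", 2.3),
    ModelEntry("MDV6-yolov10-e", "AGPL-3.0", "Released", 29.5),
    ModelEntry("MDV6-rtdetr-c", "AGPL-3.0", "Released", 31.9),
    ModelEntry("MDV6-mit-yolov9-c", "MIT", "February 2025", 9.7),
    ModelEntry("MDV6-mit-yolov9-e", "MIT", "February 2025", 51.0),
    ModelEntry("MDV6-apa-rtdetr-c", "Apache", "February 2025", 20.0),
    ModelEntry("MDV6-apa-rtdetr-e", "Apache", "February 2025", 76.0),
]


def released_under(licenses, max_params_m=None):
    """Version names of released models under one of `licenses`, smallest first."""
    rows = [
        m for m in ZOO
        if m.release == "Released"
        and m.license in licenses
        and (max_params_m is None or m.params_m <= max_params_m)
    ]
    return [m.version for m in sorted(rows, key=lambda m: m.params_m)]


print(released_under({"AGPL-3.0"}, max_params_m=30))
print(released_under({"MIT", "Apache"}))
```

With the rows as listed, the second call returns an empty list, because the MIT and Apache models are scheduled but not yet marked as released in this table.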

| Models | Version Names | License | Release | Reference |
|---|---|---|---|---|
| HerdNet-general | general | CC BY-NC-SA-4.0 | Released | Alexandre et al. 2023 |
| HerdNet-ennedi | ennedi | CC BY-NC-SA-4.0 | Released | Alexandre et al. 2023 |
| MegaDetector-Overhead | - | MIT | Mid 2025 | - |
| MegaDetector-Bioacoustics | - | MIT | Late 2025 | - |

| Models | Version Names | License | Release |
|---|---|---|---|
| AI4G-Opossum | - | MIT | Released |
| AI4G-Amazon-V1 | v1 | MIT | Released |
| AI4G-Amazon-V2 | v2 | MIT | Released |
| AI4G-Serengeti | - | MIT | Released |

Tip

Some models, such as MegaDetectorV6, HerdNet, and AI4G-Amazon, have different versions, and they are loaded by their corresponding version names. Here is an example: detection_model = pw_detection.MegaDetectorV6(version="MDV6-yolov10-e").
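The MDV6 version names follow a regular pattern (architecture plus a `c` or `e` size suffix), so a small helper can build and validate them before any weights are downloaded. This is a sketch under that assumption: `RELEASED`, `SIZE_SUFFIX`, and `mdv6_version` are hypothetical names for illustration, and the set of released pairs is copied from the model zoo table rather than queried from the library.

```python
# Released (architecture, size suffix) pairs, taken from the model zoo table.
RELEASED = {
    ("yolov9", "c"), ("yolov9", "e"),
    ("yolov10", "c"), ("yolov10", "e"),
    ("rtdetr", "c"),
}
SIZE_SUFFIX = {"compact": "c", "extra": "e"}


def mdv6_version(architecture: str, size: str) -> str:
    """Build a version name such as "MDV6-yolov10-e" and check it is released."""
    arch = architecture.lower()
    suffix = SIZE_SUFFIX.get(size.lower())
    if suffix is None or (arch, suffix) not in RELEASED:
        raise ValueError(f"no released MDV6 model for {architecture!r} / {size!r}")
    return f"MDV6-{arch}-{suffix}"


version = mdv6_version("yolov10", "extra")
print(version)  # MDV6-yolov10-e

# With Pytorch-Wildlife installed, the name is passed as in the tip above:
# from PytorchWildlife.models import detection as pw_detection
# detection_model = pw_detection.MegaDetectorV6(version=version)
```

Requesting a combination that the table does not list as released, such as an extra-size RtDetr model, raises a `ValueError` instead of failing later during model loading.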

PyTorch-Wildlife is a platform to create, modify, and share powerful AI conservation models. These models can be used for a variety of applications, including camera trap images, overhead images, underwater images, or bioacoustics. Your engagement with our work is greatly appreciated, and we eagerly await any feedback you may have.

The Pytorch-Wildlife library allows users to directly load the MegaDetector model weights for animal detection. We've fully refactored our codebase, prioritizing ease of use in model deployment and expansion. In addition to MegaDetector, Pytorch-Wildlife also accommodates a range of classification weights, such as those derived from the Amazon Rainforest dataset and the Opossum classification dataset. Explore the codebase and functionalities of Pytorch-Wildlife through our interactive HuggingFace web app or through local demos and notebooks, which are designed to showcase practical applications of the library. You can find more information in our documentation.

👇 Here is a brief example of how to perform detection and classification on a single image using Pytorch-Wildlife:

```python
import numpy as np
from PytorchWildlife.models import detection as pw_detection
from PytorchWildlife.models import classification as pw_classification

img = np.random.randn(3, 1280, 1280)

# Detection
detection_model = pw_detection.MegaDetectorV6() # Model weights are automatically downloaded.
detection_result = detection_model.single_image_detection(img)

# Classification
classification_model = pw_classification.AI4GAmazonRainforest() # Model weights are automatically downloaded.
classification_results = classification_model.single_image_classification(img)
```

```bash
pip install PytorchWildlife
```

Please refer to our installation guide for more installation information.
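After installing, a quick check that the package is visible to the current Python environment can save a failed model download later. The sketch below uses only the standard library. It assumes the import name `PytorchWildlife` (as used in the example above) and the same distribution name as in the `pip install` command, and `pytorch_wildlife_status` is a hypothetical helper name.

```python
from importlib import metadata, util


def pytorch_wildlife_status() -> str:
    """Report whether PytorchWildlife is importable, without importing it."""
    if util.find_spec("PytorchWildlife") is None:
        return "not installed"
    try:
        return f"installed, version {metadata.version('PytorchWildlife')}"
    except metadata.PackageNotFoundError:
        # Importable (for example from a source checkout) but no pip metadata.
        return "importable, version unknown"


print(pytorch_wildlife_status())
```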

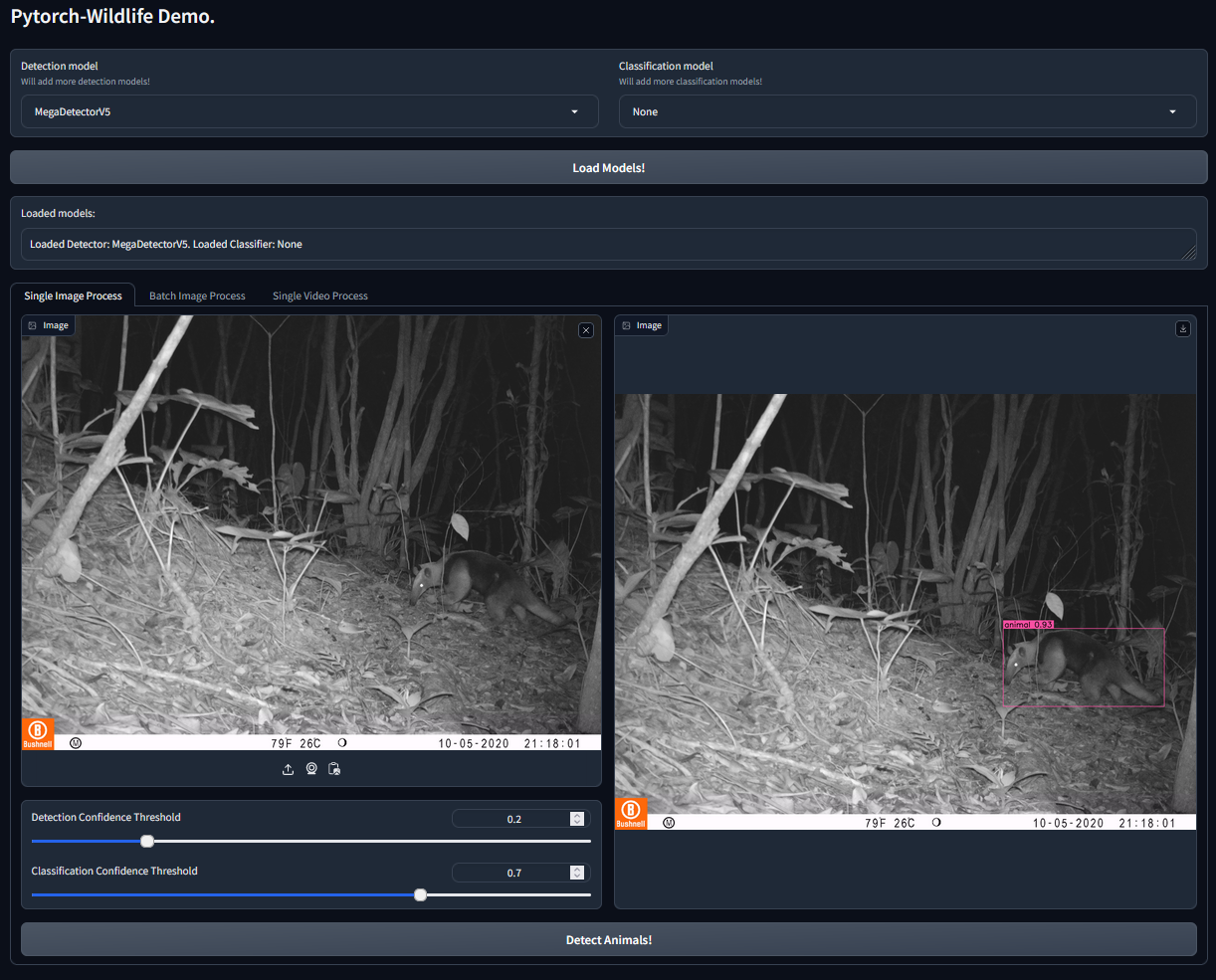

If you want to directly try Pytorch-Wildlife with the AI models available, including MegaDetector, you can use our Gradio interface. This interface allows users to directly load the MegaDetector model weights for animal detection. In addition, Pytorch-Wildlife also has two classification models in our initial version. One is trained from an Amazon Rainforest camera trap dataset and the other from a Galapagos opossum classification dataset (more details of these datasets will be published soon). To start, please follow the installation instructions on how to run the Gradio interface! We also provide multiple Jupyter notebooks for demonstration.

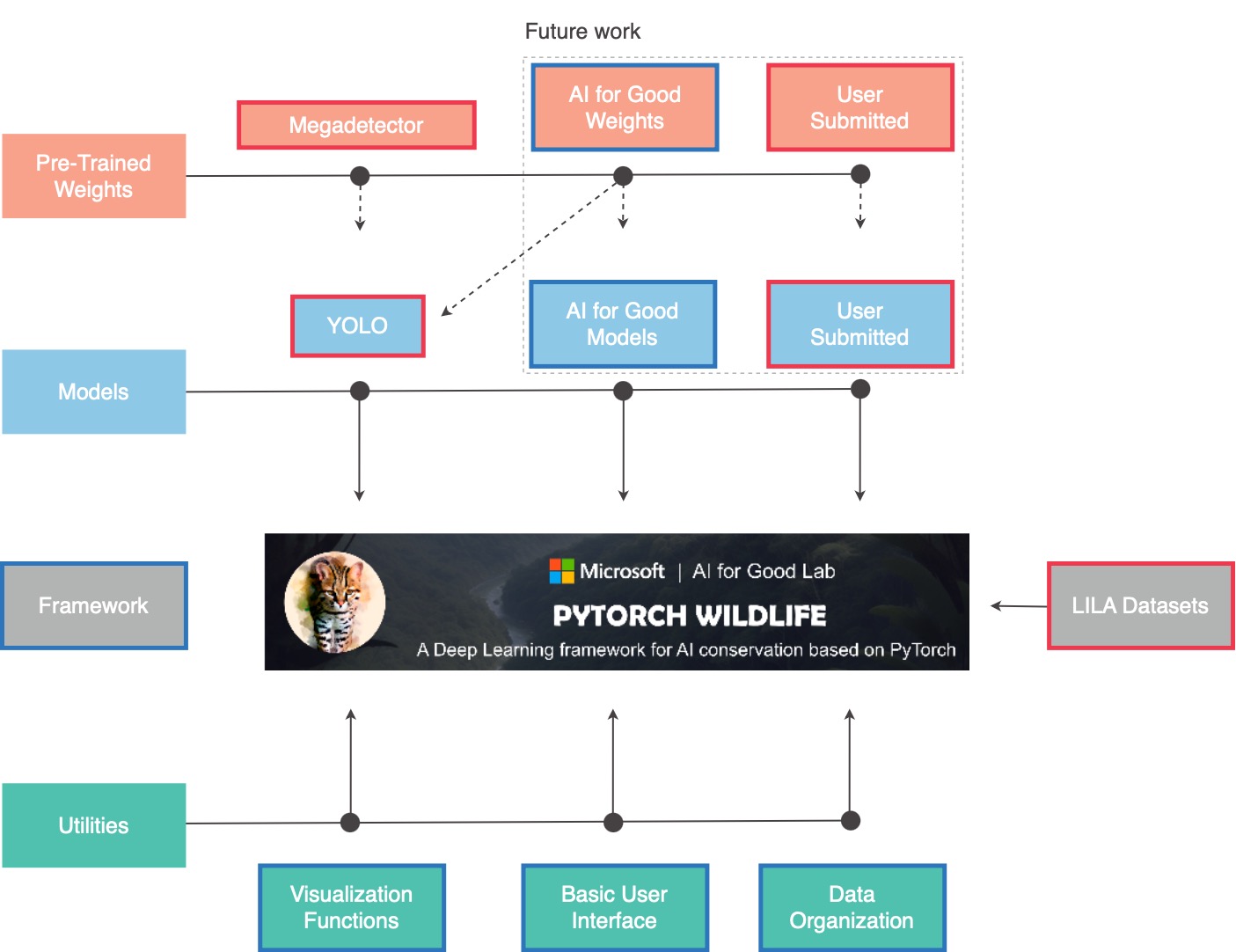

What are the core components of Pytorch-Wildlife?

Pytorch-Wildlife integrates four pivotal elements:

▪ Machine Learning Models

▪ Pre-trained Weights

▪ Datasets

▪ Utilities

In the provided graph, boxes outlined in red represent elements that will be added and remain fixed, while those in blue will be part of our development.

We're kickstarting with YOLO as our first available model, complemented by pre-trained weights from MegaDetector. We have MegaDetectorV5, which is the same MegaDetectorV5 model from the previous repository, and many different versions of MegaDetectorV6 for different use cases.

As we move forward, our platform will welcome new models and pre-trained weights for camera traps and bioacoustic analysis. We're excited to host contributions from global researchers through a dedicated submission platform.

Pytorch-Wildlife will also incorporate the vast datasets hosted on LILA, making it a treasure trove for conservation research.

Our set of utilities spans from visualization tools to task-specific utilities, many inherited from MegaDetector.

While we provide a foundational user interface, our platform is designed to inspire. We encourage researchers to craft and share their unique interfaces, and we'll list both existing and new UIs from other collaborators for the community's benefit.

Let's shape the future of wildlife research, together! 🙌

Credits to Universidad de los Andes, Colombia.

Credits to the Agency for Regulation and Control of Biosecurity and Quarantine for Galápagos (ABG), Ecuador.

We are thrilled to announce our collaboration with EcoAssist, a powerful user interface software that enables users to directly load models from the Pytorch-Wildlife model zoo for image analysis on local computers. With EcoAssist, you can now use MegaDetectorV5 and the classification models AI4GAmazonRainforest and AI4GOpossum for automatic animal detection and identification, alongside a comprehensive suite of pre- and post-processing tools. This partnership aims to enhance the overall user experience with Pytorch-Wildlife models for a general audience. We will work closely together to bring more features for more efficient and effective wildlife analysis in the future.

We have recently published a summary paper on Pytorch-Wildlife. The paper has been accepted as an oral presentation at the CV4Animals workshop at CVPR 2024. Please feel free to cite us!

```bibtex
@misc{hernandez2024pytorchwildlife,
      title={Pytorch-Wildlife: A Collaborative Deep Learning Framework for Conservation},
      author={Andres Hernandez and Zhongqi Miao and Luisa Vargas and Sara Beery and Rahul Dodhia and Juan Lavista},
      year={2024},
      eprint={2405.12930},
      archivePrefix={arXiv},
}
```

Also, don't forget to cite our original paper for MegaDetector:

```bibtex
@misc{beery2019efficient,
      title={Efficient Pipeline for Camera Trap Image Review},
      author={Sara Beery and Dan Morris and Siyu Yang},
      year={2019},
      eprint={1907.06772},
      archivePrefix={arXiv},
}
```

This project is open to your ideas and contributions. If you want to submit a pull request, we'll have some guidelines available soon.

We have adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact us with any additional questions or comments.

This repository is licensed with the MIT license.

The extensive collaborative efforts of MegaDetector have genuinely inspired us, and we deeply value its significant contributions to the community. As we continue to advance with Pytorch-Wildlife, our commitment to delivering technical support to our existing partners on MegaDetector remains the same.

Here we list a few of the organizations that have used MegaDetector. We're only listing organizations who have given us permission to refer to them here or have posted publicly about their use of MegaDetector.

👉 Full list of organizations

(Newly Added) TerrOïko (OCAPI platform)

Arizona Department of Environmental Quality

Canadian Parks and Wilderness Society (CPAWS) Northern Alberta Chapter

Czech University of Life Sciences Prague

Idaho Department of Fish and Game

SPEA (Portuguese Society for the Study of Birds)

The Nature Conservancy in Wyoming

Upper Yellowstone Watershed Group

Applied Conservation Macro Ecology Lab, University of Victoria

Banff National Park Resource Conservation, Parks Canada (https://www.pc.gc.ca/en/pn-np/ab/banff/nature/conservation)

Blumstein Lab, UCLA

Borderlands Research Institute, Sul Ross State University

Capitol Reef National Park / Utah Valley University

Center for Biodiversity and Conservation, American Museum of Natural History

Centre for Ecosystem Science, UNSW Sydney

Cross-Cultural Ecology Lab, Macquarie University

DC Cat Count, led by the Humane Rescue Alliance

Department of Fish and Wildlife Sciences, University of Idaho

Department of Wildlife Ecology and Conservation, University of Florida

Ecology and Conservation of Amazonian Vertebrates Research Group, Federal University of Amapá

Gola Forest Programme, Royal Society for the Protection of Birds (RSPB)

Graeme Shannon's Research Group, Bangor University

Hamaarag, The Steinhardt Museum of Natural History, Tel Aviv University

Institut des Sciences de la Forêt Tempérée (ISFORT), Université du Québec en Outaouais

Lab of Dr. Bilal Habib, the Wildlife Institute of India

Mammal Spatial Ecology and Conservation Lab, Washington State University

McLoughlin Lab in Population Ecology, University of Saskatchewan

National Wildlife Refuge System, Southwest Region, U.S. Fish & Wildlife Service

Northern Great Plains Program, Smithsonian

Quantitative Ecology Lab, University of Washington

Santa Monica Mountains Recreation Area, National Park Service

Seattle Urban Carnivore Project, Woodland Park Zoo

Serra dos Órgãos National Park, ICMBio

Snapshot USA, Smithsonian

Wildlife Coexistence Lab, University of British Columbia

Wildlife Research, Oregon Department of Fish and Wildlife

Wildlife Division, Michigan Department of Natural Resources

Department of Ecology, TU Berlin

Ghost Cat Analytics

Protected Areas Unit, Canadian Wildlife Service

School of Natural Sciences, University of Tasmania (story)

Kenai National Wildlife Refuge, U.S. Fish & Wildlife Service (story)

Australian Wildlife Conservancy (blog, blog)

Felidae Conservation Fund (WildePod platform) (blog post)

Alberta Biodiversity Monitoring Institute (ABMI) (WildTrax platform) (blog post)

Shan Shui Conservation Center (blog post) (translated blog post)

Irvine Ranch Conservancy (story)

Wildlife Protection Solutions (story, story)

Road Ecology Center, University of California, Davis (Wildlife Observer Network platform)

Important

If you would like to be added to this list or have any questions regarding MegaDetector and Pytorch-Wildlife, please email us or join us in our Discord channel.