This is the official repository for the publication:

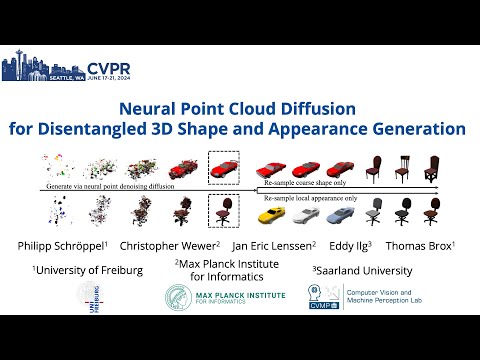

Neural Point Cloud Diffusion for Disentangled 3D Shape and Appearance Generation

Philipp Schröppel, Christopher Wewer, Jan Eric Lenssen, Eddy Ilg, Thomas Brox

CVPR 2024

The code was tested with Python 3.10, PyTorch 1.12, and CUDA 11.6 on Ubuntu 22.04.
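Once the environment is set up, a quick comparison against the tested versions can help narrow down installation problems. The following is a minimal sketch and not part of this repository: `TESTED`, `major_minor`, `version_mismatches`, and `installed_versions` are hypothetical helper names, and only the major.minor parts of the versions are compared.

```python
import sys

# Versions the README states the code was tested with.
TESTED = {"python": "3.10", "torch": "1.12", "cuda": "11.6"}


def major_minor(version: str) -> str:
    """Reduce a version such as '1.12.0+cu116' to 'major.minor' ('1.12')."""
    core = version.split("+")[0]
    return ".".join(core.split(".")[:2])


def version_mismatches(found: dict) -> list:
    """Return the names of components whose major.minor differs from TESTED."""
    return [
        name
        for name, expected in TESTED.items()
        if major_minor(found.get(name) or "") != expected
    ]


def installed_versions() -> dict:
    """Collect the versions in the current environment (torch may be missing)."""
    found = {"python": f"{sys.version_info.major}.{sys.version_info.minor}"}
    try:
        import torch  # imported lazily so the helper also works without torch

        found["torch"] = torch.__version__
        found["cuda"] = torch.version.cuda  # None for CPU-only builds
    except ImportError:
        found["torch"] = None
        found["cuda"] = None
    return found
```

Calling `version_mismatches(installed_versions())` inside the activated `npcd` environment returns an empty list when all three versions match the tested setup. Other versions may still work; the list only flags deviations.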

To set up the environment, clone this repository and run the following commands from the root directory of this repository:

conda create -y -n npcd python=3.10

conda activate npcd

pip install torch==1.12.0+cu116 torchvision==0.13.0+cu116 torchaudio==0.12.0 --extra-index-url https://download.pytorch.org/whl/cu116

pip install -r requirements.txt

pip install flash-attn==2.4.1 --no-build-isolation

pip install git+https://github.com/janericlenssen/torch_knnquery.git

pip install -U openmim

mim install mmcv-full==1.6

git clone https://github.com/open-mmlab/mmgeneration && cd mmgeneration && git checkout v0.7.2

pip install -v -e .

cd ..

Download srn_cars.zip and srn_chairs.zip from here and unzip them to ./data. ./data should now contain the subdirectories cars_train, cars_val, cars_test, chairs_train, chairs_val, and chairs_test. Following that, run the following commands:

cd data

mkdir cars

cd cars

ln -s ../cars_train/* .

ln -s ../cars_val/* .

ln -s ../cars_test/* .

cd ..

mkdir chairs

cd chairs

ln -s ../chairs_train/* .

ln -s ../chairs_val/* .

ln -s ../chairs_test/* .

cd ..

./download_pointclouds.sh

cd ..

To download the model weights from the publication, run the following commands:

cd weights

./download_weights.sh

cd ..

To run the evaluation of the PointNeRF Autodecoder, run the following command (optionally with a custom output directory):

python eval_pointnerf.py --config configs/npcd_srncars.yaml --weights weights/npcd_srncars.pt --output /tmp/npcd_eval/srn_cars/pointnerf

The result of this evaluation is a PSNR of 30.2.

Note that for runtime measurements, you have to add the flag --eval_batch_size 1.
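For readers unfamiliar with the metric, PSNR can be illustrated with the standard definition, 10 * log10(MAX^2 / MSE). The sketch below is not taken from eval_pointnerf.py, whose exact computation (for example, how values are averaged over images) is not described here. The function name `psnr` and the assumption that intensities lie in [0, 1] are illustrative.

```python
import math


def psnr(rendered, target, max_value=1.0):
    """PSNR in dB between two equally sized sequences of pixel values.

    Standard definition: 10 * log10(max_value**2 / MSE). Assumes pixel
    intensities in [0, max_value]; this range is an assumption.
    """
    if len(rendered) != len(target) or len(rendered) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - t) ** 2 for r, t in zip(rendered, target)) / len(rendered)
    if mse == 0:
        return math.inf  # identical inputs
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Under this definition, a single image with a PSNR of 30.2 dB has a mean squared error of about 9.5e-4 for intensities in [0, 1].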

To evaluate the unconditional generation quality, we use a codebase similar to SSDNeRF. To run the evaluation, you first have to extract the Inception features of the real images, as in the SSDNeRF codebase. For this, please clone the SSDNeRF repository and set up a separate environment for it, as described in its README. Then, link the data that you downloaded before to /path/to/SSDNeRF/data/shapenet, e.g. by running the following commands:

mkdir /path/to/SSDNeRF/data/

ln -s /path/to/neural-point-cloud-diffusion/data /path/to/SSDNeRF/data/shapenet

Then, extract the Inception features as described in the SSDNeRF README (using the inception_stat.py script). Copy or move the resulting files to the neural-point-cloud-diffusion repository, for example as follows:

cp /path/to/SSDNeRF/work_dirs/cache/cars_test_inception_stylegan.pkl /path/to/neural-point-cloud-diffusion/data

cp /path/to/SSDNeRF/work_dirs/cache/inception-2015-12-05.pt /path/to/neural-point-cloud-diffusion/data

To run the evaluation of the unconditional generation quality, run the following command (optionally with a custom output directory):

python eval_diffusion.py --config configs/npcd_srncars.yaml --weights weights/npcd_srncars.pt --output /tmp/npcd_eval/srn_cars/diffusion

The result of this evaluation is a FID of 28.6.
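Because this evaluation depends on files produced in a separate SSDNeRF environment, a small pre-flight check can confirm that both copied files are present in ./data before starting a run. This is a minimal sketch, not part of the repository: the helper `missing_eval_files` is hypothetical, and the file names are the ones used in the cp commands above.

```python
from pathlib import Path

# File names taken from the cp commands above.
REQUIRED_FILES = ("cars_test_inception_stylegan.pkl", "inception-2015-12-05.pt")


def missing_eval_files(data_dir):
    """Return the required files that are not present in data_dir."""
    data_dir = Path(data_dir)
    return [name for name in REQUIRED_FILES if not (data_dir / name).is_file()]
```

Running `missing_eval_files("data")` from the repository root returns an empty list when both files are in place.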

The training consists of two stages:

- PointNeRF autodecoder training

- Diffusion model training

All of the following commands operate in an output directory at /tmp/npcd_train/srn_cars. Change this to a custom output directory if you want.

To run the training of the PointNeRF Autodecoder, run the following command:

python train_pointnerf.py --config configs/npcd_srncars.yaml --output /tmp/npcd_train/srn_cars/pointnerf

To evaluate the resulting model, run the following command:

python eval_pointnerf.py --config configs/npcd_srncars.yaml --weights /tmp/npcd_train/srn_cars/pointnerf/weights_only_checkpoints_dir/pointnerf-iter-002197500.pt --output /tmp/npcd_train/srn_cars/pointnerf/eval

The result of this evaluation is a PSNR of 30.3.
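If training is stopped at a different iteration, the checkpoint file name will differ from the one used above. The following sketch picks the checkpoint with the highest iteration number from a directory. It assumes that checkpoint names follow the pattern `<prefix>-iter-<digits>.pt`, which is inferred from the file names in this README and not confirmed by the training code. `latest_checkpoint` is a hypothetical helper.

```python
import re
from pathlib import Path

# Assumed pattern, inferred from names such as pointnerf-iter-002197500.pt.
CHECKPOINT_RE = re.compile(r"-iter-(\d+)\.pt$")


def latest_checkpoint(checkpoint_dir):
    """Return the checkpoint path with the highest iteration, or None."""
    best = None
    best_iter = -1
    for path in Path(checkpoint_dir).glob("*.pt"):
        match = CHECKPOINT_RE.search(path.name)
        if match and int(match.group(1)) > best_iter:
            best, best_iter = path, int(match.group(1))
    return best
```

The returned path can then be passed to the --weights flag of eval_pointnerf.py.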

To run the training of the diffusion model on the resulting neural point clouds from the previous stage, run the following command:

python train_diffusion.py --config configs/npcd_srncars.yaml --pointnerf_weights /tmp/npcd_train/srn_cars/pointnerf/weights_only_checkpoints_dir/pointnerf-iter-002197500.pt --output /tmp/npcd_train/srn_cars/diffusion

To run the evaluation of the unconditional generation quality for the resulting model, run the following command:

python eval_diffusion.py --config configs/npcd_srncars.yaml --weights /tmp/npcd_train/srn_cars/diffusion/weights_only_checkpoints_dir/npcd-ema_power1_0min0_9999max0_9999buffers0-iter-001800000.pt --output /tmp/npcd_train/srn_cars/diffusion/eval

Note that this requires the same evaluation setup as described above. The result of this evaluation is a FID of 27.6.
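When using a custom output directory, the paths in the two training commands have to stay consistent, because the second stage reads the checkpoint written by the first. The sketch below derives both commands from a single output root. `training_commands` is a hypothetical helper; the script names, flags, and default checkpoint name are the ones shown in the commands above.

```python
import shlex
from pathlib import PurePosixPath


def training_commands(output_root, config="configs/npcd_srncars.yaml",
                      pointnerf_checkpoint="pointnerf-iter-002197500.pt"):
    """Build the argv lists for both training stages under one output root."""
    root = PurePosixPath(output_root)
    pointnerf_out = root / "pointnerf"
    # The diffusion stage consumes the checkpoint written by the first stage.
    weights = pointnerf_out / "weights_only_checkpoints_dir" / pointnerf_checkpoint
    stage1 = ["python", "train_pointnerf.py", "--config", config,
              "--output", str(pointnerf_out)]
    stage2 = ["python", "train_diffusion.py", "--config", config,
              "--pointnerf_weights", str(weights),
              "--output", str(root / "diffusion")]
    return [stage1, stage2]


# Print the commands for the default output directory used in this README.
for argv in training_commands("/tmp/npcd_train/srn_cars"):
    print(shlex.join(argv))
```

Each list can be passed to `subprocess.run(argv, check=True)` from the repository root, running the second stage only after the first has finished.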

If you make use of our work, please cite:

@inproceedings{SchroeppelCVPR2024,

Title = {Neural Point Cloud Diffusion for Disentangled 3D Shape and Appearance Generation},

Author = {Philipp Schr\"oppel and Christopher Wewer and Jan Eric Lenssen and Eddy Ilg and Thomas Brox},

Booktitle = {CVPR},

Year = {2024}

}