-

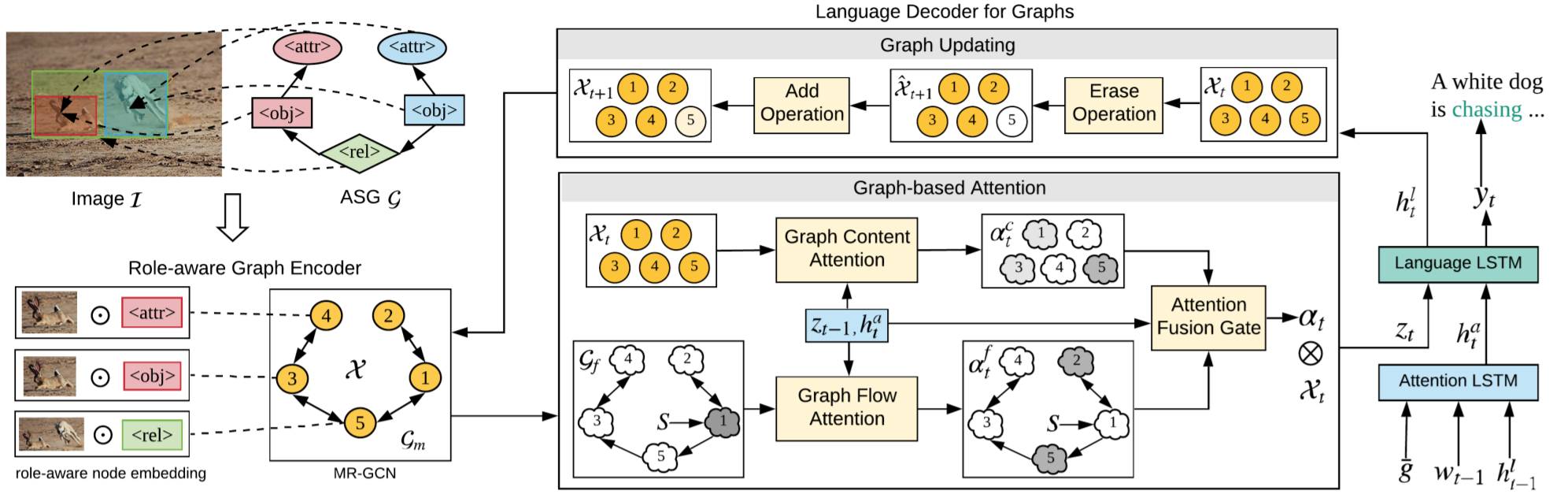

Say As You Wish: Fine-Grained Control of Image Caption Generation With Abstract Scene Graphs

oral, Shizhe Chen -

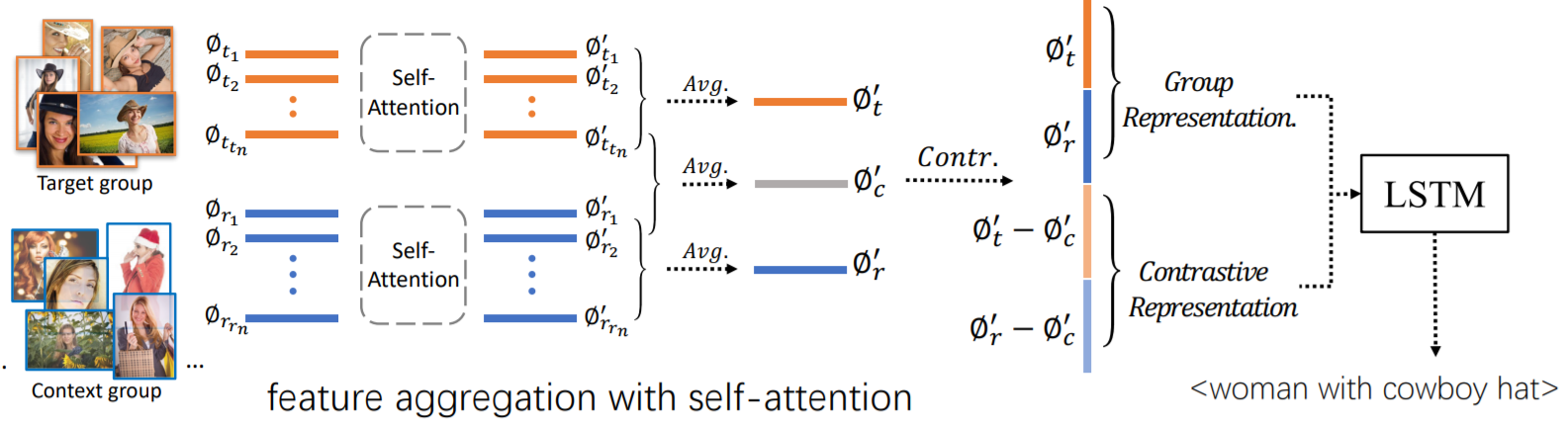

Context-Aware Group Captioning via Self-Attention and Contrastive Features

-

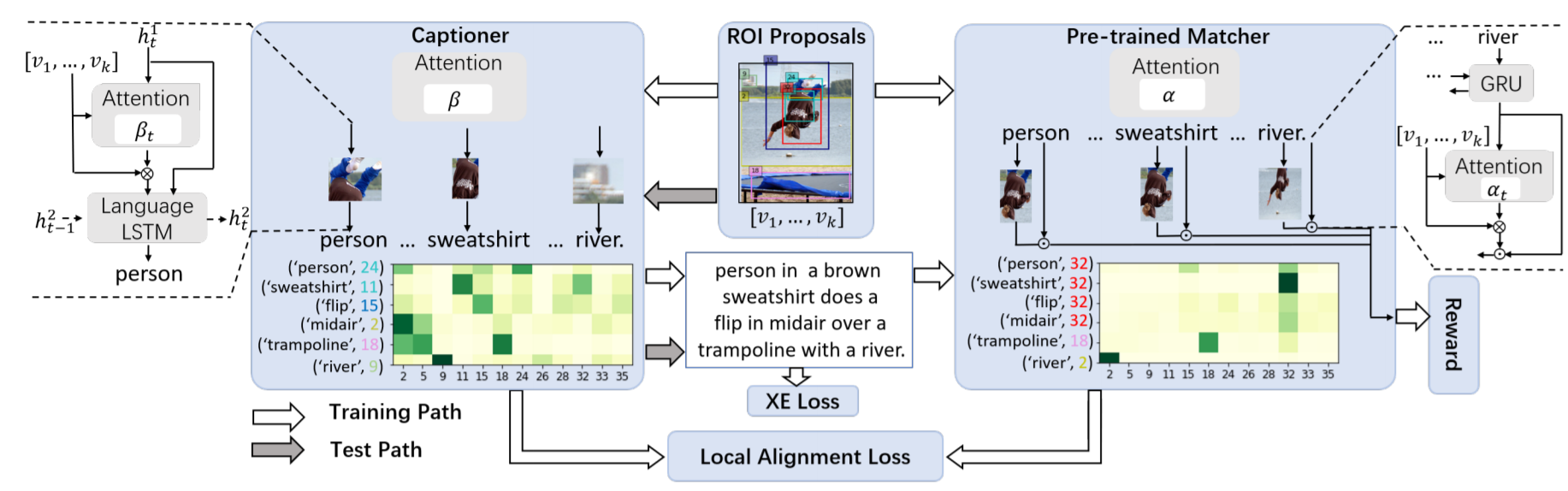

More Grounded Image Captioning by Distilling Image-Text Matching Model

-

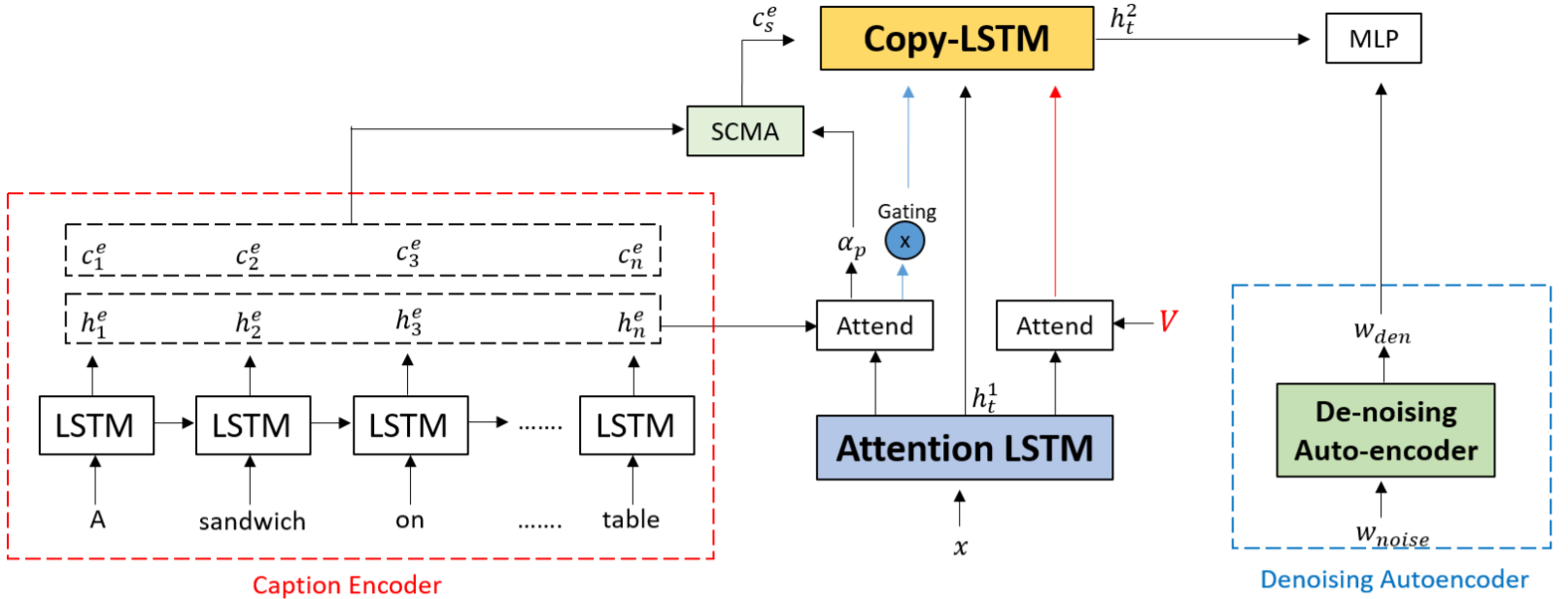

Show, Edit and Tell: A Framework for Editing Image Captions

-

Normalized and Geometry-Aware Self-Attention Network for Image Captioning

-

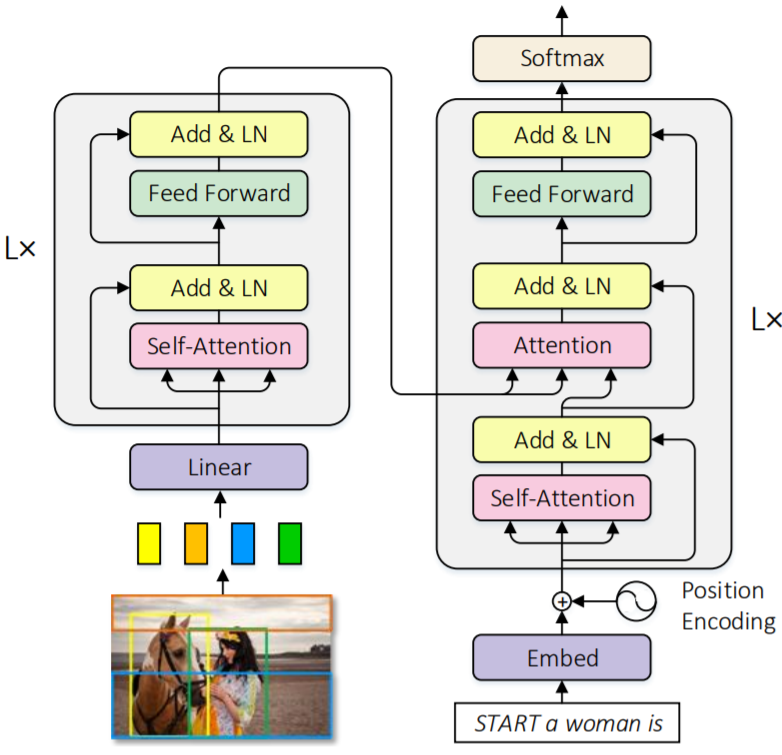

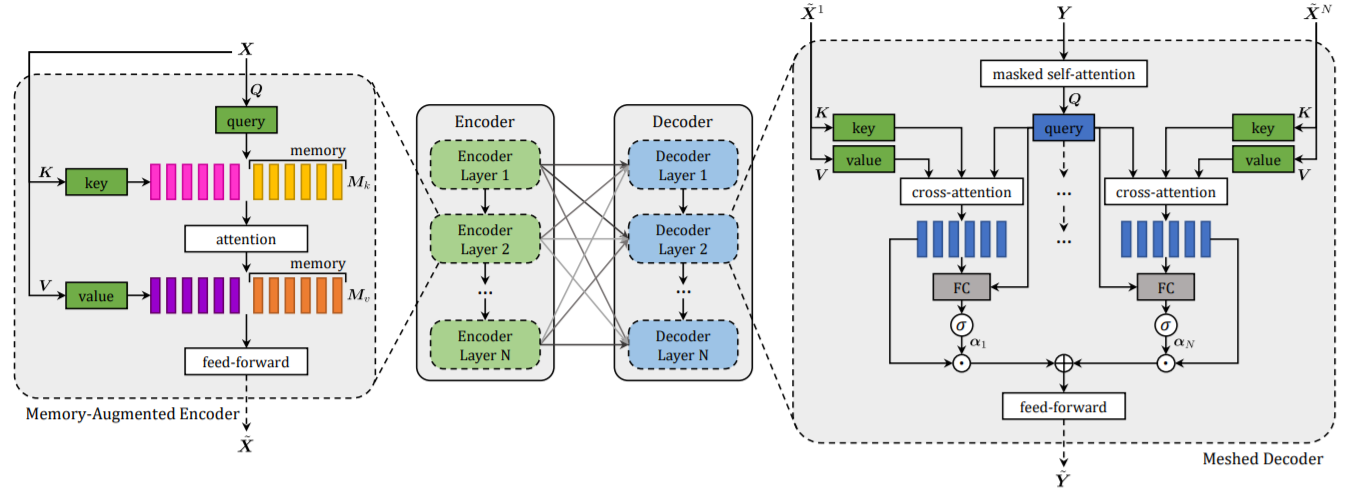

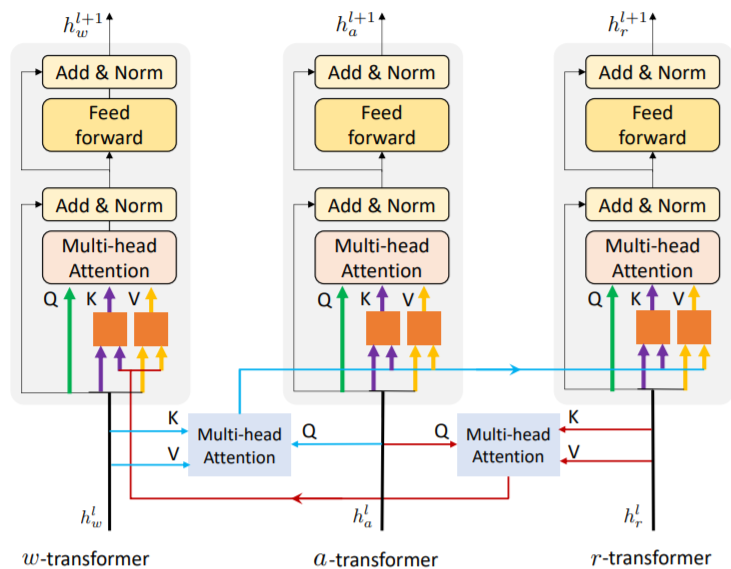

Meshed-Memory Transformer for Image Captioning

-

Better Captioning With Sequence-Level Exploration

Jin Qin

-

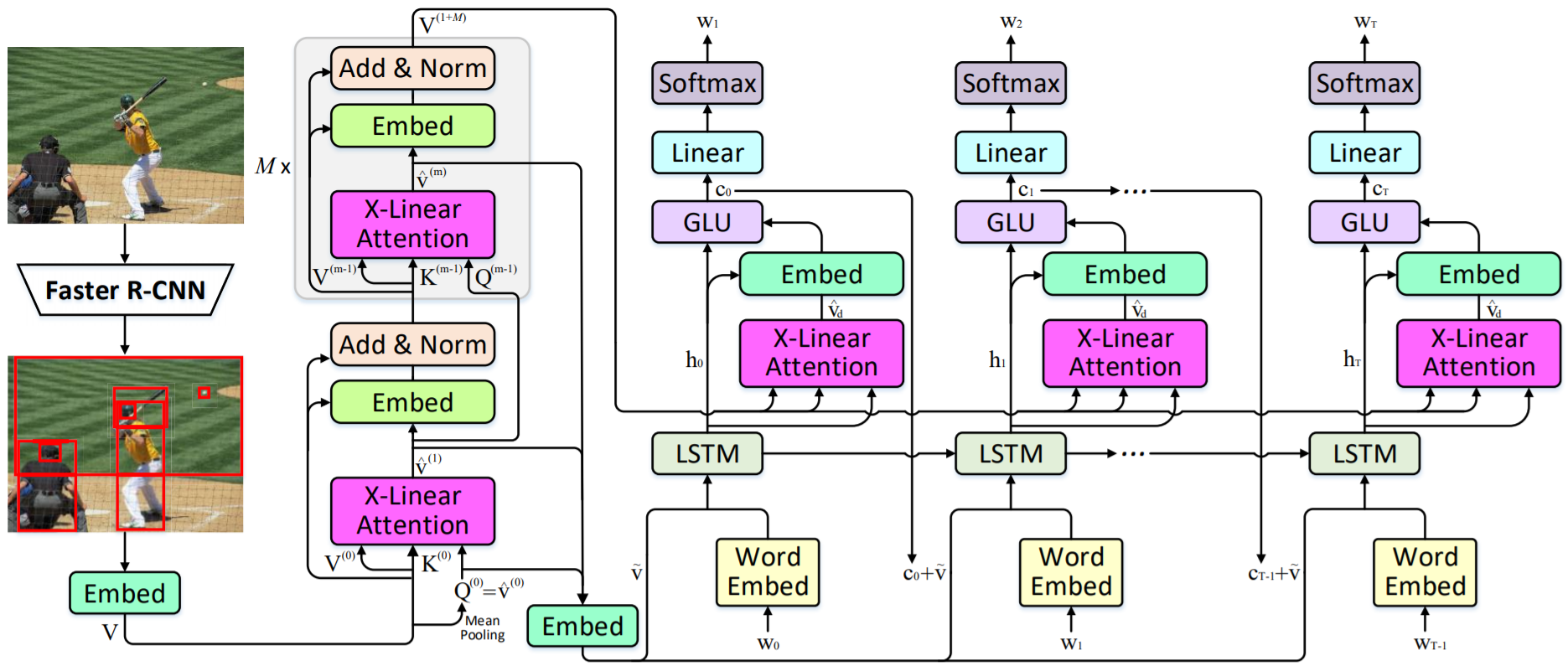

X-Linear Attention Networks for Image Captioning

JD AI -

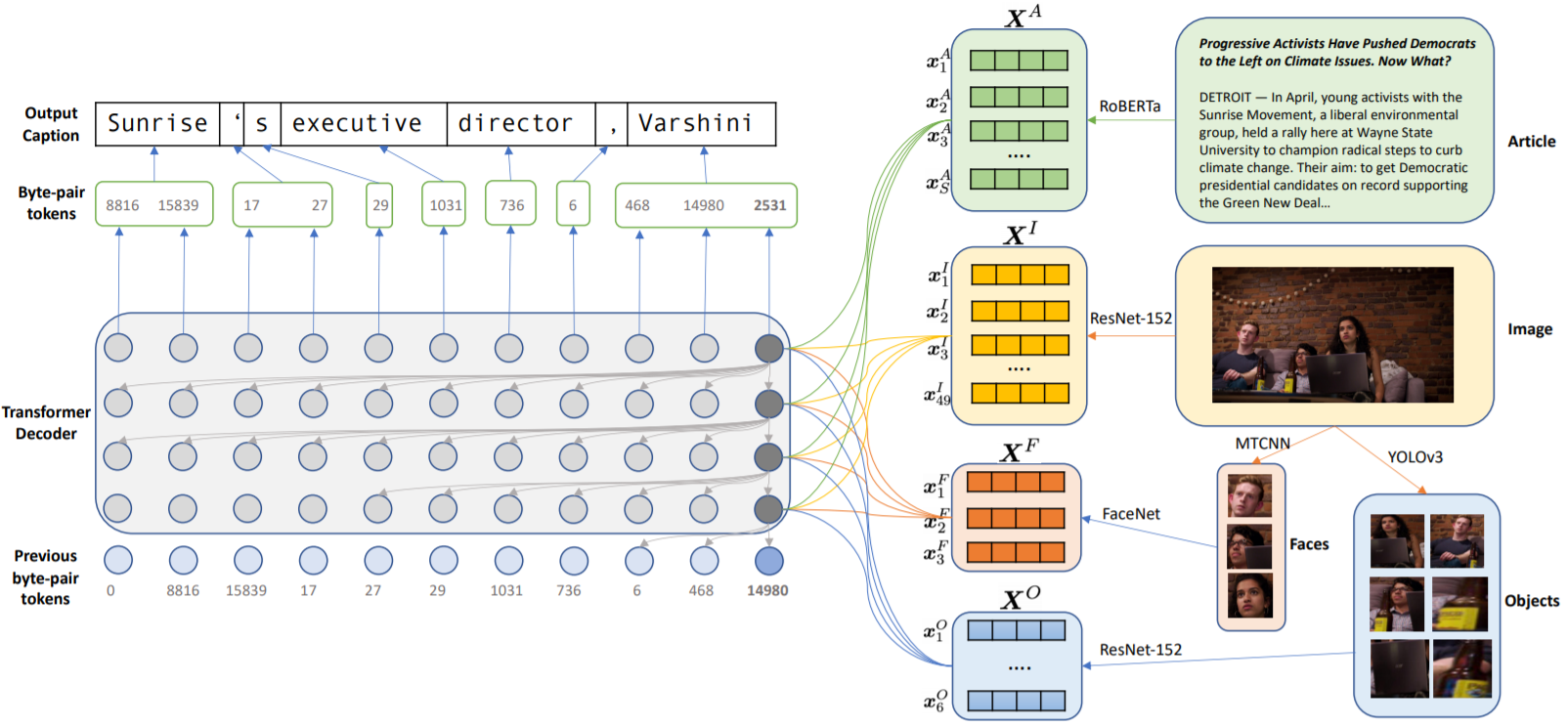

Transform and Tell: Entity-Aware News Image Captioning

-

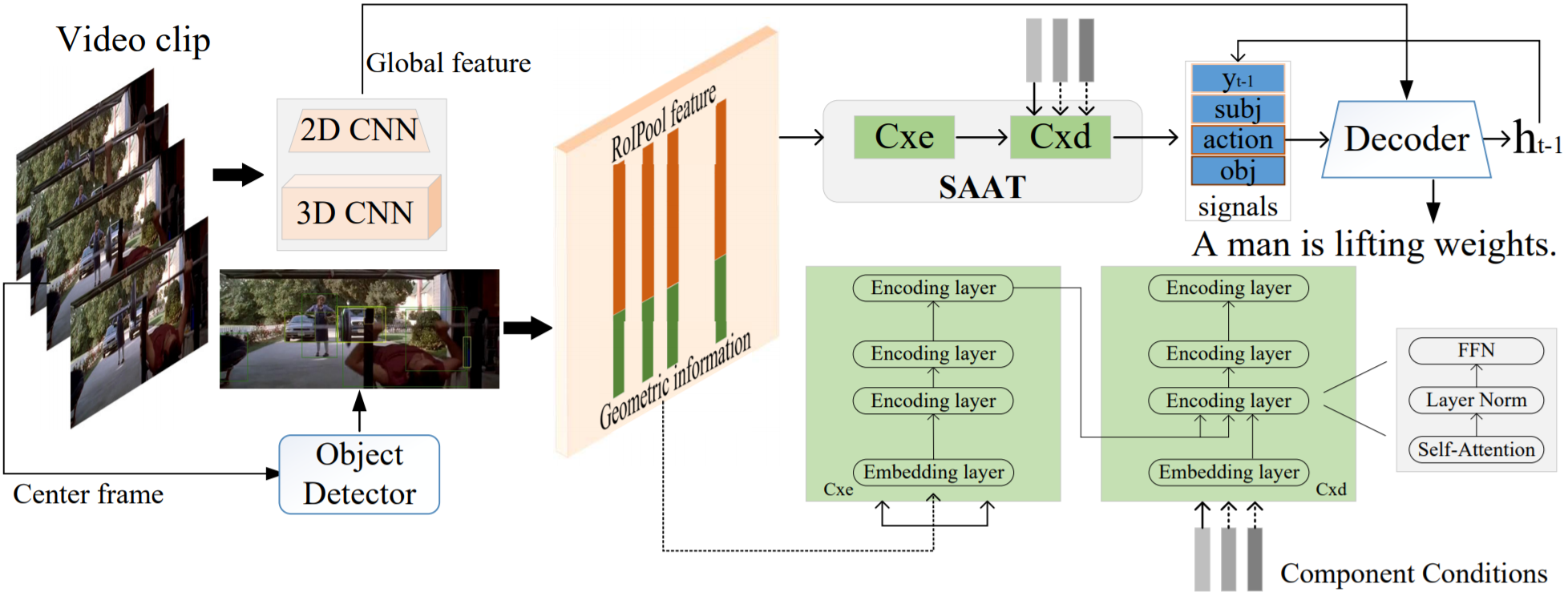

Syntax-Aware Action Targeting for Video Captioning

Dacheng Tao -

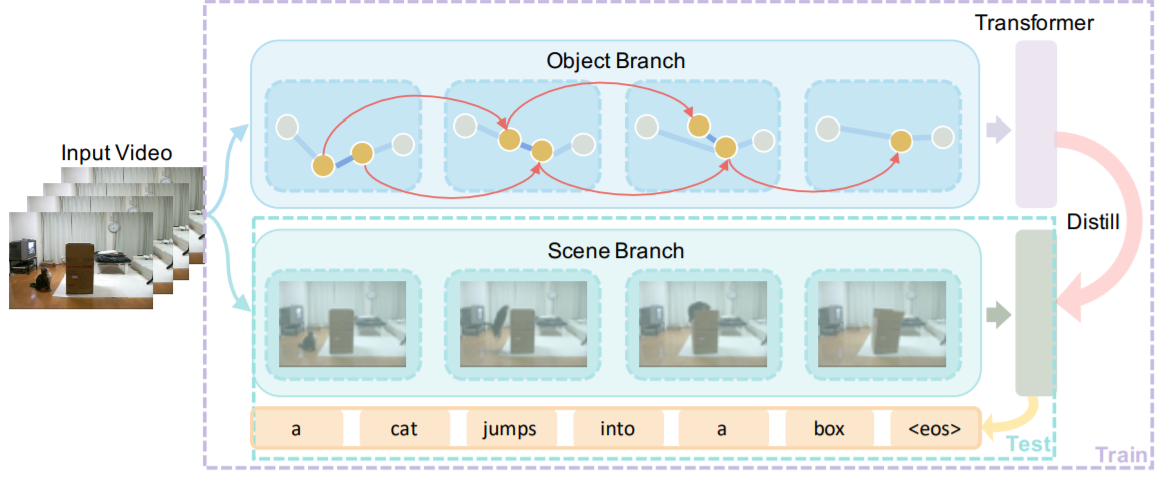

Spatio-Temporal Graph for Video Captioning With Knowledge Distillation

-

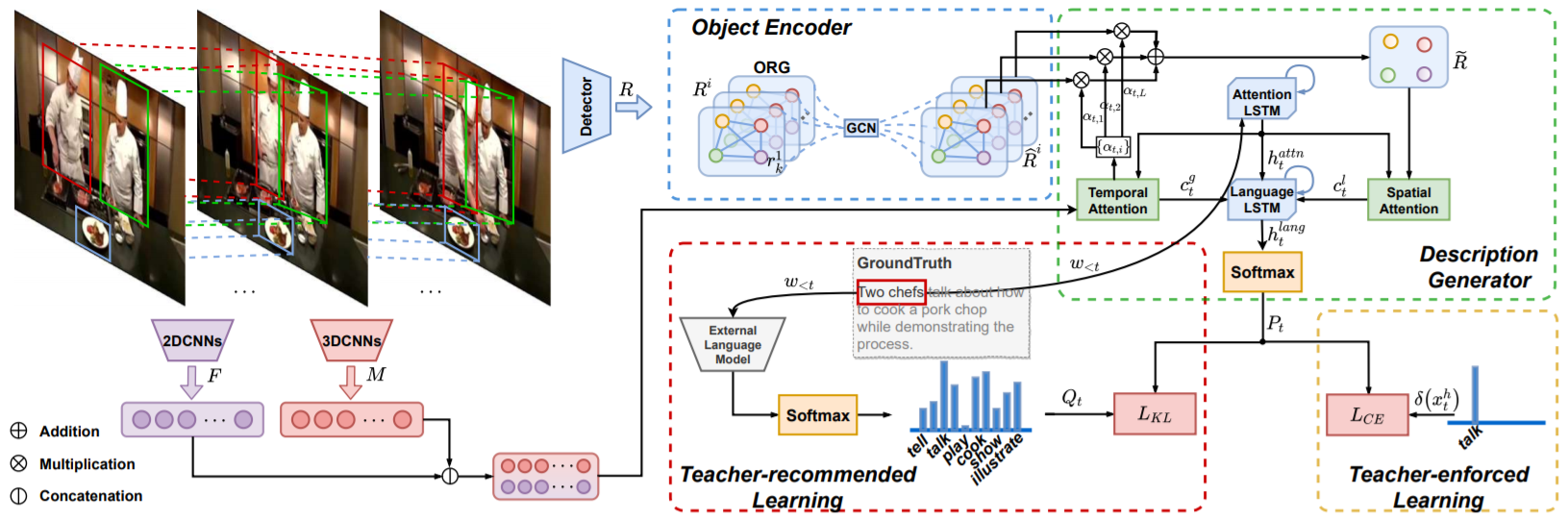

Object Relational Graph With Teacher-Recommended Learning for Video Captioning

-

ActBERT: Learning Global-Local Video-Text Representations

oral -

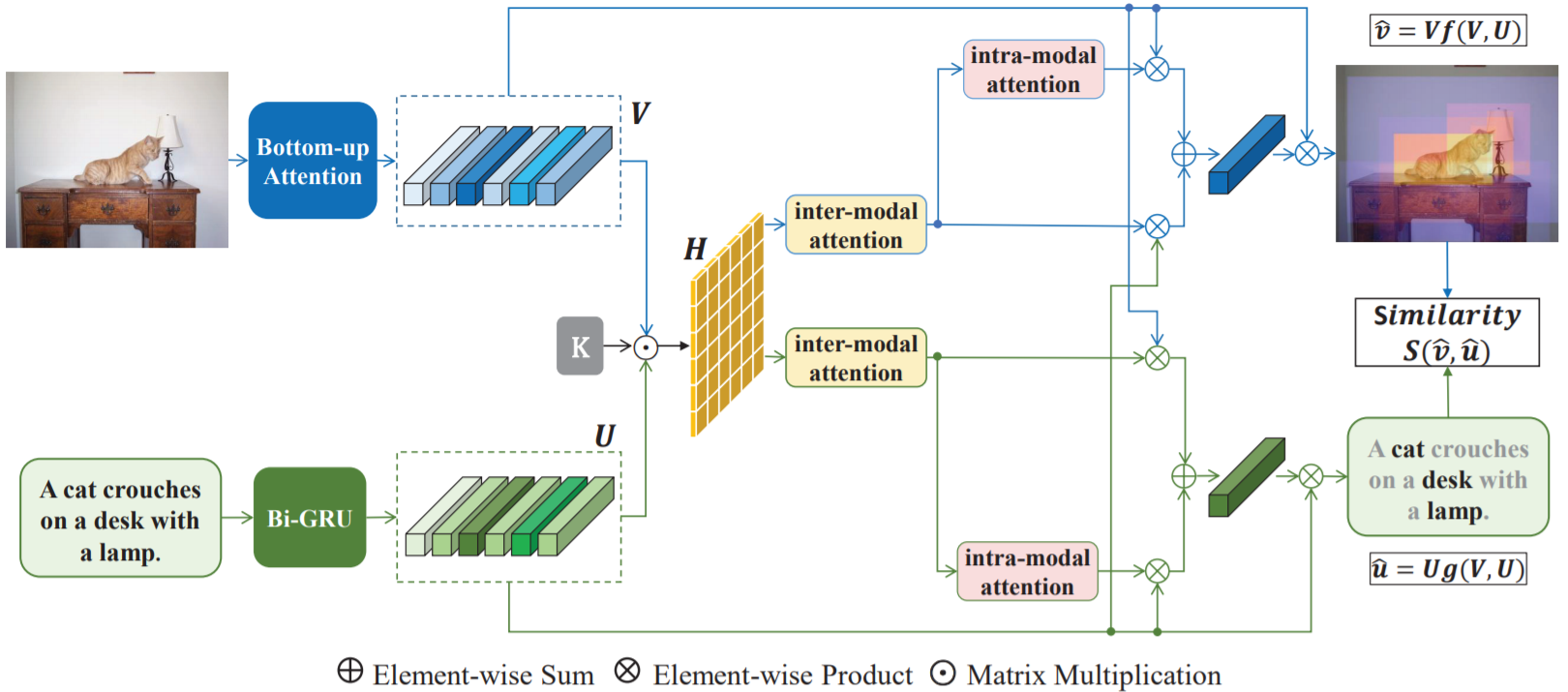

Context-Aware Attention Network for Image-Text Retrieval

-

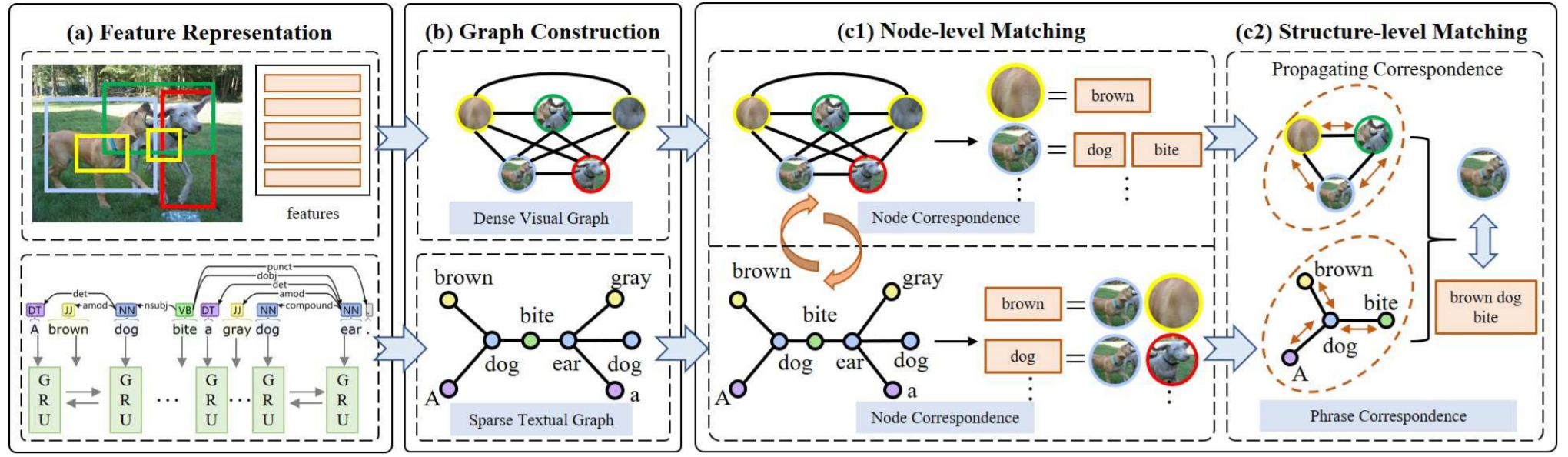

Graph Structured Network for Image-Text Matching

-

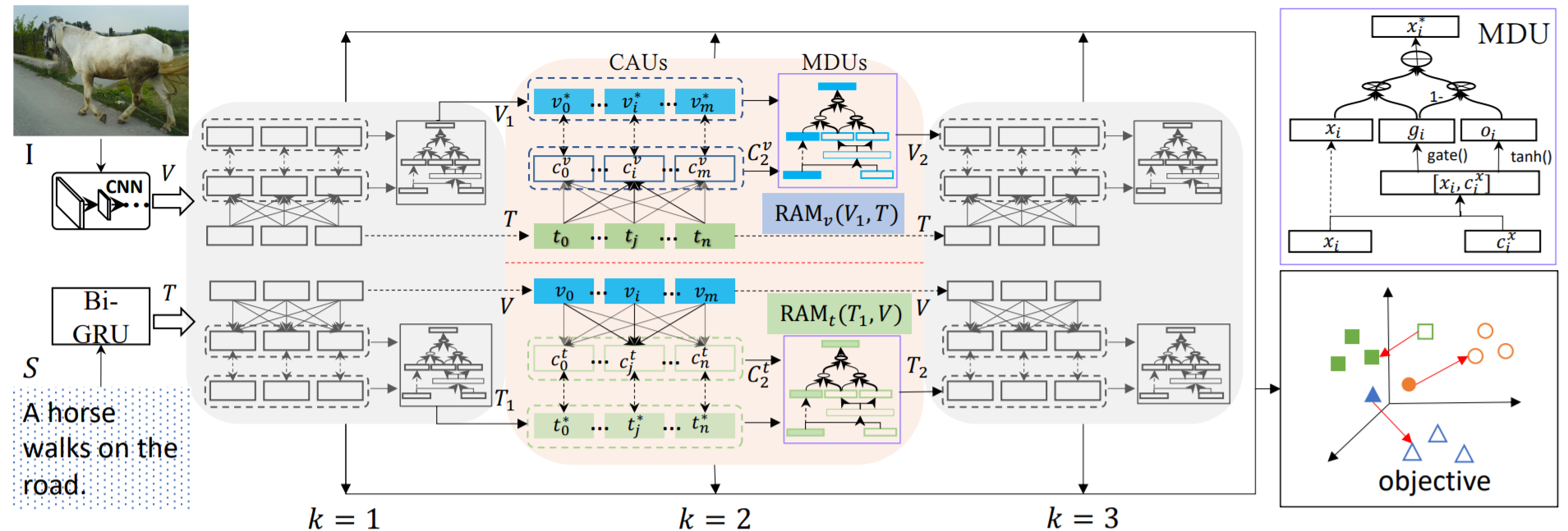

IMRAM: Iterative Matching With Recurrent Attention Memory for Cross-Modal Image-Text Retrieval

-

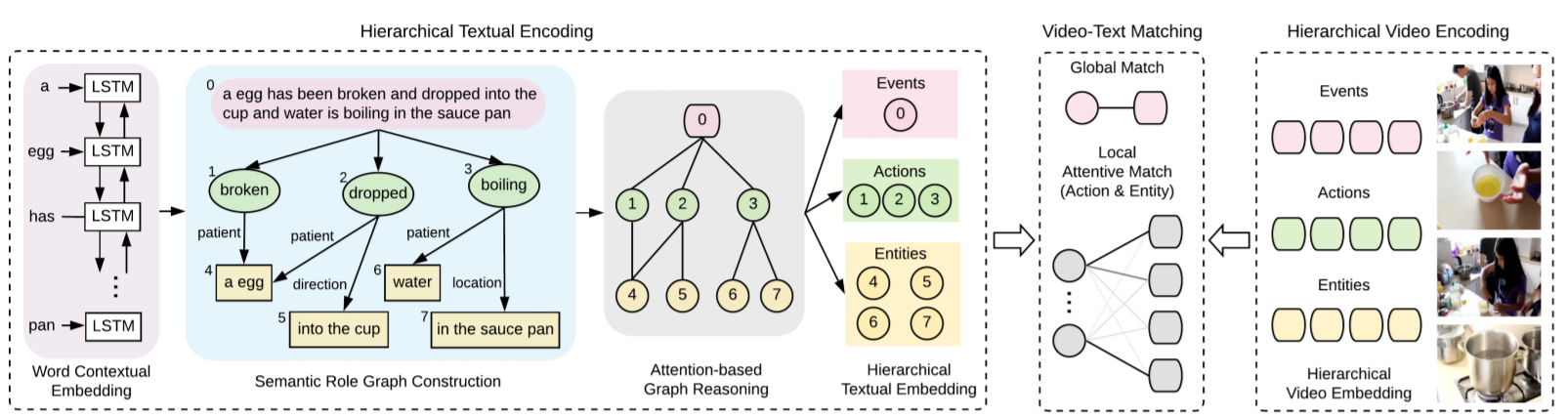

Fine-Grained Video-Text Retrieval With Hierarchical Graph Reasoning

Shizhe Chen -

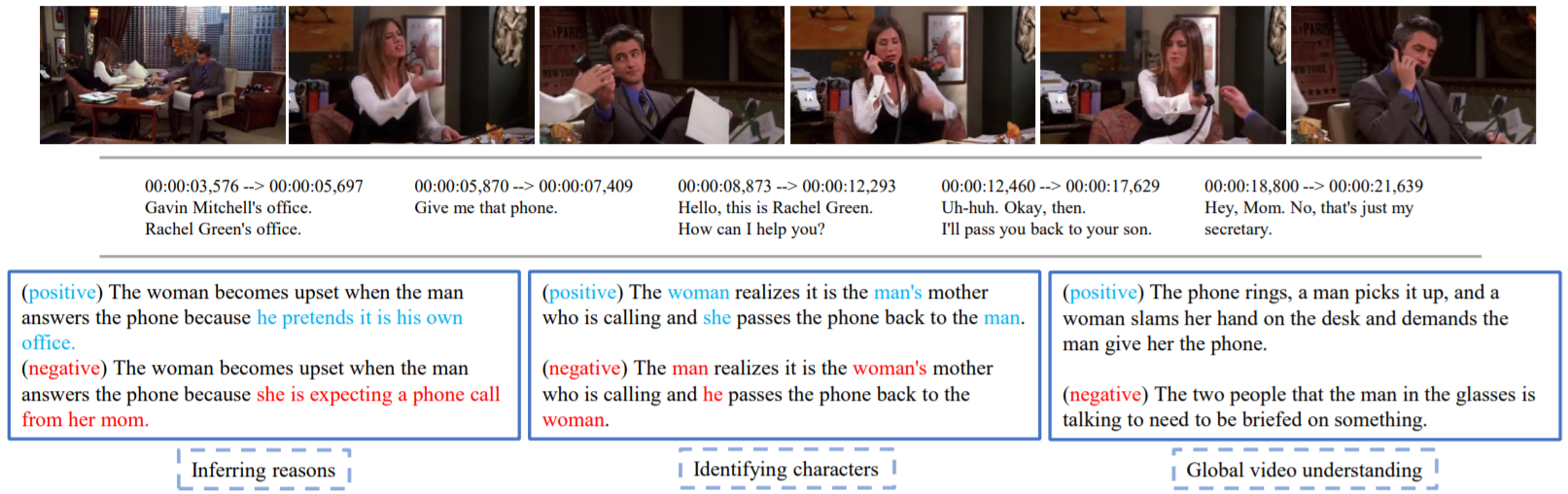

VIOLIN: A Large-Scale Dataset for Video-and-Language Inference

-

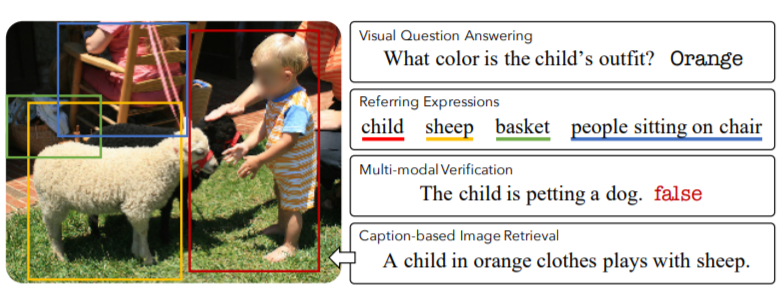

12-in-1: Multi-Task Vision and Language Representation Learning

Jiasen Lu

-

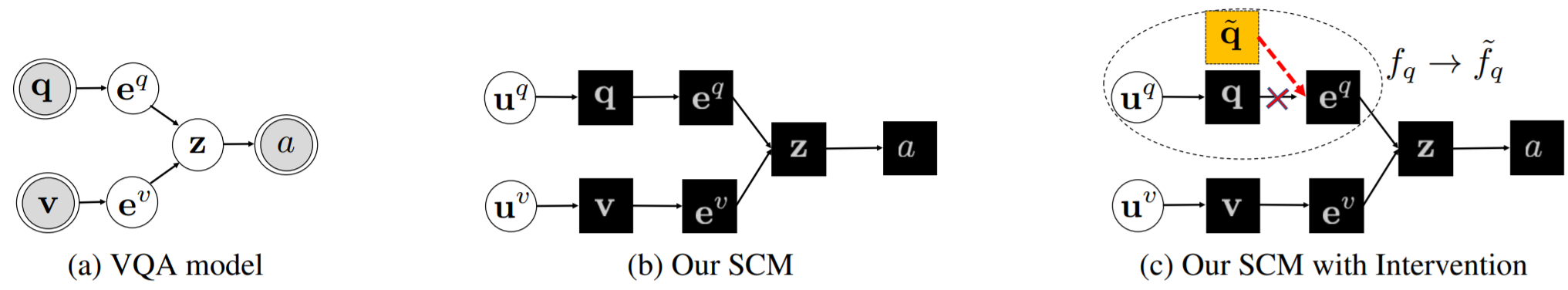

Counterfactual Vision and Language Learning

oral -

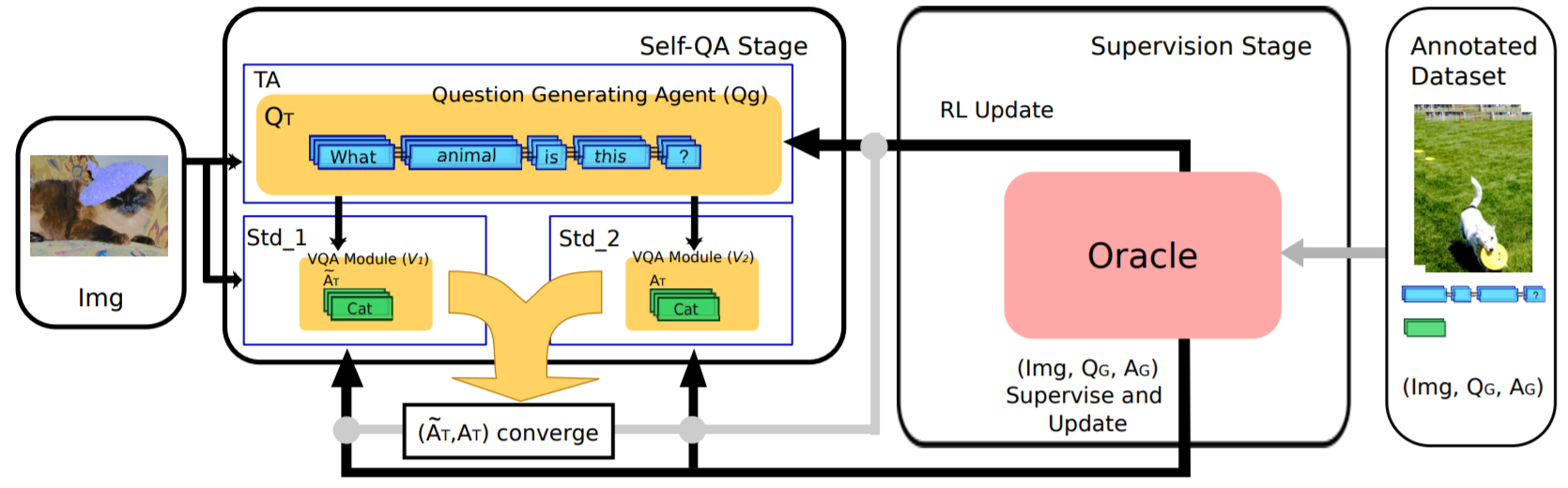

TA-Student VQA: Multi-Agents Training by Self-Questioning

oral -

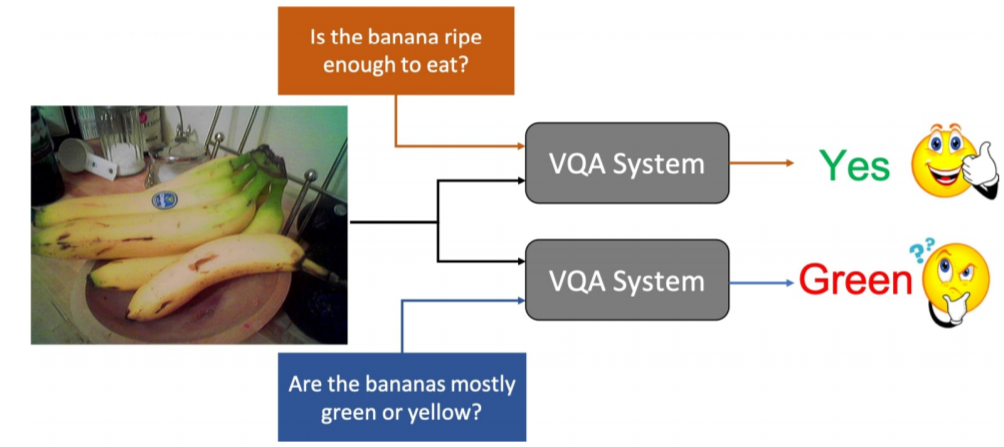

SQuINTing at VQA Models: Introspecting VQA Models With Sub-Questions

oral -

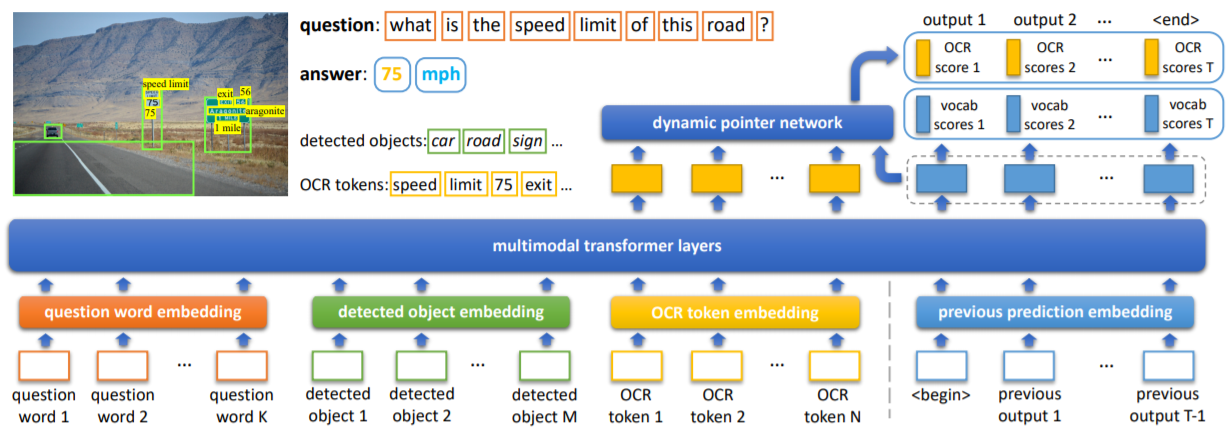

Iterative Answer Prediction With Pointer-Augmented Multimodal Transformers for TextVQA

oral -

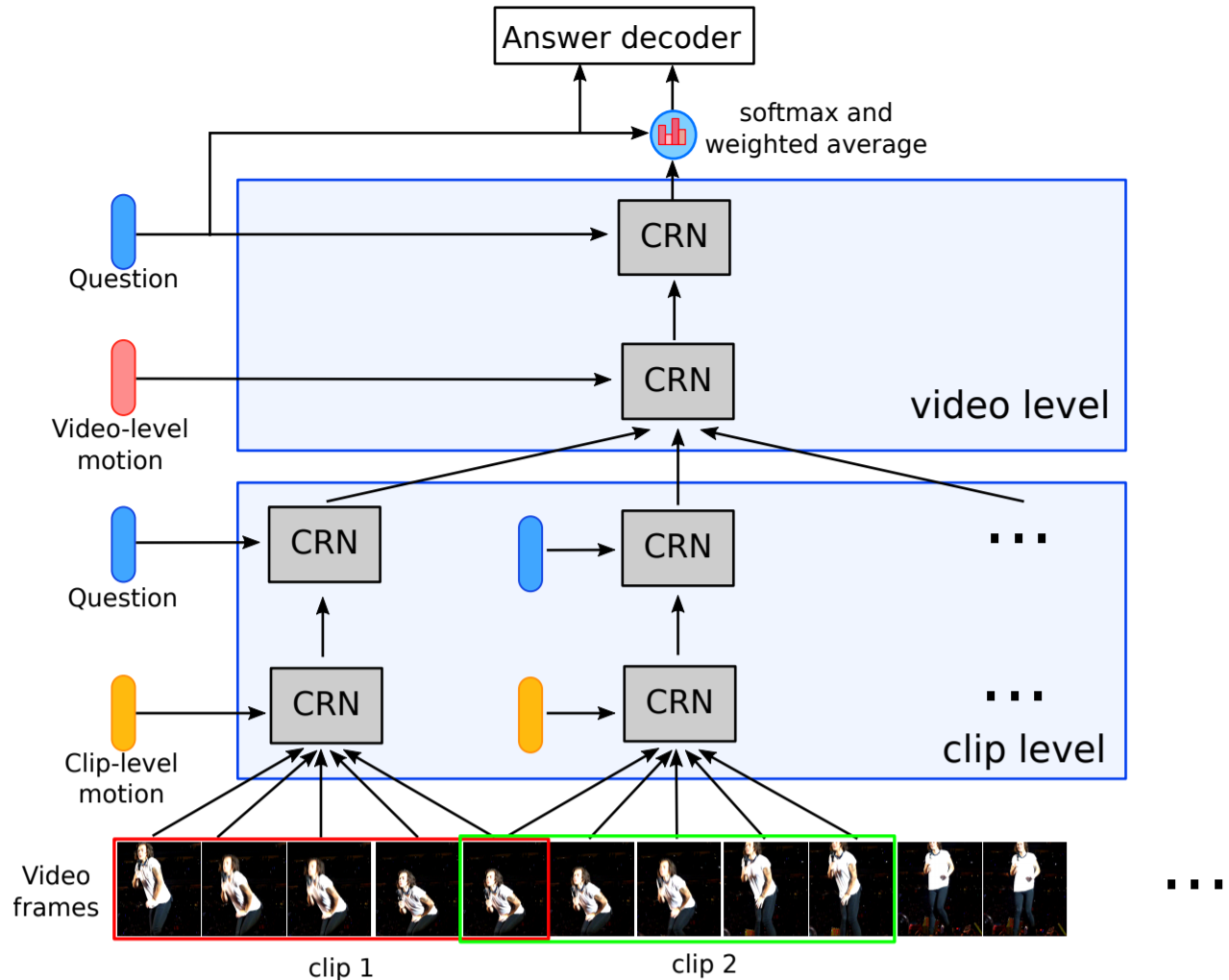

Hierarchical Conditional Relation Networks for Video Question Answering

oral -

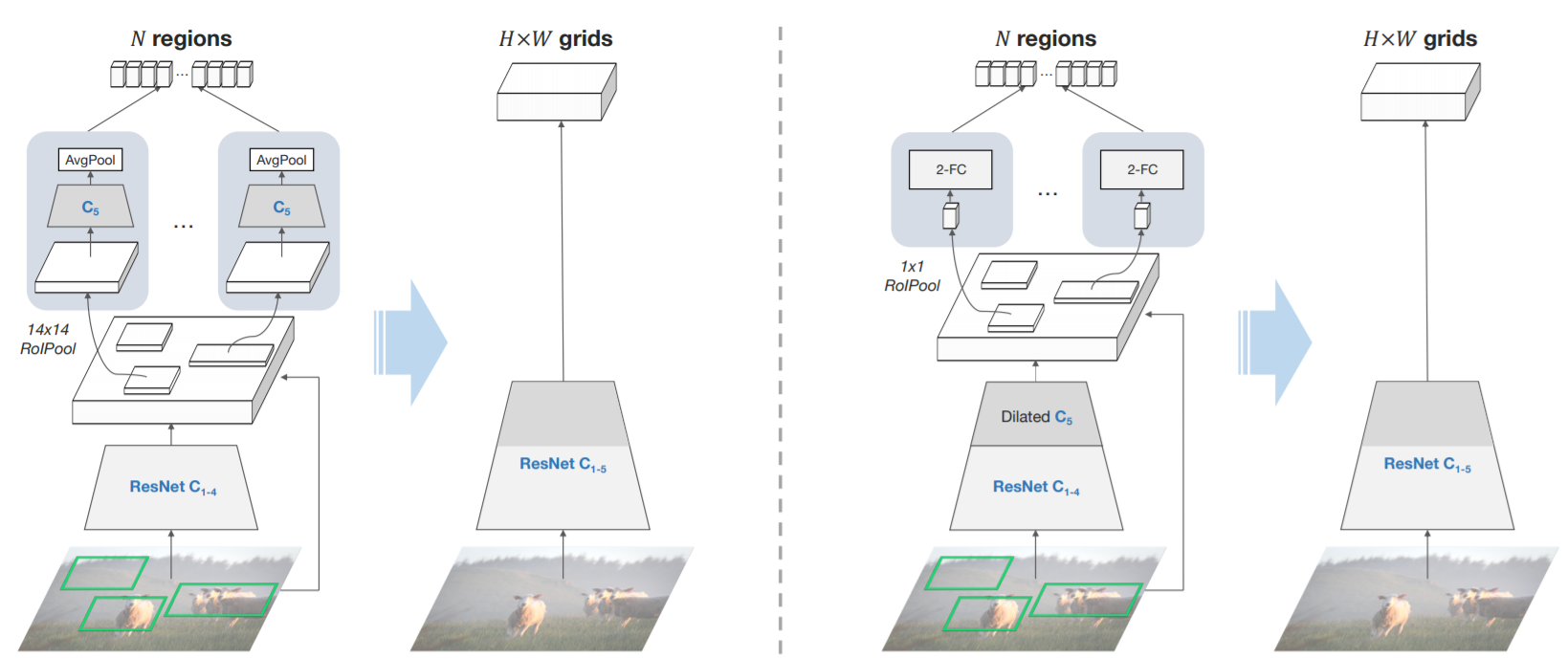

In Defense of Grid Features for Visual Question Answering

-

VQA with No Questions-Answers Training

-

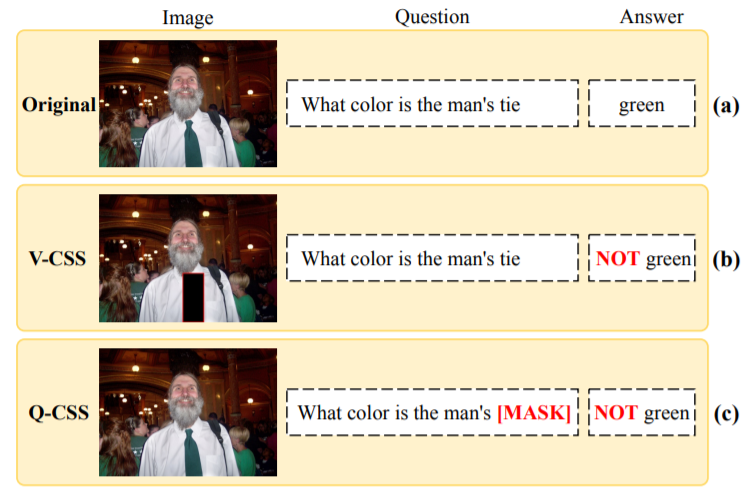

Counterfactual Samples Synthesizing for Robust Visual Question Answering

-

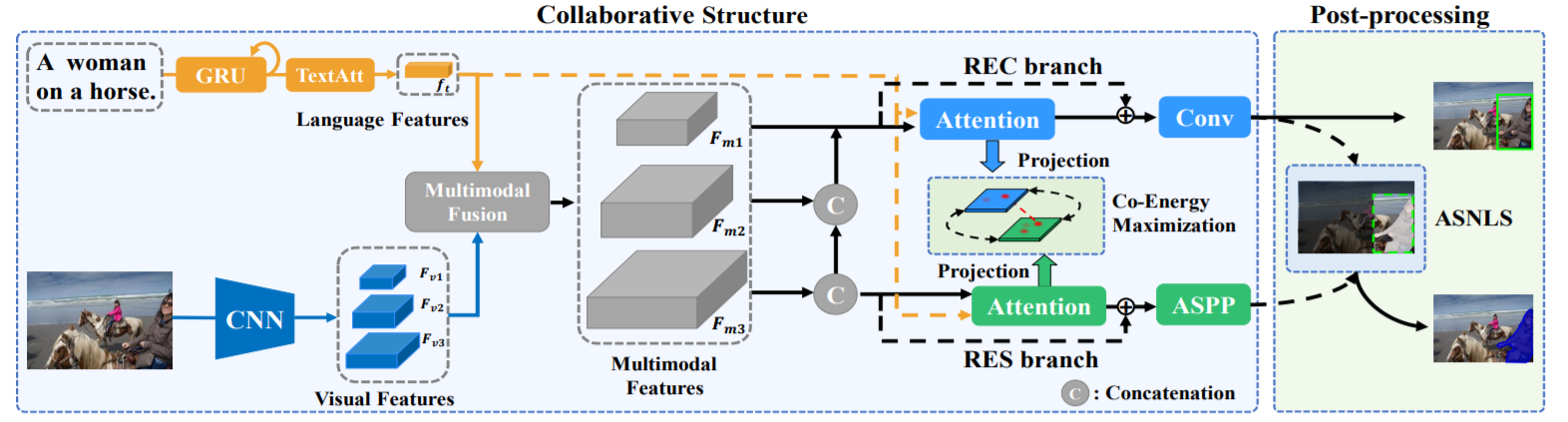

Multi-Task Collaborative Network for Joint Referring Expression Comprehension and Segmentation

oral -

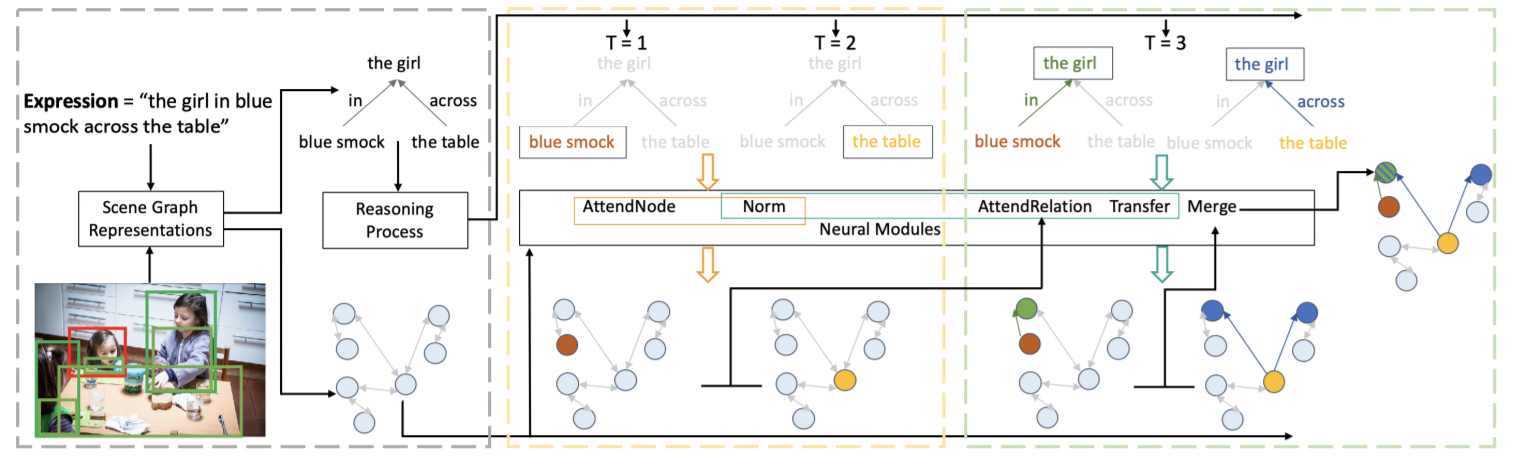

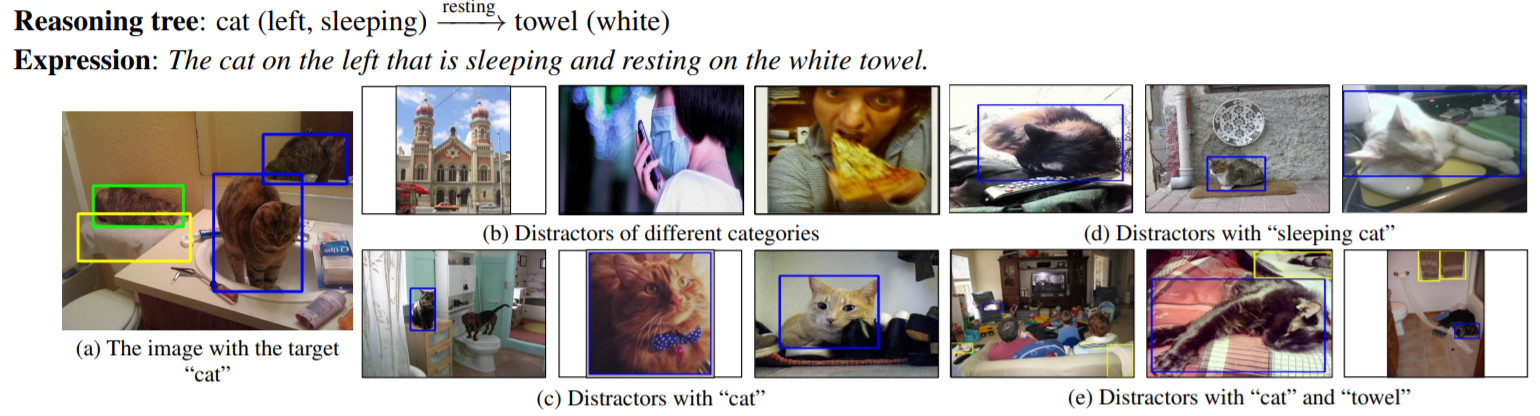

Graph-Structured Referring Expression Reasoning in the Wild

oral -

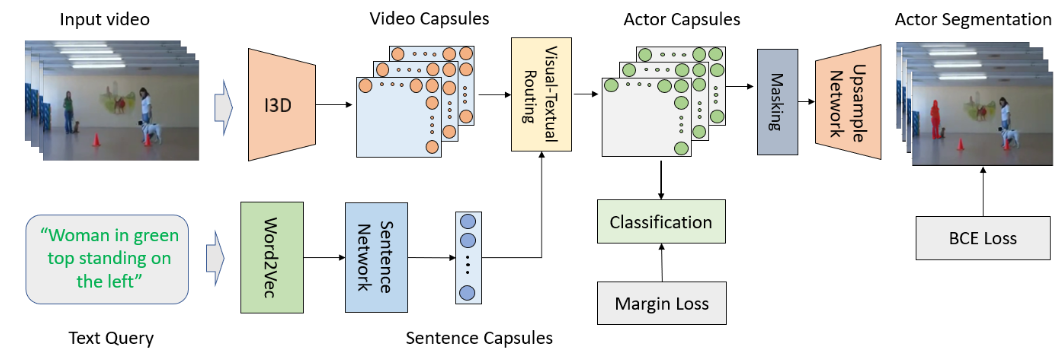

Visual-textual Capsule Routing for Text-based Video Segmentation

oral -

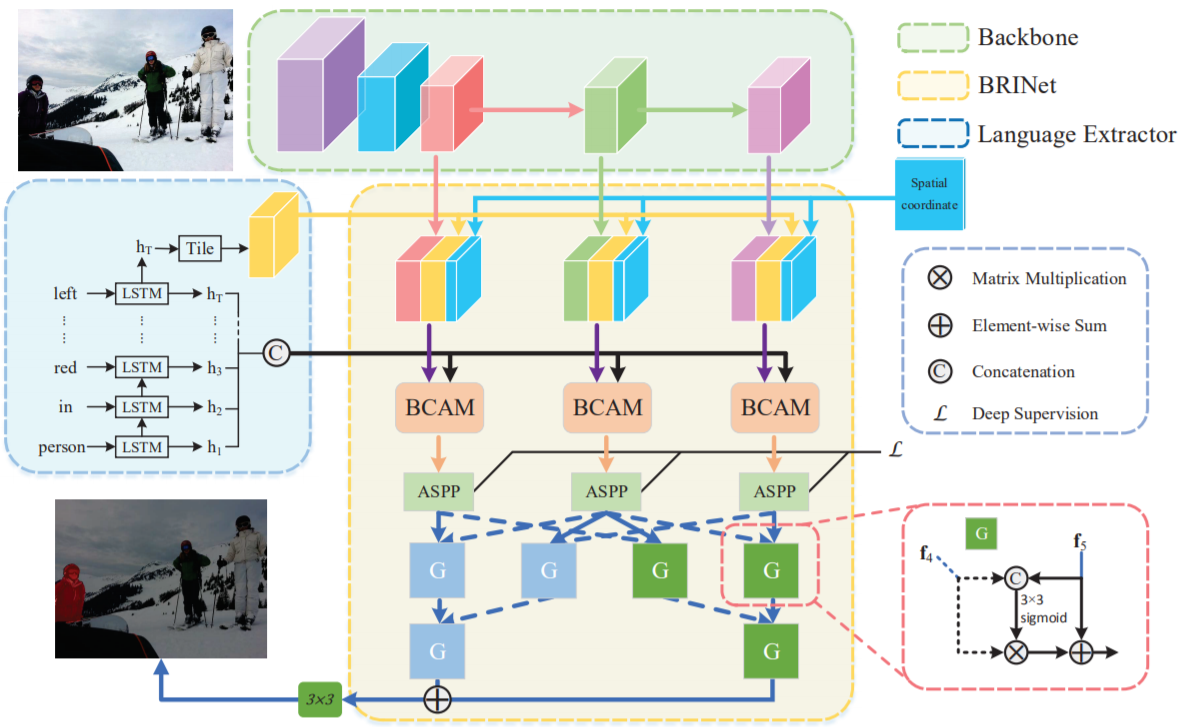

Bi-Directional Relationship Inferring Network for Referring Image Segmentation

Huchuan Lu -

Cops-Ref: A New Dataset and Task on Compositional Referring Expression Comprehension

Qi Wu -

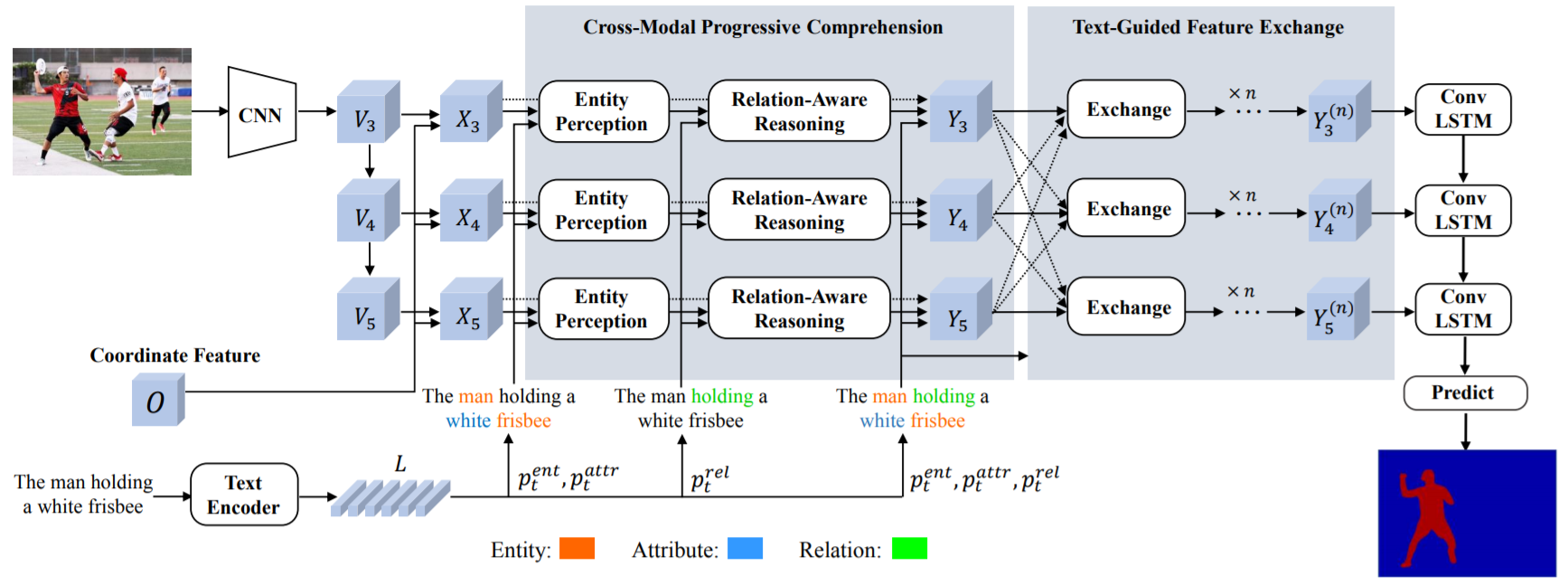

Referring Image Segmentation via Cross-Modal Progressive Comprehension

Si Liu -

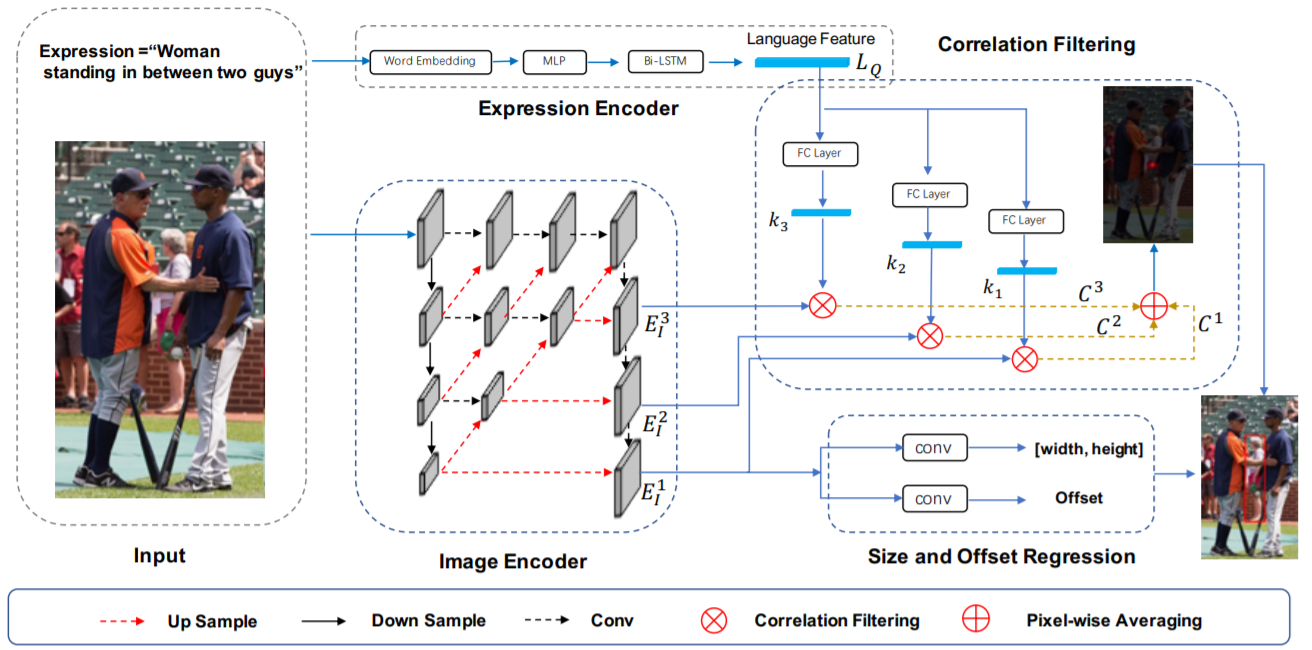

A Real-Time Cross-Modality Correlation Filtering Method for Referring Expression Comprehension

Si Liu -

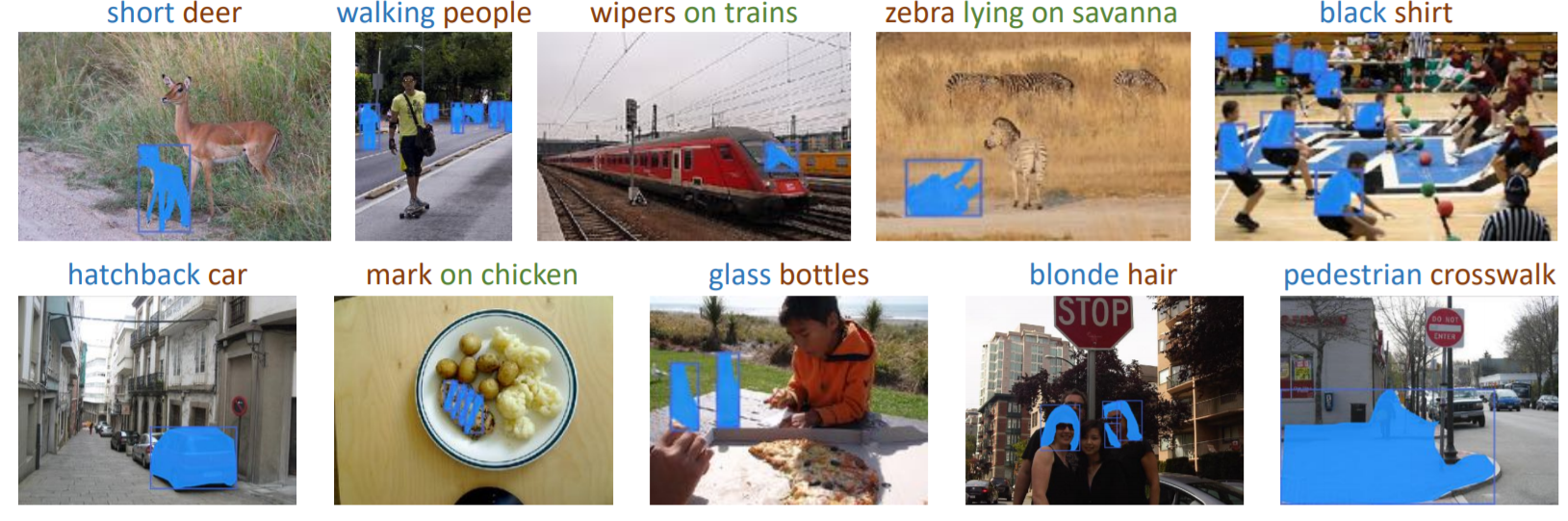

PhraseCut: Language-Based Image Segmentation in the Wild

Adobe

-

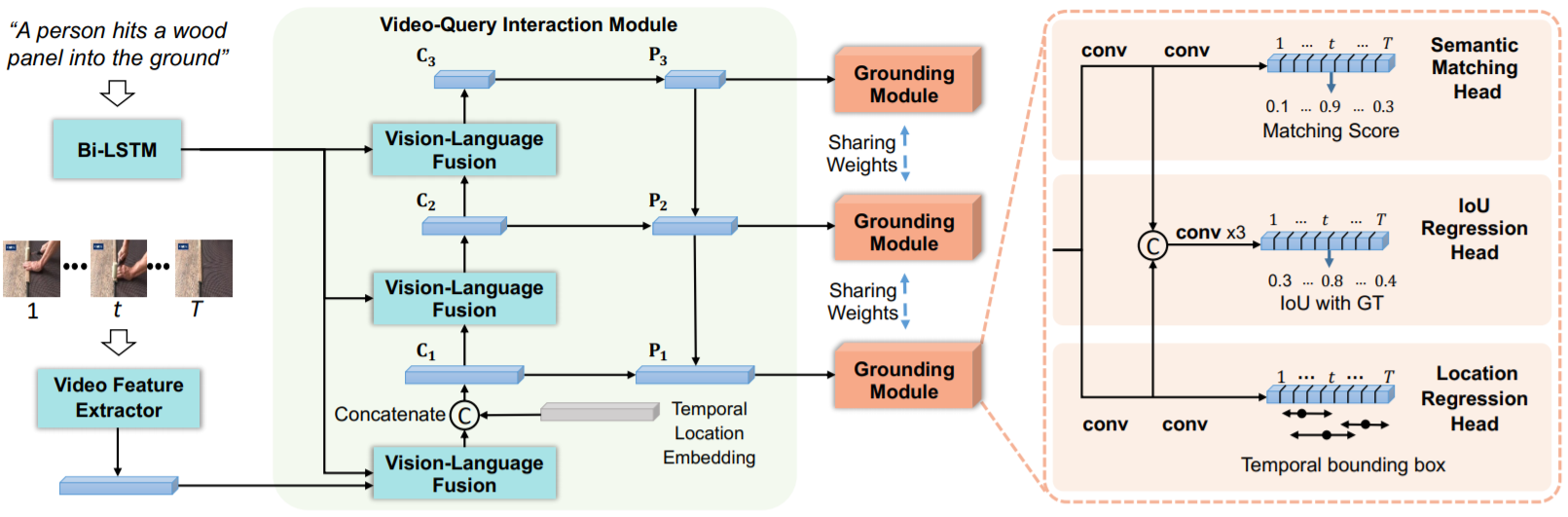

Dense Regression Network for Video Grounding

-

Video Object Grounding using Semantic Roles in Language Description

Arka Sadhu

-

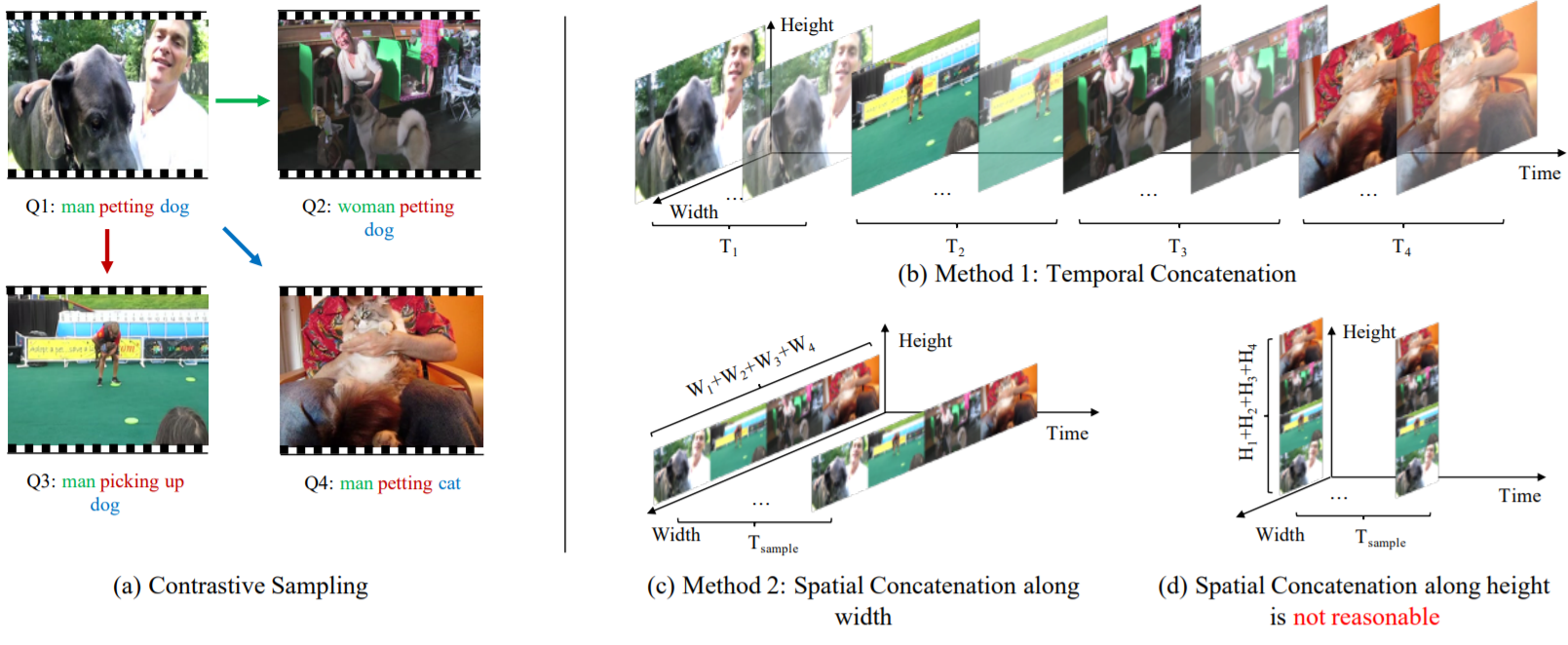

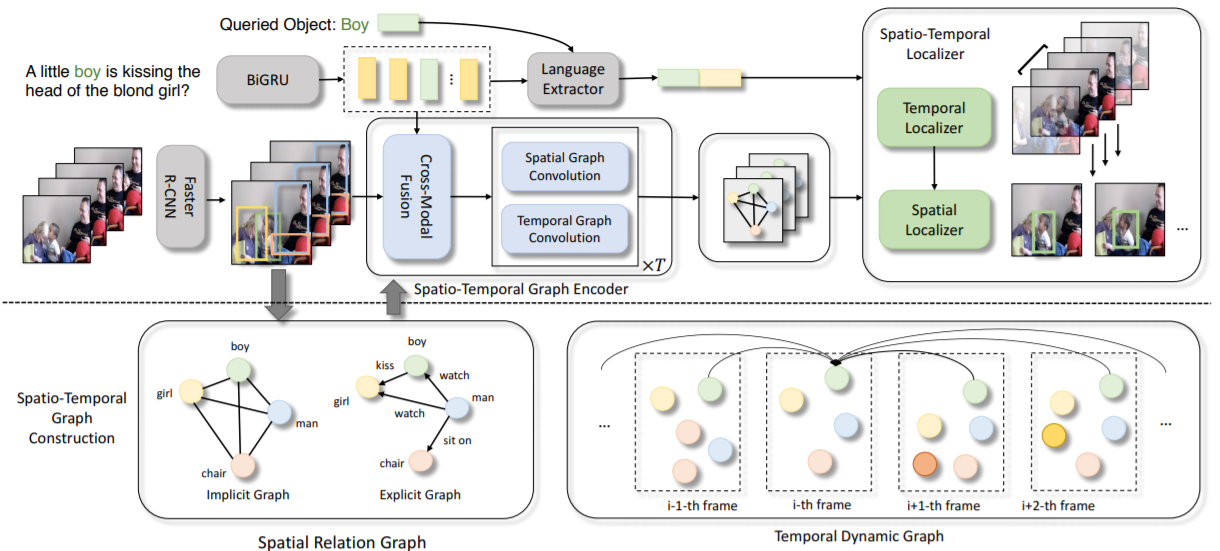

Where Does It Exist: Spatio-Temporal Video Grounding for Multi-Form Sentences

Alibaba

-

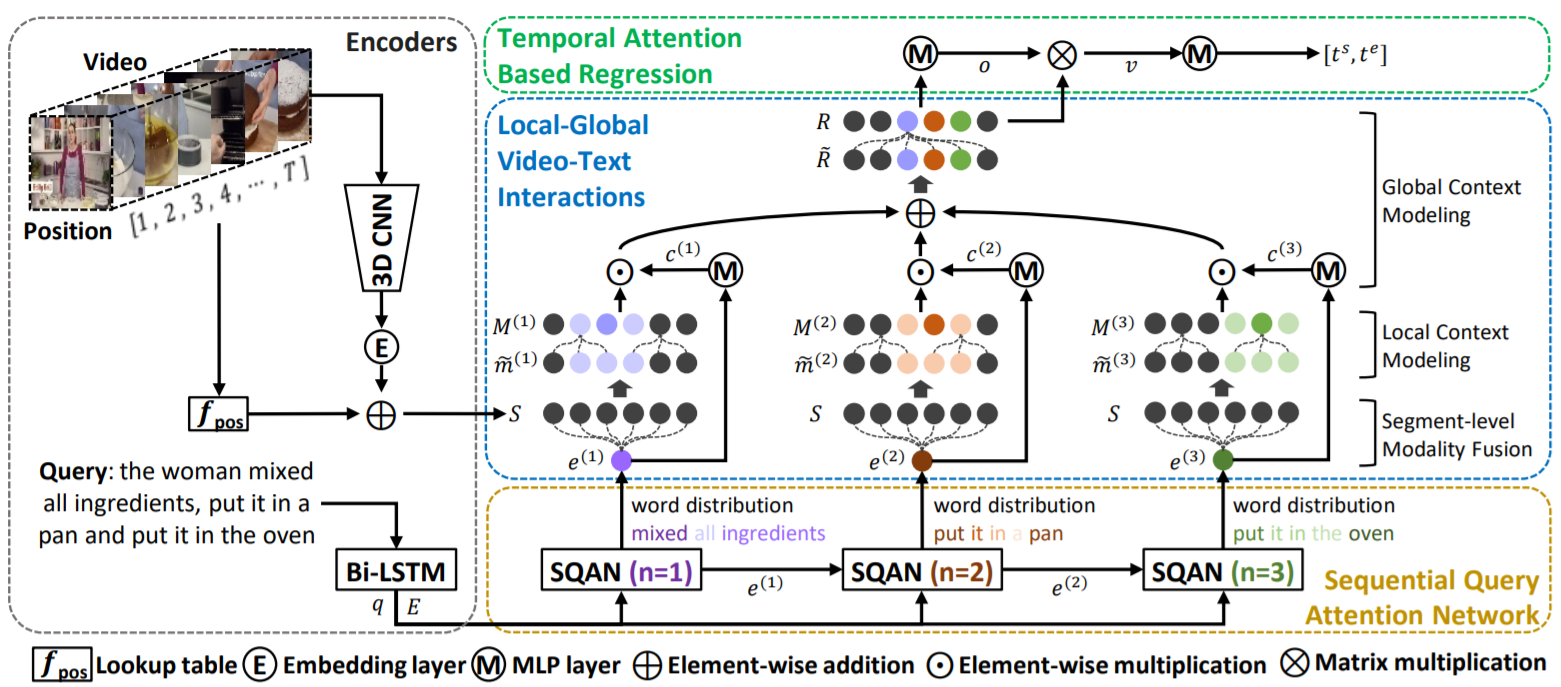

Local-Global Video-Text Interactions for Temporal Grounding

-

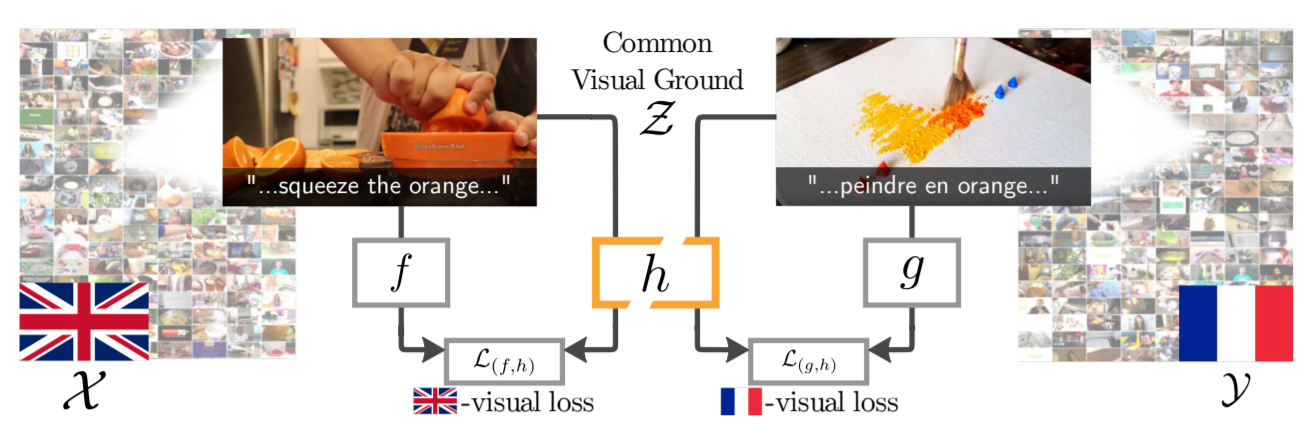

Visual Grounding in Video for Unsupervised Word Translation

-

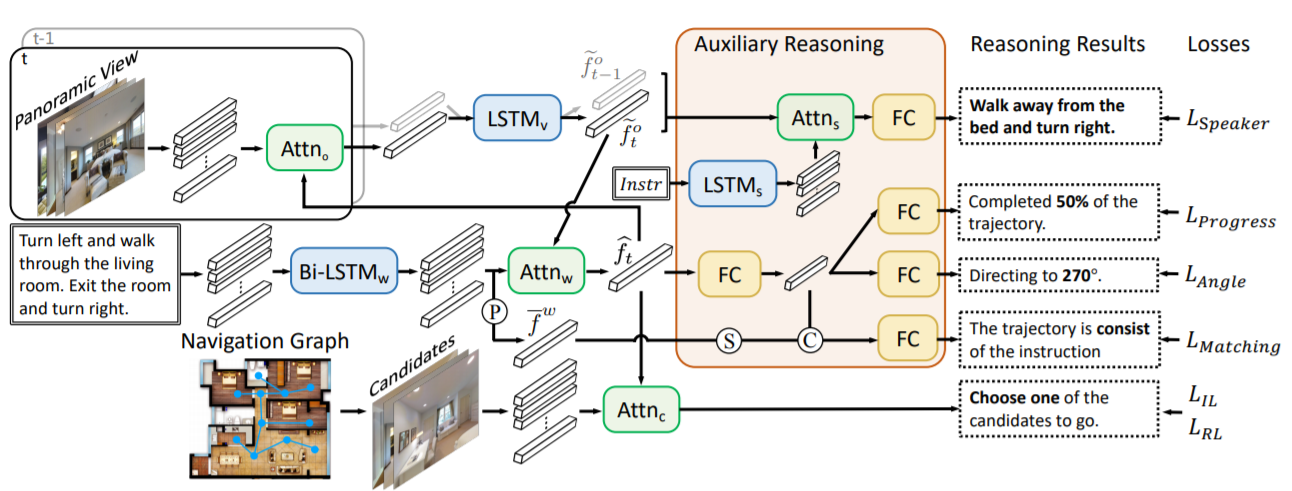

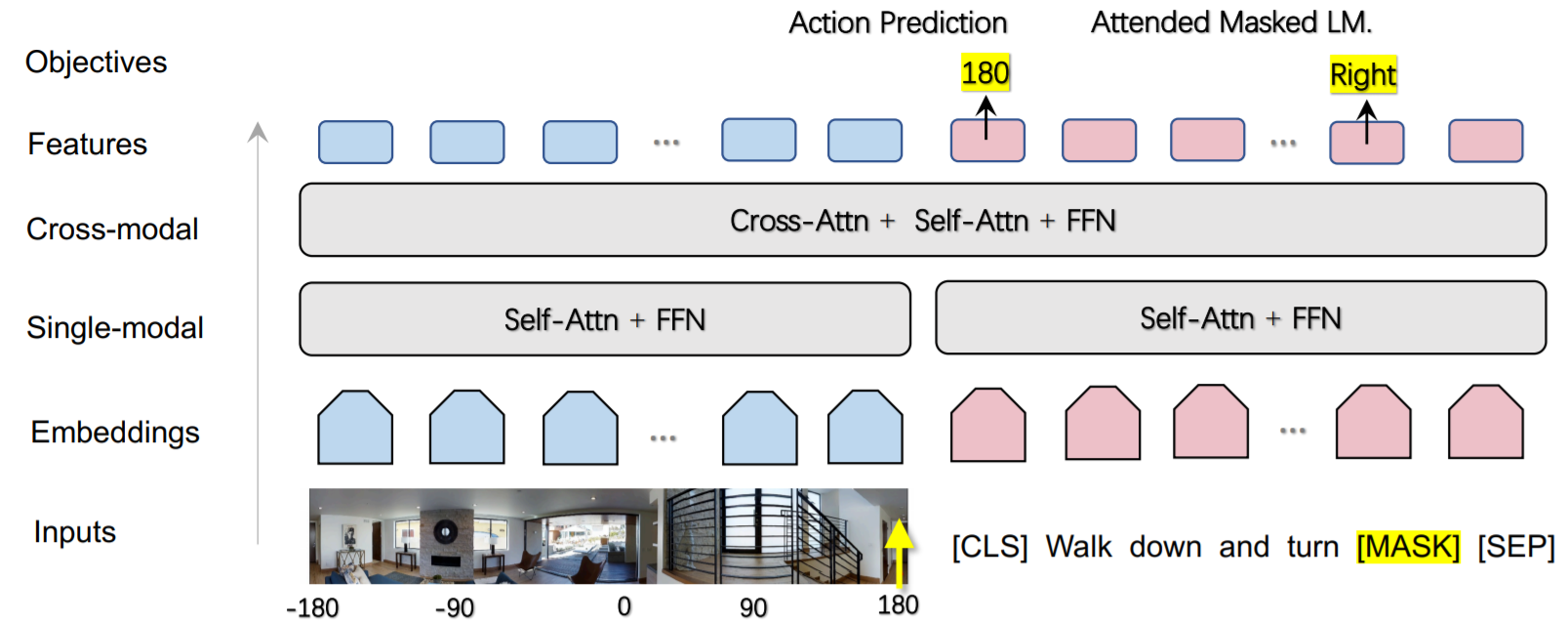

Vision-Language Navigation With Self-Supervised Auxiliary Reasoning Tasks

oral -

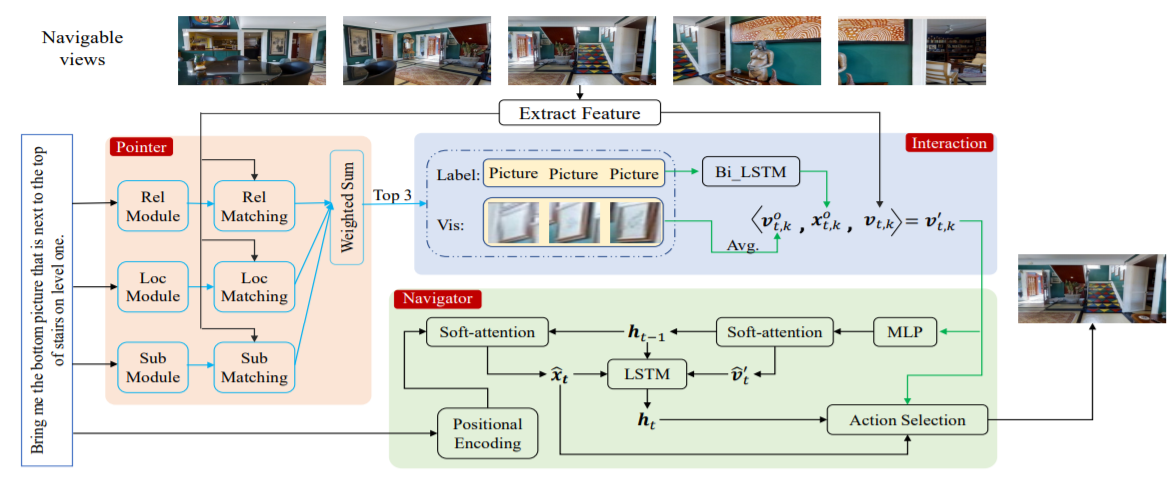

REVERIE: Remote Embodied Visual Referring Expression in Real Indoor Environments

oral, Peter Anderson, Qi Wu, William Yang Wang -

Towards Learning a Generic Agent for Vision-and-Language Navigation via Pre-training

-

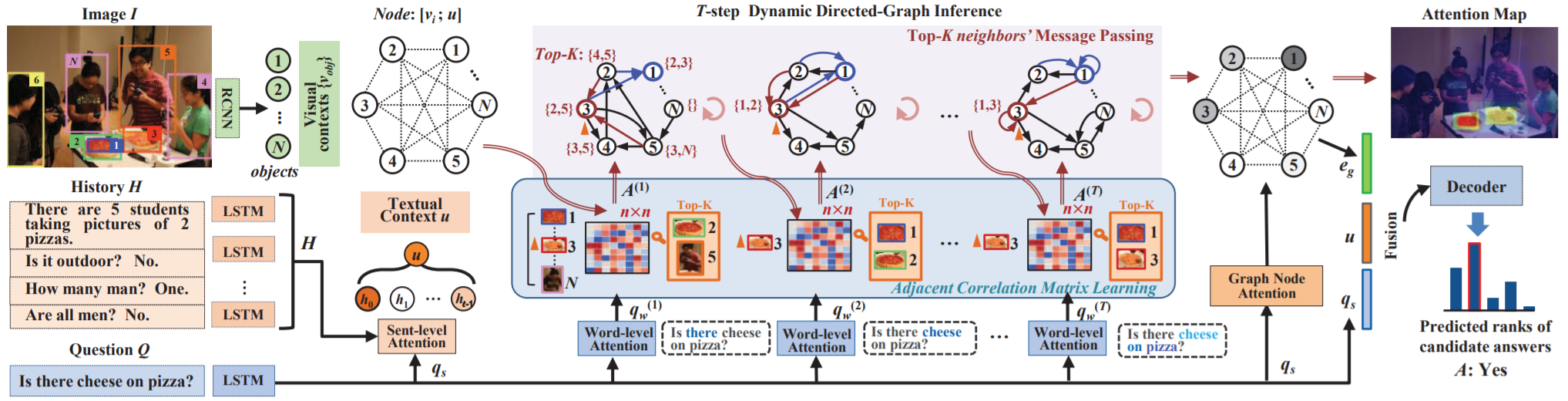

Iterative Context-Aware Graph Inference for Visual Dialog

oral, Zheng-jun Zha -

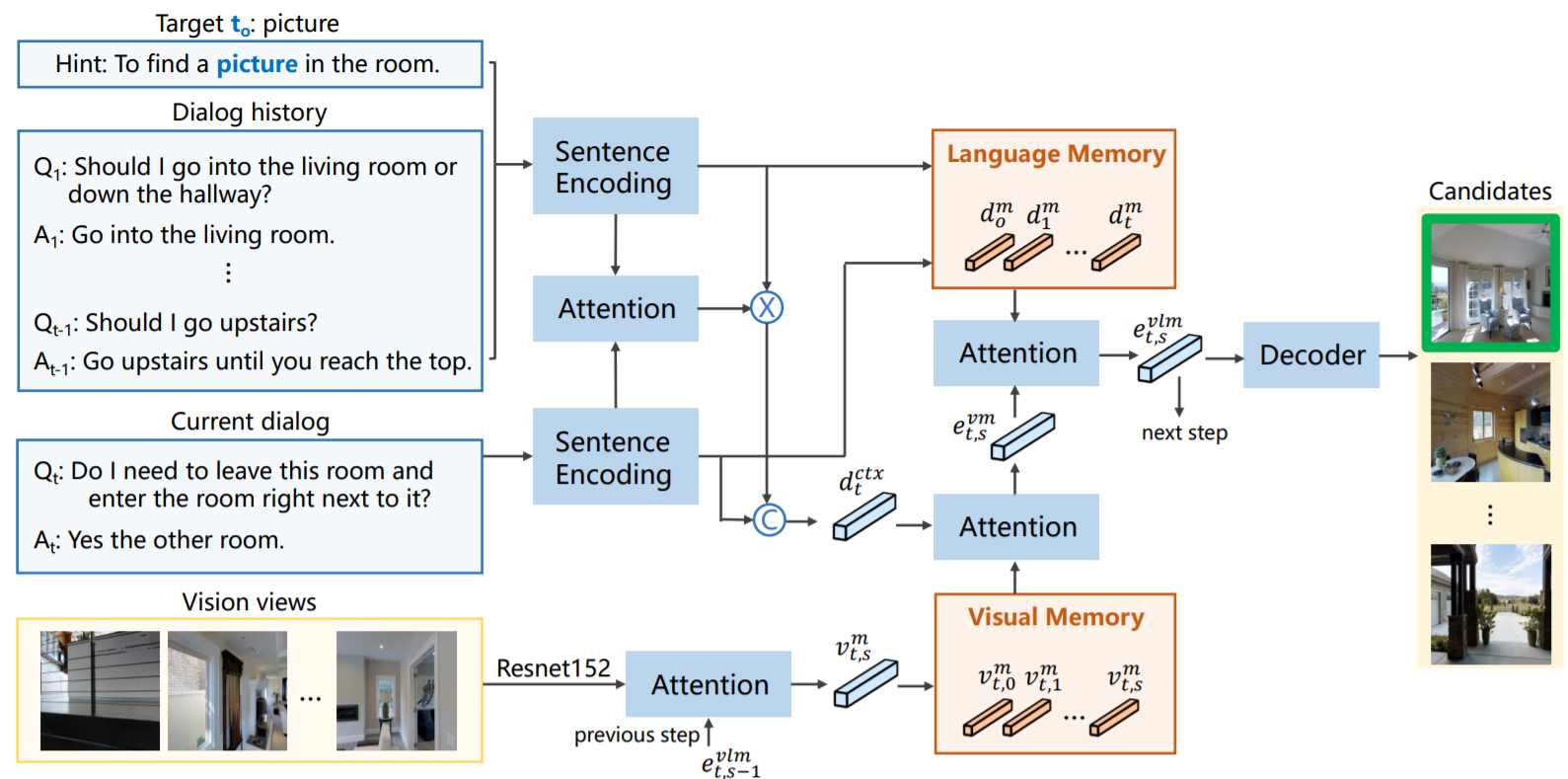

Vision-Dialog Navigation by Exploring Cross-modal Memory

-

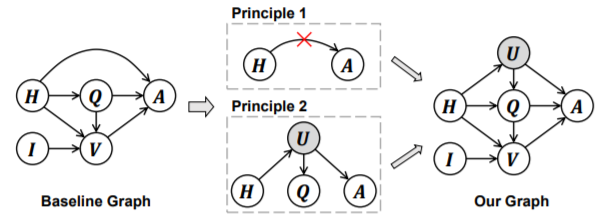

Two Causal Principles for Improving Visual Dialog

Hanwang Zhang

-

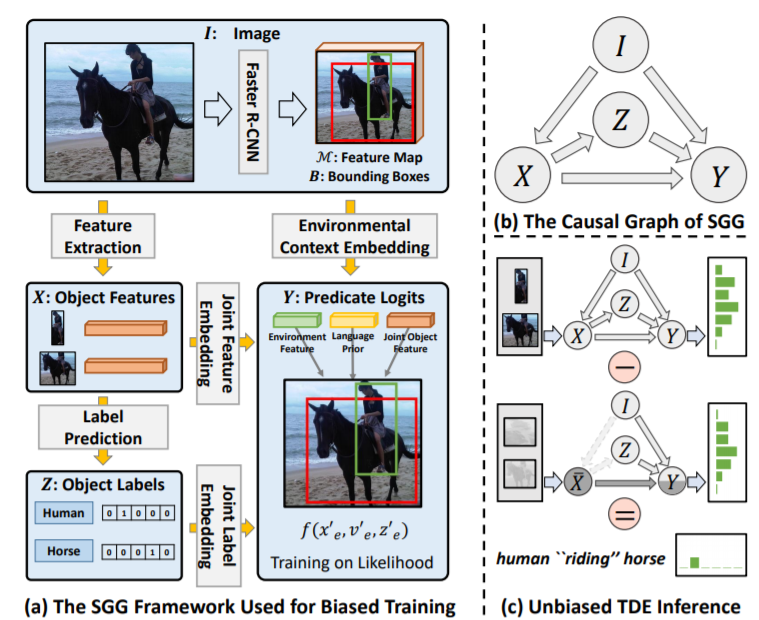

Unbiased Scene Graph Generation From Biased Training

oral, Hanwang Zhang -

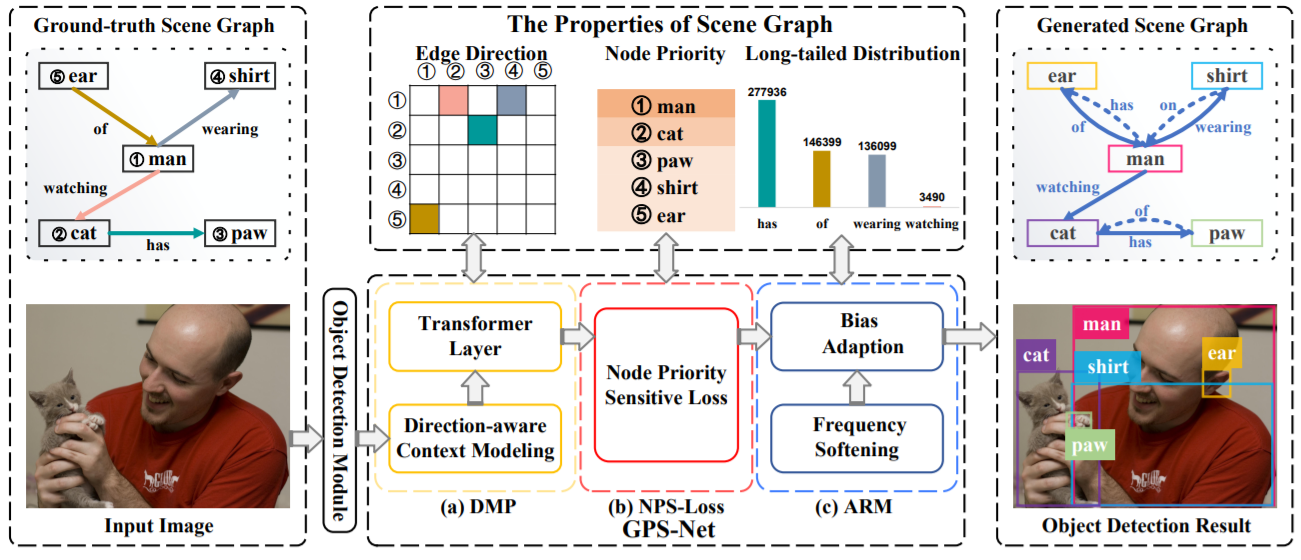

GPS-Net: Graph Property Sensing Network for Scene Graph Generation

oral, Dacheng Tao -

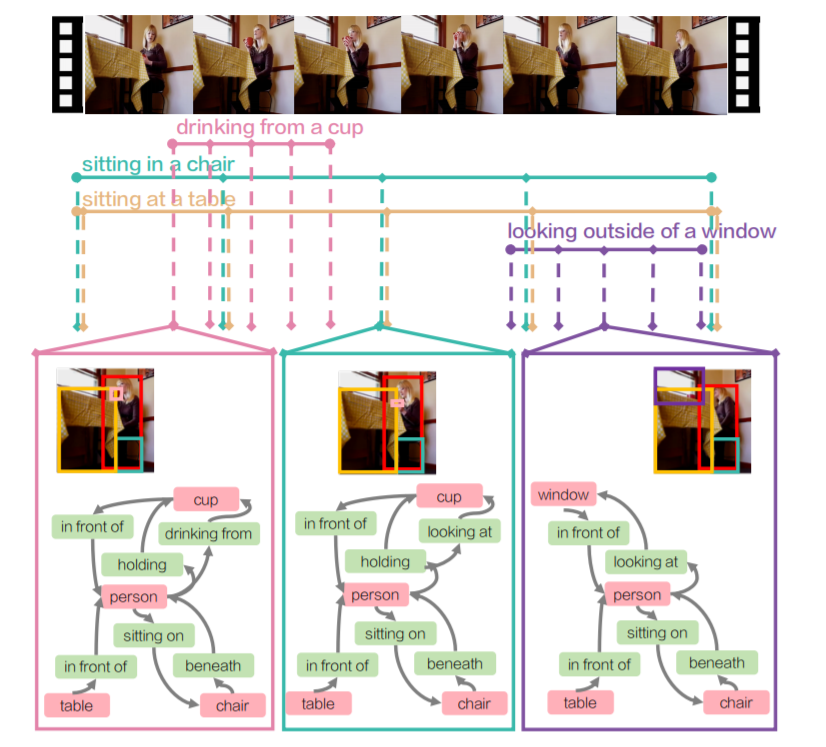

Action Genome: Actions as Composition of Spatio-temporal Scene Graphs

Fei-Fei Li

- SmallBigNet: Integrating Core and Contextual Views for Video Classification

Yu Qiao - 3DV: 3D Dynamic Voxel for Action Recognition in Depth Video

- Video Modeling with Correlation Networks

Facebook AI - X3D: Expanding Architectures for Efficient Video Recognition

Facebook AI - Regularization on Spatio-Temporally Smoothed Feature for Action Recognition

- Listen to Look: Action Recognition by Previewing Audio

- Speech2Action: Cross-modal Supervision for Action Recognition

VGG - Uncertainty-aware Score Distribution Learning for Action Quality Assessment

- FineGym: A Hierarchical Video Dataset for Fine-grained Action Understanding

Dahua Lin - Something-Else: Compositional Action Recognition with Spatial-Temporal Interaction Networks

- TEA: Temporal Excitation and Aggregation for Action Recognition

- Intra- and Inter-Action Understanding via Temporal Action Parsing

Dahua Lin - Temporal Pyramid Network for Action Recognition

- Multi-Modal Domain Adaptation for Fine-Grained Action Recognition

- Context Aware Graph Convolution for Skeleton-Based Action Recognition

Dacheng Tao - PREDICT & CLUSTER: Unsupervised Skeleton Based Action Recognition

- Semantics-Guided Neural Networks for Efficient Skeleton-Based Human Action Recognition

MSRA - Skeleton-Based Action Recognition with Shift Graph Convolutional Network

- Disentangling and Unifying Graph Convolutions for Skeleton-Based Action Recognition

Wanli Ouyang

- G-TAD: Sub-Graph Localization for Temporal Action Detection

- Learning Temporal Co-Attention Models for Unsupervised Video Action Localization

- Weakly-Supervised Action Localization by Generative Attention Modeling

- Learning to Discriminate Information for Online Action Detection

- Action Segmentation with Joint Self-Supervised Temporal Domain Adaptation

- SCT: Set Constrained Temporal Transformer for Set Supervised Action Segmentation

- Improving Action Segmentation via Graph Based Temporal Reasoning

- Set-Constrained Viterbi for Set-Supervised Action Segmentation

- Large Scale Video Representation Learning via Relational Graph Clustering

- Screencast Tutorial Video Understanding

- Evolving Losses for Unsupervised Video Representation Learning

- A Multigrid Method for Efficiently Training Video Models

Kaiming He

-

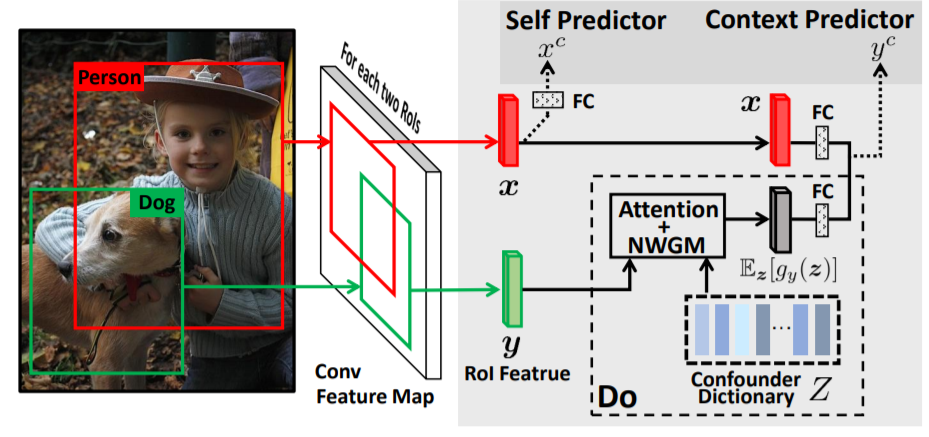

Visual Commonsense R-CNN

Hanwang Zhang -

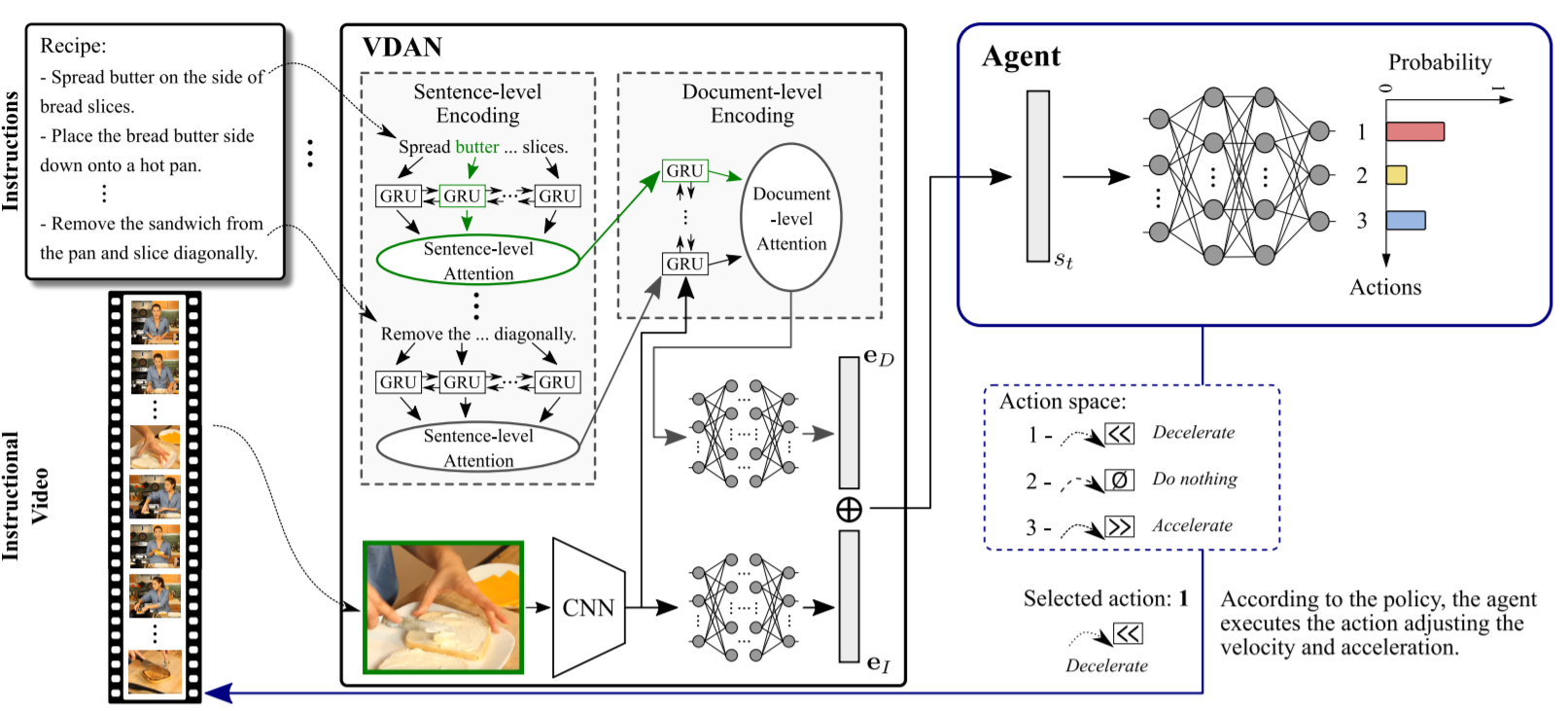

Straight to the Point: Fast-forwarding Videos via Reinforcement Learning Using Textual Data