The Alexa Voice Service (AVS) enables developers to integrate Alexa directly into their products, bringing the convenience of voice control to any connected device. AVS provides developers with access to a suite of resources to quickly and easily build Alexa-enabled products, including APIs, hardware development kits, software development kits, and documentation.

The AVS Device SDK provides C++-based (11 or later) libraries that leverage the AVS API to create device software for Alexa-enabled products. It is modular and abstracted, providing components for handling discrete functions such as speech capture, audio processing, and communications, with each component exposing the APIs that you can use and customize for your integration. It also includes a sample app, which demonstrates the interactions with AVS.

You can set up the SDK on the following platforms:

- Ubuntu Linux

- Raspberry Pi (Raspbian Stretch)

- macOS

- Windows 64-bit

- Generic Linux

- Android

You can also prototype with a third party development kit:

- XMOS VocalFusion 4-Mic Kit - Learn More Here

- Synaptics AudioSmart 2-Mic Dev Kit for Amazon AVS with NXP SoC - Learn More Here

- Intel Speech Enabling Developer Kit - Learn More Here

- Amlogic A113X1 Far-Field Dev Kit for Amazon AVS - Learn More Here

- Allwinner SoC-Only 3-Mic Far-Field Dev Kit for Amazon AVS - Learn More Here

- DSPG HDClear 3-Mic Development Kit for Amazon AVS - Learn More Here

Or if you prefer, you can start with our SDK API Documentation.

Watch this tutorial to learn about the how this SDK works and the set up process.

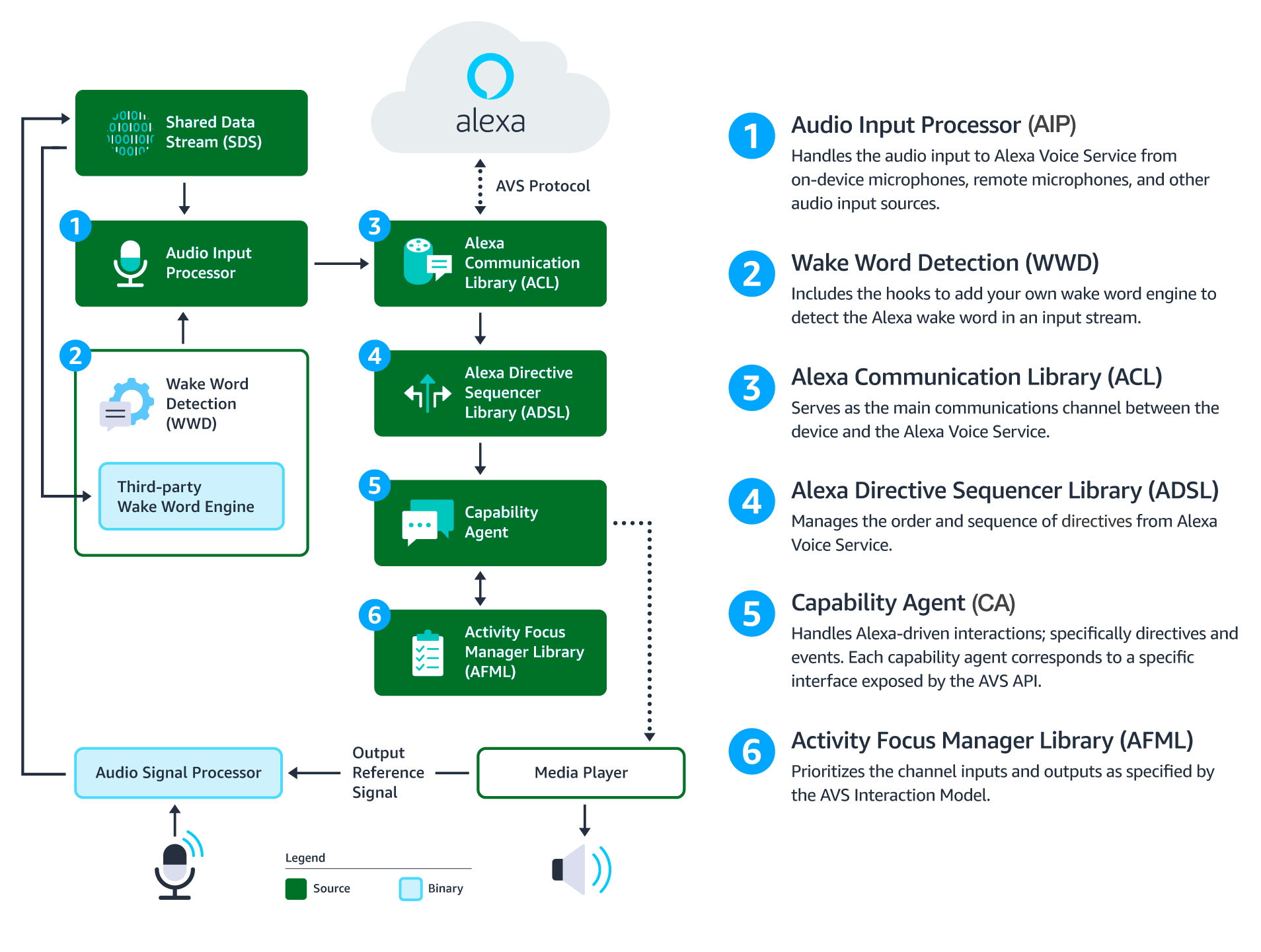

This diagram illustrates the data flows between components that comprise the AVS Device SDK for C++.

Audio Signal Processor (ASP) - Third-party software that applies signal processing algorithms to both input and output audio channels. The applied algorithms are designed to produce clean audio data and include, but are not limited to acoustic echo cancellation (AEC), beam forming (fixed or adaptive), voice activity detection (VAD), and dynamic range compression (DRC). If a multi-microphone array is present, the ASP constructs and outputs a single audio stream for the array.

Shared Data Stream (SDS) - A single producer, multi-consumer buffer that allows for the transport of any type of data between a single writer and one or more readers. SDS performs two key tasks:

- It passes audio data between the audio front end (or Audio Signal Processor), the wake word engine, and the Alexa Communications Library (ACL) before sending to AVS

- It passes data attachments sent by AVS to specific capability agents via the ACL

SDS is implemented atop a ring buffer on a product-specific memory segment (or user-specified), which allows it to be used for in-process or interprocess communication. Keep in mind, the writer and reader(s) may be in different threads or processes.

Wake Word Engine (WWE) - Software that spots wake words in an input stream. It is comprised of two binary interfaces. The first handles wake word spotting (or detection), and the second handles specific wake word models (in this case "Alexa"). Depending on your implementation, the WWE may run on the system on a chip (SOC) or dedicated chip, like a digital signal processor (DSP).

Audio Input Processor (AIP) - Handles audio input that is sent to AVS via the ACL. These include on-device microphones, remote microphones, an other audio input sources.

The AIP also includes the logic to switch between different audio input sources. Only one audio input source can be sent to AVS at a given time.

Alexa Communications Library (ACL) - Serves as the main communications channel between a client and AVS. The ACL performs two key functions:

- Establishes and maintains long-lived persistent connections with AVS. ACL adheres to the messaging specification detailed in Managing an HTTP/2 Connection with AVS.

- Provides message sending and receiving capabilities, which includes support JSON-formatted text, and binary audio content. For additional information, see Structuring an HTTP/2 Request to AVS.

Alexa Directive Sequencer Library (ADSL): Manages the order and sequence of directives from AVS, as detailed in the AVS Interaction Model. This component manages the lifecycle of each directive, and informs the Directive Handler (which may or may not be a Capability Agent) to handle the message.

Activity Focus Manager Library (AFML): Provides centralized management of audiovisual focus for the device. Focus is based on channels, as detailed in the AVS Interaction Model, which are used to govern the prioritization of audiovisual inputs and outputs.

Channels can either be in the foreground or background. At any given time, only one channel can be in the foreground and have focus. If multiple channels are active, you need to respect the following priority order: Dialog > Alerts > Content. When a channel that is in the foreground becomes inactive, the next active channel in the priority order moves into the foreground.

Focus management is not specific to Capability Agents or Directive Handlers, and can be used by non-Alexa related agents as well. This allows all agents using the AFML to have a consistent focus across a device.

Capability Agents: Handle Alexa-driven interactions; specifically directives and events. Each capability agent corresponds to a specific interface exposed by the AVS API. These interfaces include:

- Alerts - The interface for setting, stopping, and deleting timers and alarms.

- AudioPlayer - The interface for managing and controlling audio playback.

- Bluetooth - The interface for managing Bluetooth connections between peer devices and Alexa-enabled products.

- DoNotDisturb - The interface for enabling the do not disturb feature.

- EqualizerController - The interface for adjusting equalizer settings, such as decibel (dB) levels and modes.

- InteractionModel - This interface allows a client to support complex interactions initiated by Alexa, such as Alexa Routines.

- Notifications - The interface for displaying notifications indicators.

- PlaybackController - The interface for navigating a playback queue via GUI or buttons.

- Speaker - The interface for volume control, including mute and unmute.

- SpeechRecognizer - The interface for speech capture.

- SpeechSynthesizer - The interface for Alexa speech output.

- System - The interface for communicating product status/state to AVS.

- TemplateRuntime - The interface for rendering visual metadata.

In addition to adopting the Security Best Practices for Alexa, when building the SDK:

- Protect configuration parameters, such as those found in the AlexaClientSDKCOnfig.json file, from tampering and inspection.

- Protect executable files and processes from tampering and inspection.

- Protect storage of the SDK's persistent states from tampering and inspection.

- Your C++ implementation of AVS Device SDK interfaces must not retain locks, crash, hang, or throw exceptions.

- Use exploit mitigation flags and memory randomization techniques when you compile your source code, in order to prevent vulnerabilities from exploiting buffer overflows and memory corruptions.

- Review the AVS Terms & Agreements.

- The earcons associated with the sample project are for prototyping purposes only. For implementation and design guidance for commercial products, please see Designing for AVS and AVS UX Guidelines.

- Please use the contact information below to-

- Contact Sensory for information on TrulyHandsFree licensing.

- Contact KITT.AI for information on SnowBoy licensing.

- IMPORTANT: The Sensory wake word engine referenced in this document is time-limited: code linked against it will stop working when the library expires. The library included in this repository will, at all times, have an expiration date that is at least 120 days in the future. See Sensory's GitHub page for more information.

Note: Feature enhancements, updates, and resolved issues from previous releases are available to view in CHANGELOG.md.

v1.11.0 released 12/19/2018:

Enhancements

- Added support for the new Alexa DoNotDisturb interface, which enables users to toggle the do not disturb (DND) function on their Alexa built-in products.

- The SDK now supports Opus encoding, which is optional. To enable Opus, you must set the CMake flag to

-DOPUS=ON, and include the libopus library dependency in your build. - The MediaPlayer reference implementation has been expanded to support the SAMPLE-AES and AES-128 encryption methods for HLS streaming.

- AES-128 encryption is dependent on libcrypto, which is part of the required openSSL library, and is enabled by default.

- To enable SAMPLE-AES encryption, you must set the

-DSAMPLE_AES=ONin your CMake command, and include the FFMPEG library dependency in your build.

- A new configuration for deviceSettings has been added.This configuration allows you to specify the location of the device settings database.

- Added locale support for es-MX.

Bug Fixes

- Fixed an issue where music wouldn't resume playback in the Android app.

- Now all equalizer capabilities are fully disabled when equalizer is turned off in configuration file. Previously, devices were unconditionally required to provide support for equalizer in order to run the SDK.

- Issue 1106 - Fixed an issue in which the

CBLAuthDelegatewasn't using the correct timeout during request refresh. - Issue 1128 - Fixed an issue in which the

AudioPlayerinstance persisted at shutdown, due to a shared dependency with theProgressTimer. - Fixed in issue that occurred when a connection to streaming content was interrupted, the SDK did not attempt to resume the connection, and appeared to assume that the content had been fully downloaded. This triggered the next track to be played, assuming it was a playlist.

- Issue 1040 - Fixed an issue where alarms would continue to play after user barge-in.

Known Issues

PlaylistParserandIterativePlaylistParsergenerate two HTTP requests (one to fetch the content type, and one to fetch the audio data) for each audio stream played.- Music playback history isn't being displayed in the Alexa app for certain account and device types.

- On GCC 8+, issues related to

-Wclass-memaccesswill trigger warnings. However, this won't cause the build to fail, and these warnings can be ignored. - In order to use Bluetooth source and sink PulseAudio, you must manually load and unload PulseAudio modules after the SDK starts.

- The

ACLmay encounter issues if audio attachments are received but not consumed. SpeechSynthesizerStatecurrently usesGAINING_FOCUSandLOSING_FOCUSas a workaround for handling intermediate state. These states may be removed in a future release.- The Alexa app doesn't always indicate when a device is successfully connected via Bluetooth.

- Connecting a product to streaming media via Bluetooth will sometimes stop media playback within the source application. Resuming playback through the source application or toggling next/previous will correct playback.

- When a source device is streaming silence via Bluetooth, the Alexa app indicates that audio content is streaming.

- The Bluetooth agent assumes that the Bluetooth adapter is always connected to a power source. Disconnecting from a power source during operation is not yet supported.

- On some products, interrupted Bluetooth playback may not resume if other content is locally streamed.

make integrationis currently not available for Android. In order to run integration tests on Android, you'll need to manually upload the test binary file along with any input file. At that point, the adb can be used to run the integration tests.- On Raspberry Pi running Android Things with HDMI output audio, beginning of speech is truncated when Alexa responds to user TTS.

- When the sample app is restarted and network connection is lost, alerts don't play.

- When network connection is lost, lost connection status is not returned via local Text-to Speech (TTS).