- What is this?

- What's new?

- Getting started

- Examples

- Features

- Keyframed parameter values with scriptable interpolation

- Interpolation expressions

- Working with time & beats (audio synchronisation)

- Time series for arbitrary data sequences

- Camera movement preview

- Deforum integration features

- Prompt manipulation

- Using multiple prompts

- Subseed control for seed travelling

- Delta values (aka absolute vs relative motion parameters)

- Working with large number of frames (performance tips)

- Development & running locally

- Credits and support

You can jump straight into Parseq here: https://sd-parseq.web.app/ .

To provide some context:

- Stable Diffusion is an AI image generation tool.

- Deforum is a notebook-based UI for Stable Diffusion that is geared towards creating videos.

- AUTOMATIC1111/stable-diffusion-webui is a Web UI for Stable Diffusion (but not for Deforum).

- The Deforum extension for Automatic1111 is an extension to the Automatic1111 Web UI that integrates the functionality of the Deforum notebook.

Parseq (this tool) is a parameter sequencer for the Deforum extension for Automatic1111. You can use it to generate animations with tight control and flexible interpolation over many Stable Diffusion parameters (such as seed, scale, prompt weights, noise, image strength...), as well as input processing parameters (such as zoom, pan, 3D rotation...).

For now, Parseq is almost entirely front-end and stores all state in browser local storage by default. All processing, including audio processing, is done in the browser. Signed-in users can optionally upload their work from the UI for easier sharing.

Using Parseq, you can:

See the Parseq change log on the project wiki.

- Start by ensuring you have a working installation of Automatic1111's Stable Diffusion UI

- Next, install the Deforum extension.

- You should now see a `Parseq` section right at the bottom of the `Init` tab under the `Deforum` extension (click to expand it).

The best way to get your head around Parseq's capabilities and core concepts is to watch the following tutorials:

| Part 1 | Part 2 | Part 3 |
|---|---|---|
| | | |

In summary, there are 2 steps to perform:

Step 1: Create your parameter manifest

- Go to https://sd-parseq.web.app/ (or run the UI yourself from this repo with `npm start`).

- Edit the table at the top to specify your keyframes and parameter values at those keyframes. See below for more information about what you can do here.

- Copy the contents of the "Output" textbox at the bottom. If you are signed in (via the button at the top right), you can choose to upload the output instead, and then copy the resulting URL. All subsequent changes will be pushed to the same URL, so you won't need to copy & paste again.

Step 2: Generate the video

- Head to the SD web UI, go to the Deforum tab, and then the Init tab.

- Paste the JSON or URL you copied in step 1 into the Parseq section at the bottom of the page.

- Fiddle with any other Deforum / Stable Diffusion settings you want to tweak. Remember in particular to check the animation mode, the FPS and the total number of frames to make sure they match Parseq.

- Click generate.

Here are some examples showing what Parseq can do. Most of these were generated at 20fps then smoothed to 60fps with ffmpeg minterpolate or FILM. See also the Parseq Example Library for a range of simple examples and all required settings to recreate them.

| Example | Description |
|---|---|
| Parseq.example_.prompt.influenced.by.audio.pitch.and.amplitude.mp4 | Audio-controlled prompt: the amplitude affects the facial expression, and the pitch affects the pastel/vector effect. See the video on Youtube for higher quality and all settings. |
| Video.made.in.Parseq.Tutorial.3.rendered.with.Euler.80.steps.1024x1024.mp4 | Audio synchronisation example from Parseq Tutorial 3. Watch on Youtube for higher quality. |
| 5vdvjo.mp4 | Another example of advanced audio synchronisation. A detailed description of how this was created is available here. The music is an excerpt of The Prodigy - Smack My Bitch Up (Noisia Remix). |
| 20230330142257_FILM_x4.1.mp4 | Combining 3D y-axis rotation with x-axis pan to rotate around a subject. See the Parseq Example Library for more information. |
| 20230308140805.mp4_Upscaled_x4.mp4 | Seed travelling example featured in Parseq Tutorial 2. |
| 20230306232431_FILM_x3.mp4_Upscaled_x4.mp4 | Prompt manipulation example featured in Parseq Tutorial 2. |
| 20221029004637.mp4-60fps-smooth.mp4 | Oscillating between a few famous faces with some 3d movement and occasional denoising spikes to reset the context. |

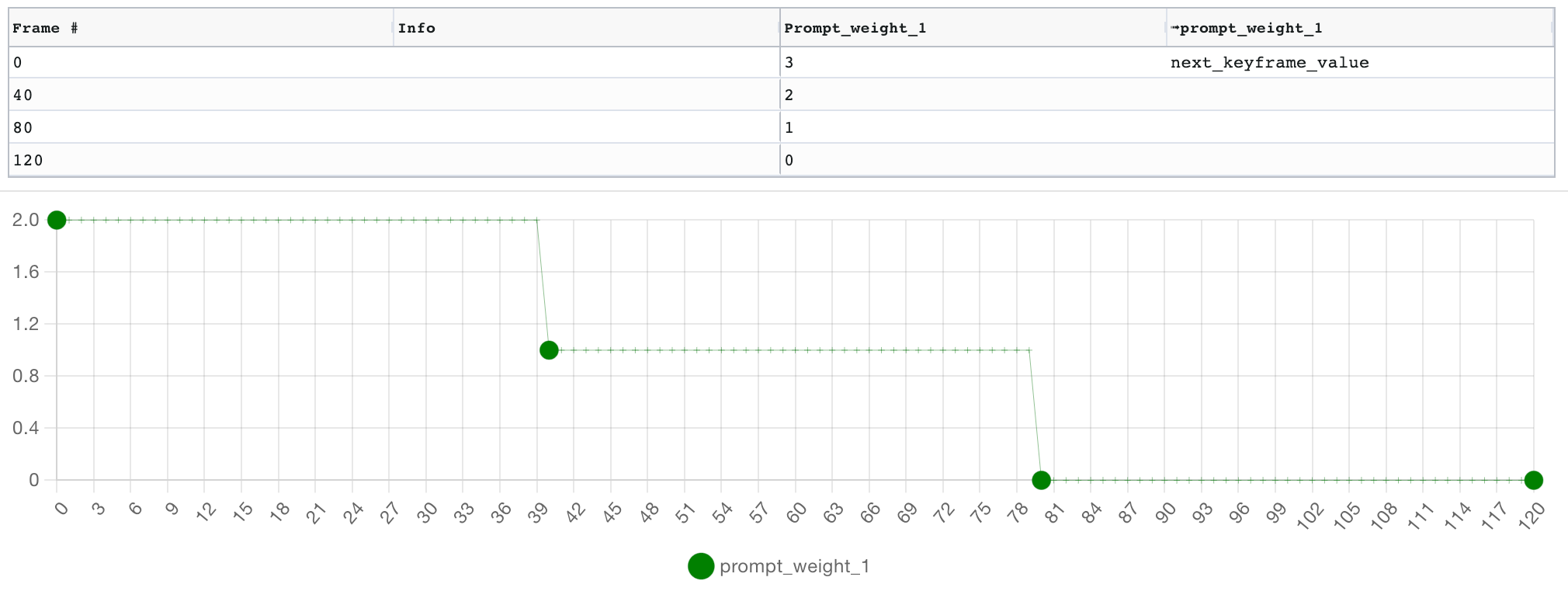

Parseq's main feature is advanced control over parameter values, using keyframing with interesting interpolation mechanisms.

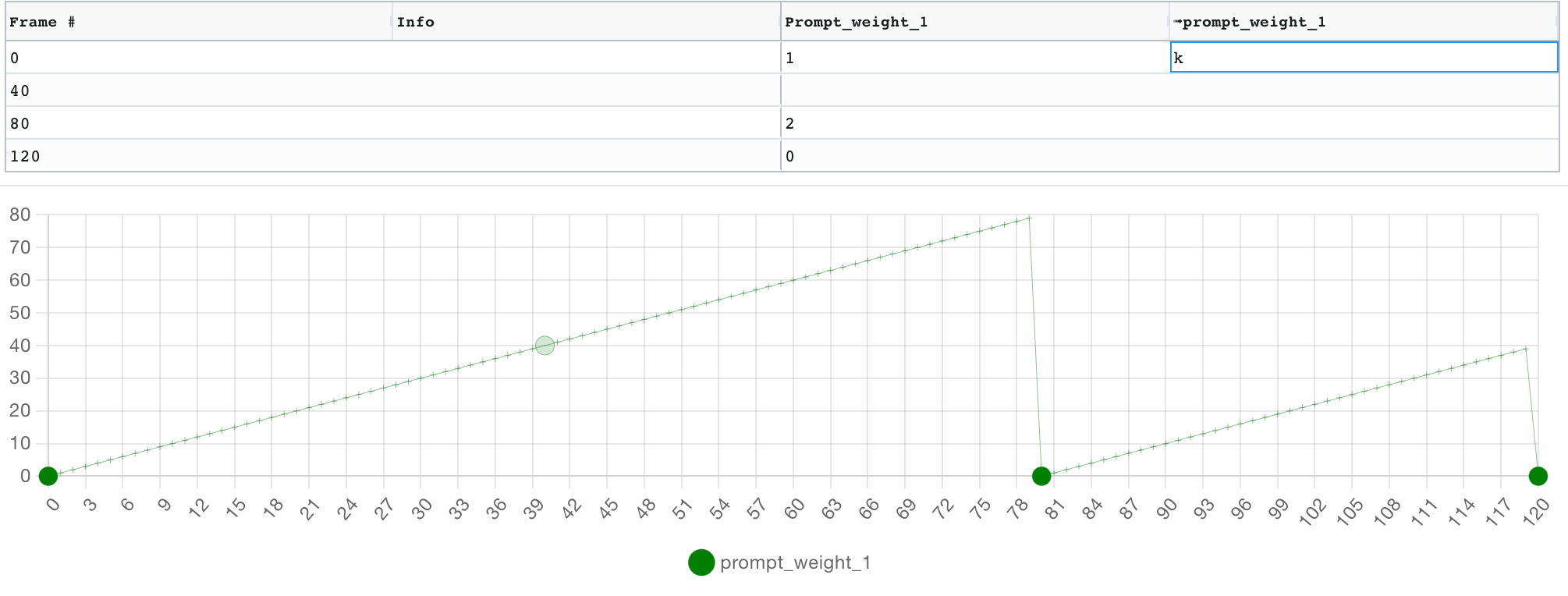

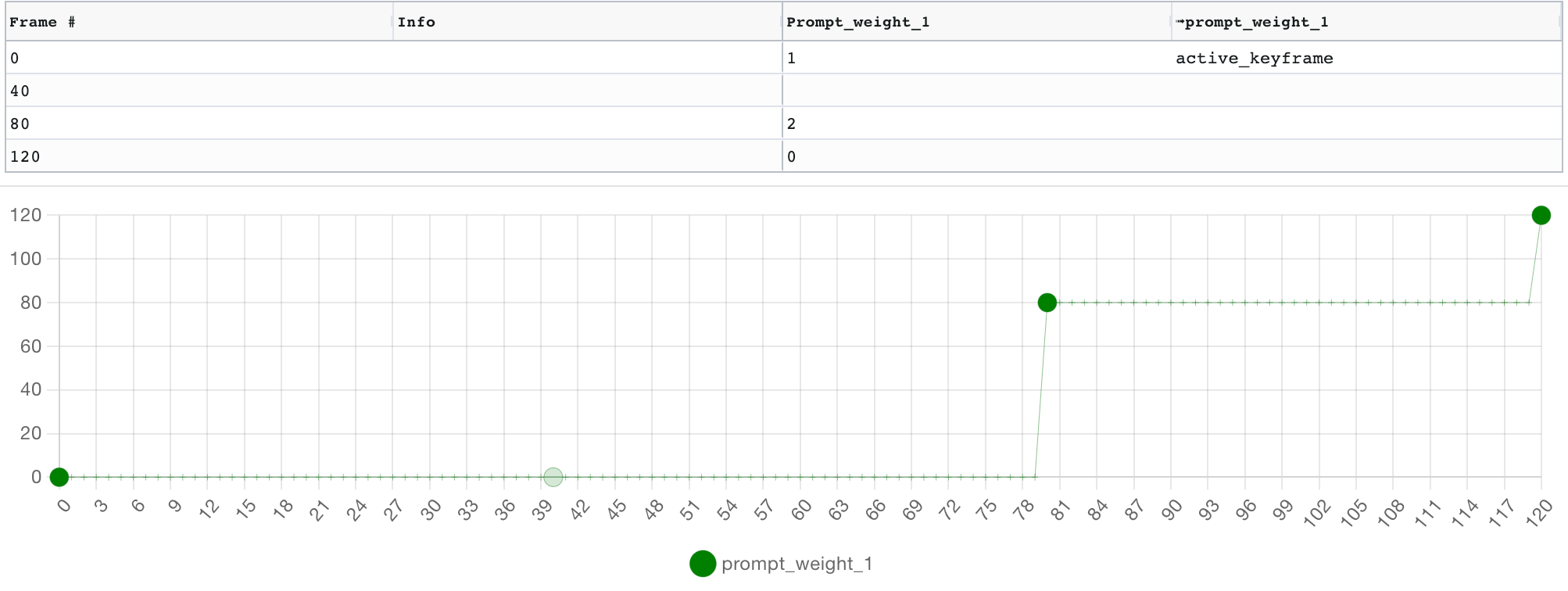

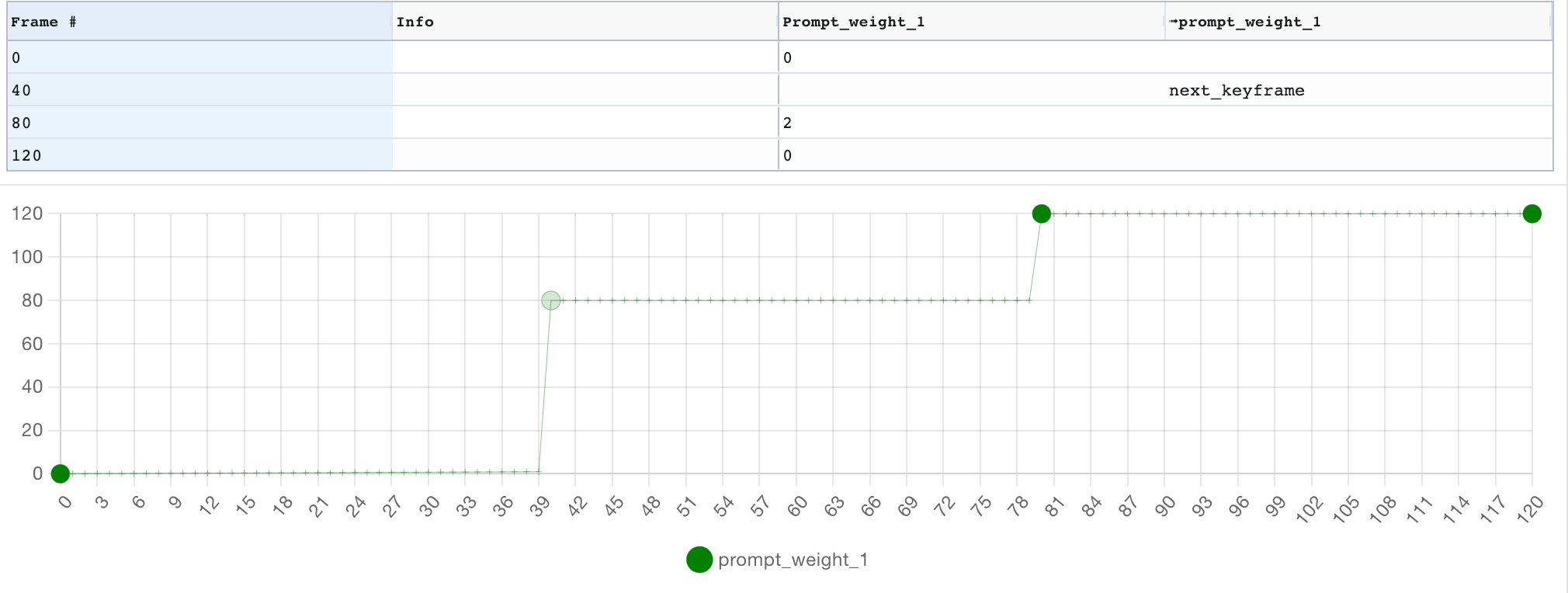

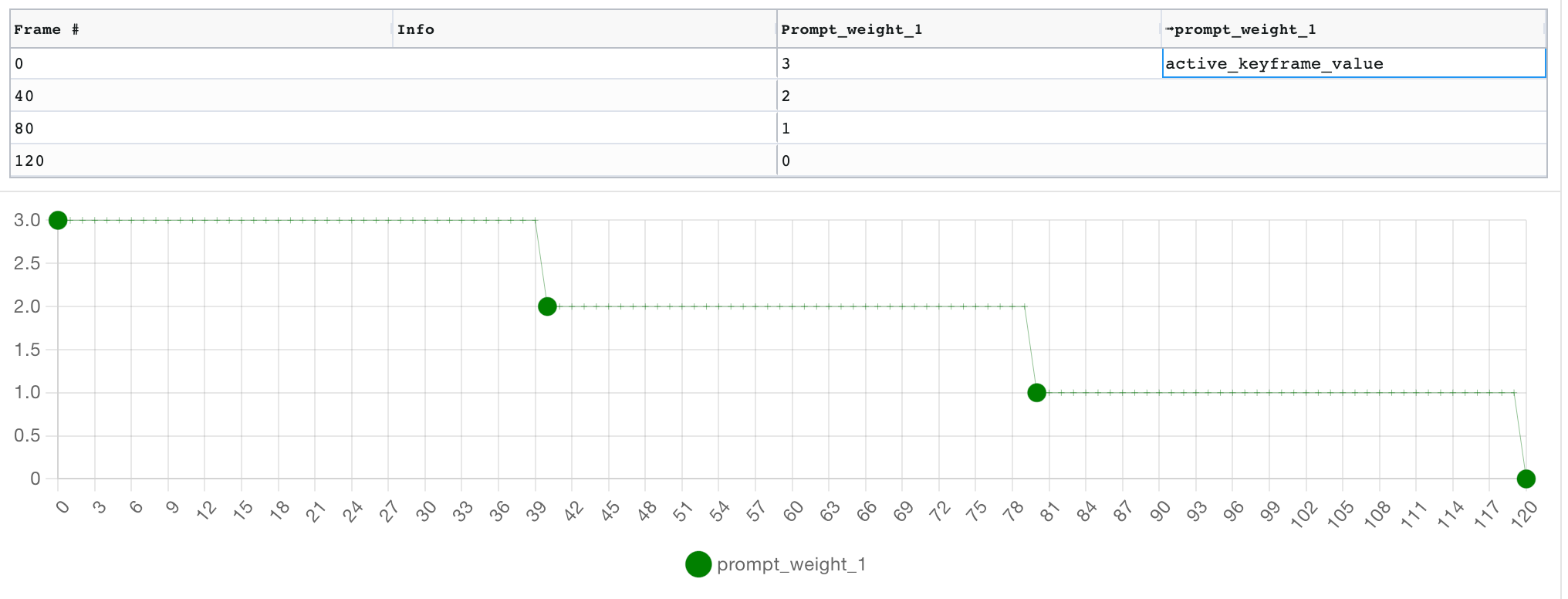

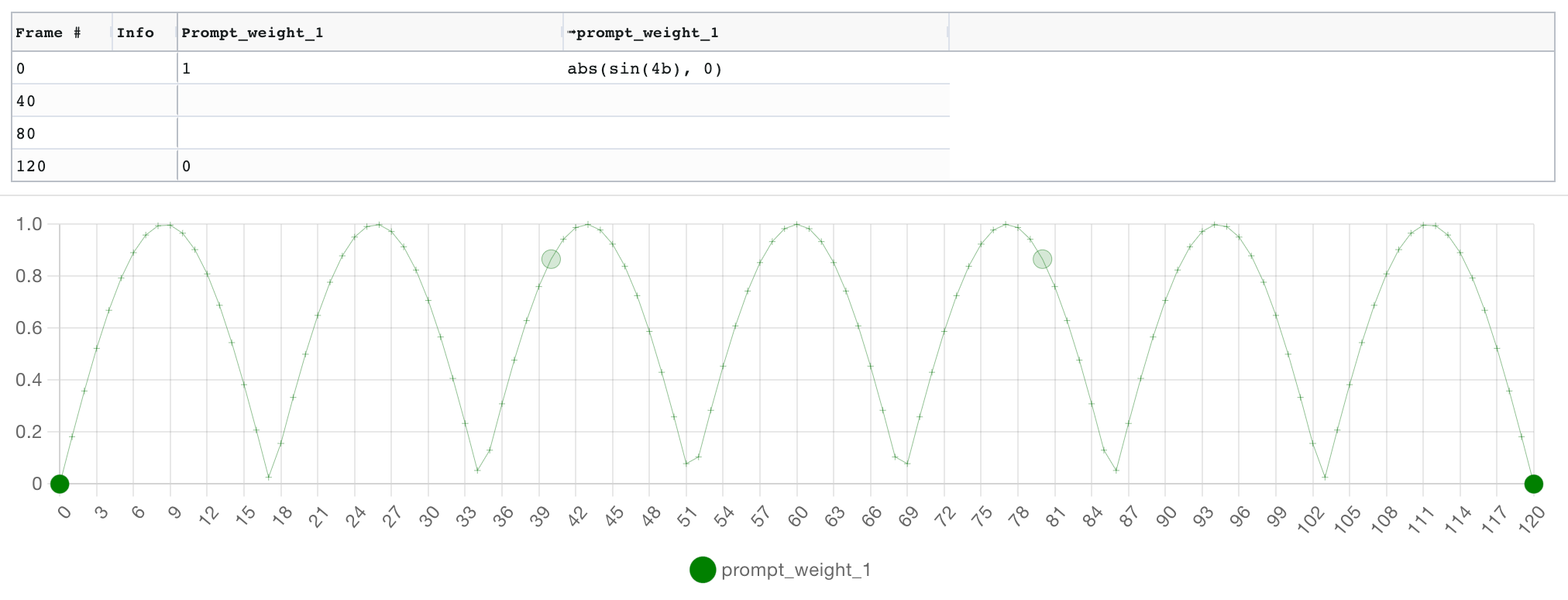

The keyframe grid is the central UI concept in Parseq. Each row in the grid represents a keyframe. Each parameter has a pair of columns: the first takes an explicit value for that field, and the second takes an interpolation formula, which defines how the value will "travel" to the next keyframe's value. If no interpolation formula is specified on a given keyframe, the formula from the previous keyframe continues to be used. The default interpolation algorithm is linear interpolation.
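To make the default behaviour concrete, here is a minimal TypeScript sketch of linear interpolation between keyframes. The `ParamKeyframe` shape and the `linearValueAt` helper are hypothetical names chosen for illustration, not Parseq's internal API, and the sketch assumes the keyframes are already sorted by frame.

```typescript
// Hypothetical shape for one keyframe of a single parameter.
interface ParamKeyframe {
  frame: number;
  value: number;
}

// Linearly interpolate the value at `frame`, given keyframes sorted by frame.
function linearValueAt(keyframes: ParamKeyframe[], frame: number): number {
  if (keyframes.length === 0) {
    throw new Error("At least one keyframe is required");
  }
  const first = keyframes[0];
  const last = keyframes[keyframes.length - 1];
  // Outside the keyframed range, hold the nearest keyframe value.
  if (frame <= first.frame) return first.value;
  if (frame >= last.frame) return last.value;
  for (let i = 0; i < keyframes.length - 1; i++) {
    const prev = keyframes[i];
    const next = keyframes[i + 1];
    if (frame >= prev.frame && frame < next.frame) {
      // Fraction of the way from the previous keyframe to the next one.
      const progress = (frame - prev.frame) / (next.frame - prev.frame);
      return prev.value + progress * (next.value - prev.value);
    }
  }
  return last.value;
}

// A value travelling from 1 on frame 0 to 0 on frame 120.
const fadeKeyframes: ParamKeyframe[] = [
  { frame: 0, value: 1 },
  { frame: 120, value: 0 },
];
const halfway = linearValueAt(fadeKeyframes, 60);
```

Any other interpolation formula can be thought of as replacing the `progress`-based line with a different function of the frame number and the surrounding keyframe values.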

Below the grid, a graph allows you to see the result of the interpolation (and edit keyframe values by dragging nodes):

The interpolation formula can be an arbitrarily complex mathematical expression, and can use a range of built-in functions and values, including oscillators and helpers to synchronise them to timestamps or beats:

Interpolation expressions define how the value for a given field should be computed at each frame. Expressions can return numbers or strings. String literals must be enclosed in double quotes ("").

Here are the operators, values, constants and functions you can use in Parseq expressions.

💡 You can visualise and play with all interpolation logic using Parseq's live documentation. 💡

Your expression runs in a context that provides access to a number of useful variables:

| Constant | description | example |
|---|---|---|
| `PI` | Constant pi. | |
| `E` | Constant e. | |
| `SQRT2` | Square root of 2. | |
| `SQRT1_2` | Square root of 1/2. | |
| `LN2` | Natural logarithm of 2. | |
| `LN10` | Natural logarithm of 10. | |
| `LOG2E` | Base 2 logarithm of e. | |
| `LOG10E` | Base 10 logarithm of e. | |

Units can be appended to numerical constants to convert from frame to beats/seconds using the document's FPS and BPM. This is particularly useful when specifying the period of an oscillator (or anything else representing a time period).

| unit | description | example |
|---|---|---|
| `f` | (default) frames | `sin(p=10f)` (equivalent to `sin(p=10)`) |
| `s` | seconds | `sin(p=2s)` |
| `b` | beats | `sin(p=4b)` |

Use these functions to convert between frames, beats and seconds:

| function | description | example |
|---|---|---|
| `f2b(x)` | Frames to beats | |
| `b2f(x)` | Beats to frames | |
| `f2s(x)` | Frames to seconds | |
| `s2f(x)` | Seconds to frames | |
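The arithmetic behind these conversions can be sketched in TypeScript as follows. The helper names and the `TempoSettings` type are hypothetical (they are not the Parseq functions themselves); the sketch only assumes that one second contains `fps` frames and one minute contains `bpm` beats.

```typescript
// Hypothetical container for the document-level tempo settings.
interface TempoSettings {
  fps: number; // frames per second
  bpm: number; // beats per minute
}

const framesToSeconds = (frames: number, tempo: TempoSettings): number =>
  frames / tempo.fps;

const secondsToFrames = (seconds: number, tempo: TempoSettings): number =>
  seconds * tempo.fps;

const framesToBeats = (frames: number, tempo: TempoSettings): number =>
  framesToSeconds(frames, tempo) * (tempo.bpm / 60);

const beatsToFrames = (beats: number, tempo: TempoSettings): number =>
  secondsToFrames((beats * 60) / tempo.bpm, tempo);

// With 10 FPS and 120 BPM, frame 40 falls 4 seconds or 8 beats into the video.
const demoTempo: TempoSettings = { fps: 10, bpm: 120 };
const secondsAtFrame40 = framesToSeconds(40, demoTempo);
const beatsAtFrame40 = framesToBeats(40, demoTempo);
```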

All functions can be called either with unnamed args (e.g. sin(10,2)) or named args (e.g. sin(period=10, amplitude=2)). Most arguments have long and short names (e.g. sin(p=10, a=2)).

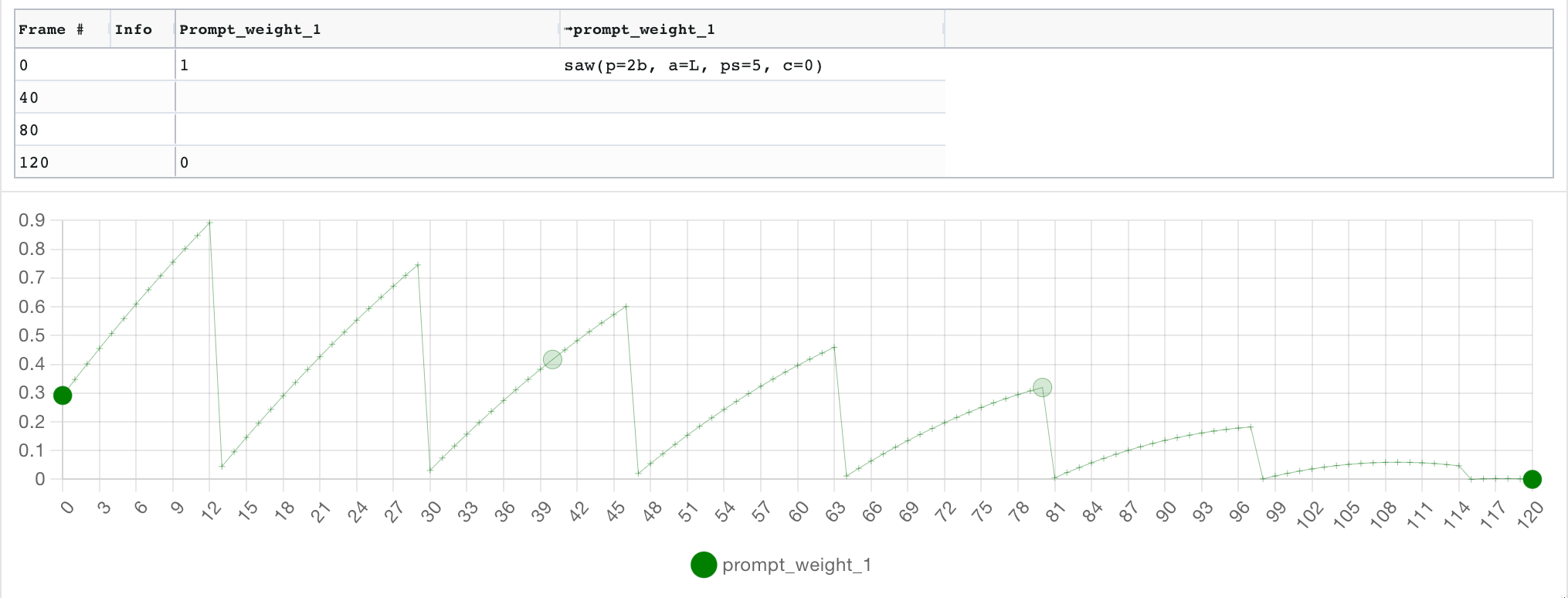

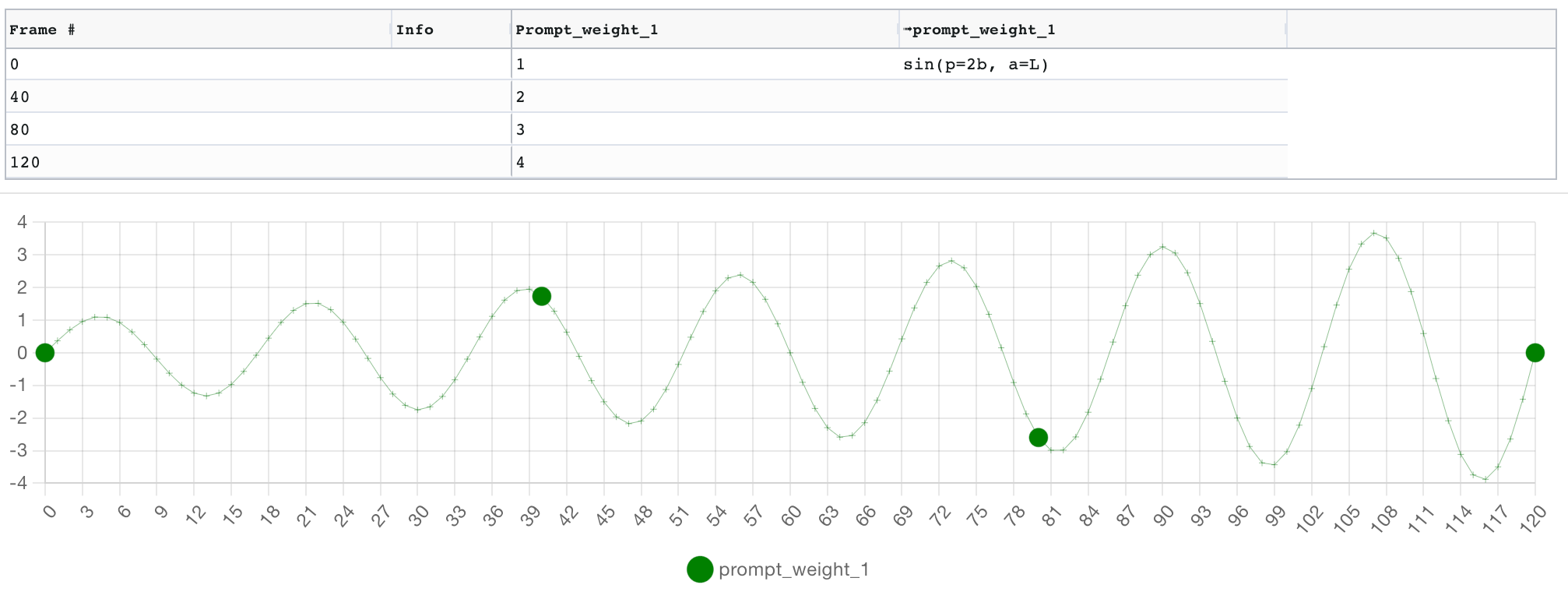

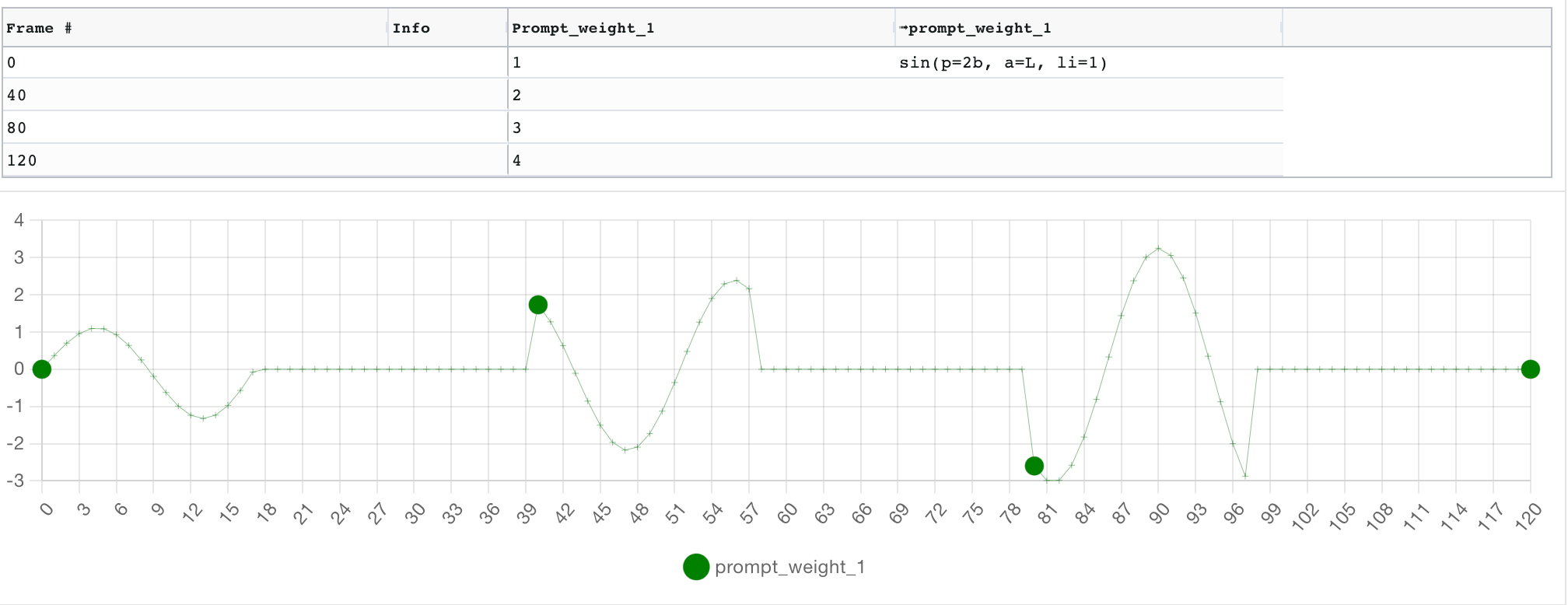

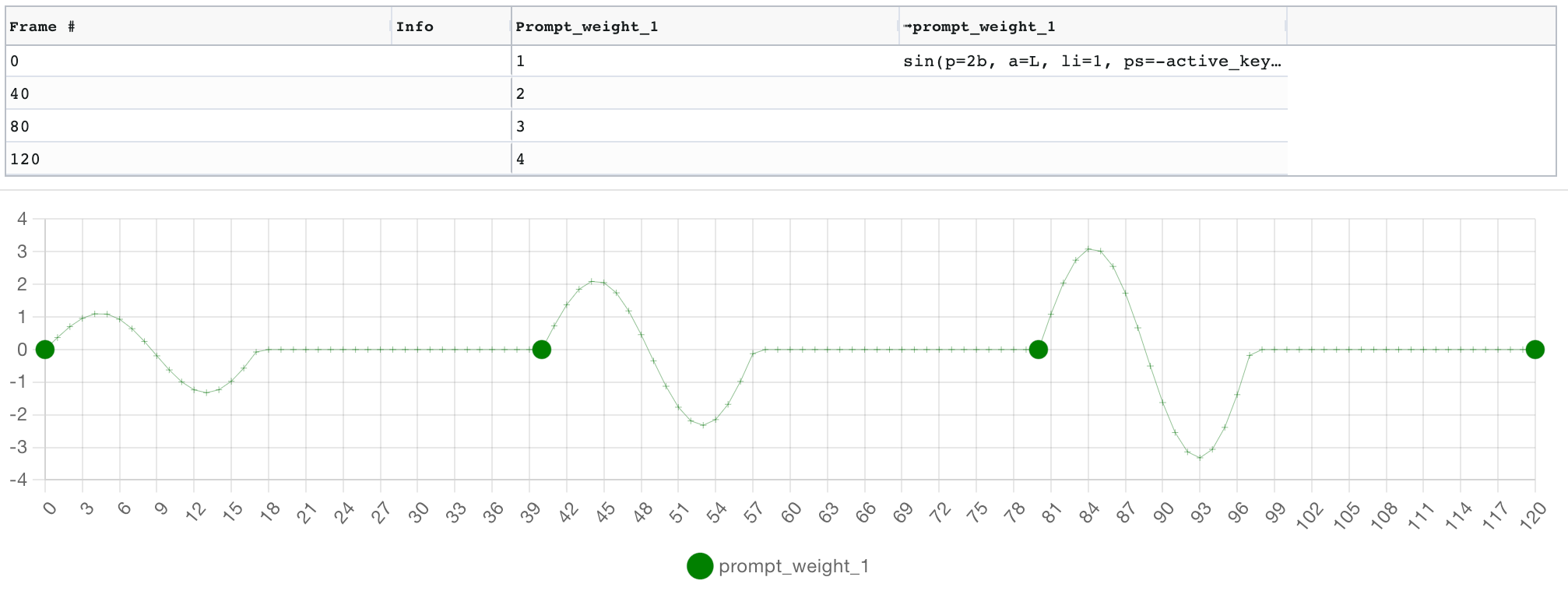

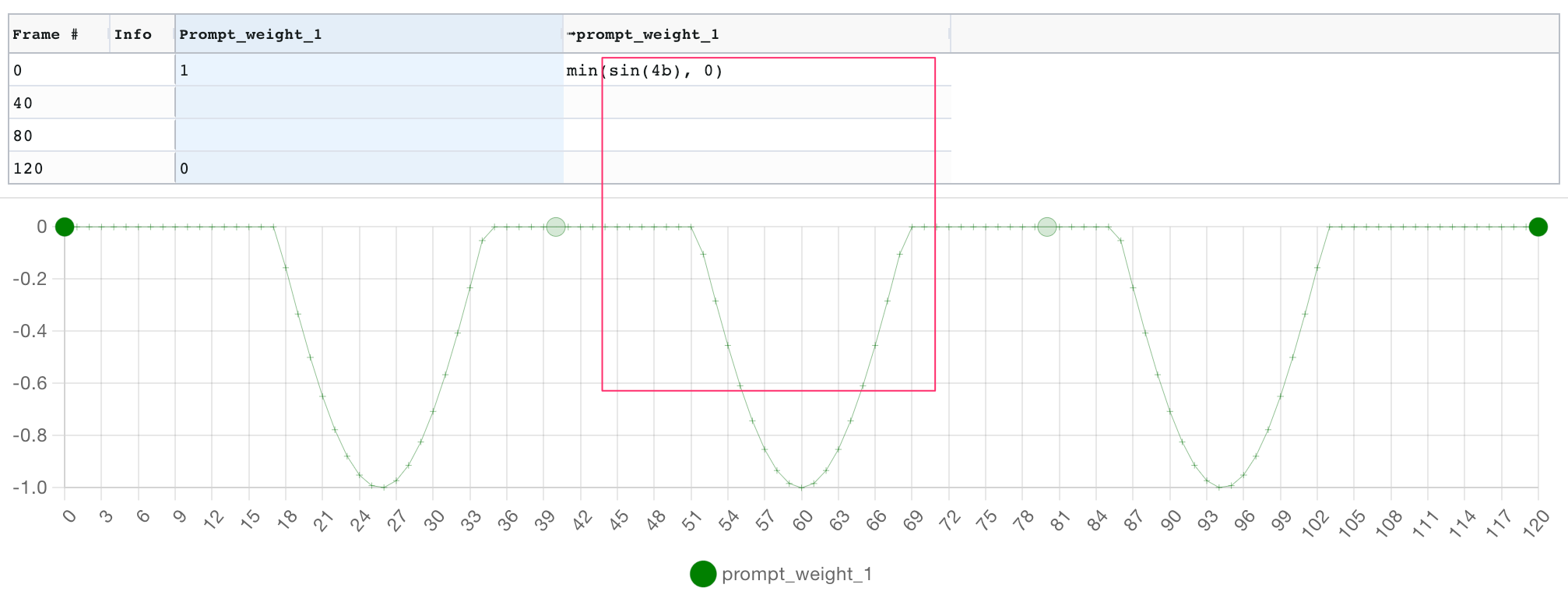

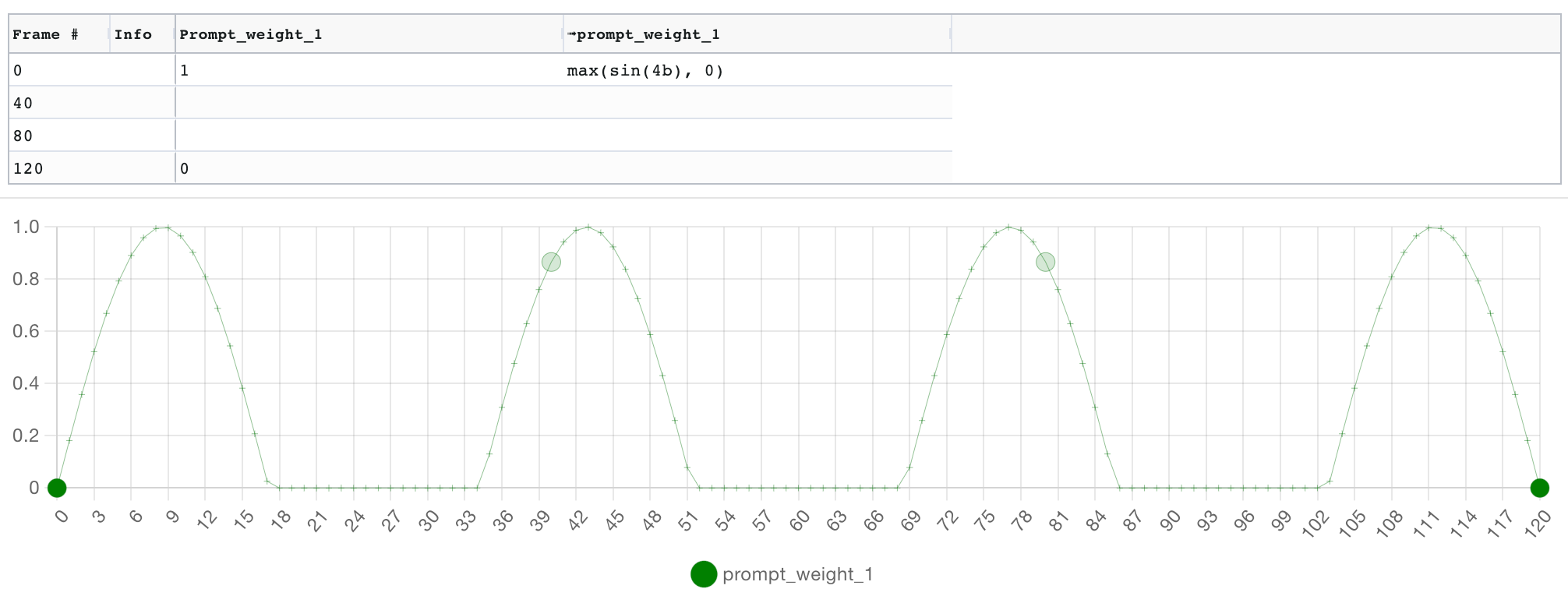

In the examples below, note how the oscillators' amplitude is set to the linearly interpolated value of the field (L), from its initial value of 1 on frame 0, to value 0 on frame 120. This is why the amplitude of the oscillator decreases over time.

Oscillator arguments:

- Period `p` (required): The period of the oscillation. By default the unit is frames, but you can specify seconds or beats by appending the appropriate suffix (e.g. `sin(p=4b)` or `sin(p=5s)`).

- Amplitude `a` (default: `1`): The amplitude of the oscillation. `sin(p=4b, a=2)` is equivalent to `sin(p=4b)*2`.

- Phase shift `ps` (default: `0`): The x-axis offset of the oscillation, i.e. how much to subtract from the frame number to get the frame's oscillation x position. A useful value is `-active_keyframe`, which will make the period start from the keyframe position. See below for an illustration.

- Centre `c` (default: `0`): The y-axis offset of the oscillation. `sin(p=4b, c=2)` is equivalent to `sin(p=4b)+2`.

- Limit `li` (default: `0`): If >0, limits the number of periods repeated.

- Pulse width `pw` (default: `5`): `pulse()` function only. The pulse width.
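As a rough illustration of how the period, amplitude, phase shift and centre arguments combine, here is a TypeScript sketch of a sine oscillator. It is not Parseq's implementation: `sineOscillator` and `SineArgs` are hypothetical names, the period is assumed to be in frames, and the sketch adds `ps` to the frame number, which is the sign convention that makes `-active_keyframe` restart the cycle at the keyframe. The limit argument is left out.

```typescript
// Hypothetical argument bag mirroring the p, a, ps and c arguments.
interface SineArgs {
  period: number;      // p: required, in frames
  amplitude?: number;  // a: default 1
  phaseShift?: number; // ps: default 0
  centre?: number;     // c: default 0
}

function sineOscillator(frame: number, args: SineArgs): number {
  const { period, amplitude = 1, phaseShift = 0, centre = 0 } = args;
  // Assumption: the phase shift is added to the frame number.
  const x = frame + phaseShift;
  return centre + amplitude * Math.sin((2 * Math.PI * x) / period);
}

// With ps = -active_keyframe, the cycle starts again at the keyframe (frame 30 here).
const activeKeyframe = 30;
const restartedAtKeyframe = sineOscillator(30, {
  period: 20,
  phaseShift: -activeKeyframe,
});
```

In this sketch, `amplitude: 2` gives the same result as multiplying the default oscillator by 2, and `centre: 2` the same as adding 2, matching the equivalences listed above.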

Examples:

| function | description | example |
|---|---|---|
| `bez()` | Bezier curve between previous and next keyframe. All arguments are optional. | |
| `slide()` | Linear slide over a fixed time period. Requires at least one of `to` or `from` parameters (frame value will be used if missing), and `in` parameter defines how long the slide should last. Optional value `os` behaves like offset for `bez()`. See image for 3 examples. | |

A range of methods from the Javascript Math object are exposed as follows, with a _ prefix.

Note that unlike the sin() oscillator above, these functions are not oscillators: they are simple functions.

| function | description | example |
|---|---|---|
| `_acos()` | Equivalent to Javascript's `Math.acos()` | |
| `_acosh()` | Equivalent to Javascript's `Math.acosh()` | |
| `_asin()` | Equivalent to Javascript's `Math.asin()` | |
| `_asinh()` | Equivalent to Javascript's `Math.asinh()` | |
| `_atan()` | Equivalent to Javascript's `Math.atan()` | |
| `_atanh()` | Equivalent to Javascript's `Math.atanh()` | |
| `_cbrt()` | Equivalent to Javascript's `Math.cbrt()` | |
| `_clz32()` | Equivalent to Javascript's `Math.clz32()` | |
| `_cos()` | Equivalent to Javascript's `Math.cos()` | |
| `_cosh()` | Equivalent to Javascript's `Math.cosh()` | |
| `_exp()` | Equivalent to Javascript's `Math.exp()` | |
| `_expm1()` | Equivalent to Javascript's `Math.expm1()` | |
| `_log()` | Equivalent to Javascript's `Math.log()` | |
| `_log10()` | Equivalent to Javascript's `Math.log10()` | |
| `_log1p()` | Equivalent to Javascript's `Math.log1p()` | |
| `_log2()` | Equivalent to Javascript's `Math.log2()` | |
| `_sign()` | Equivalent to Javascript's `Math.sign()` | |
| `_sinh()` | Equivalent to Javascript's `Math.sinh()` | |
| `_sqrt()` | Equivalent to Javascript's `Math.sqrt()` | |
| `_tan()` | Equivalent to Javascript's `Math.tan()` | |
| `_tanh()` | Equivalent to Javascript's `Math.tanh()` | |
| `_sin()` | Equivalent to Javascript's `Math.sin()` | |

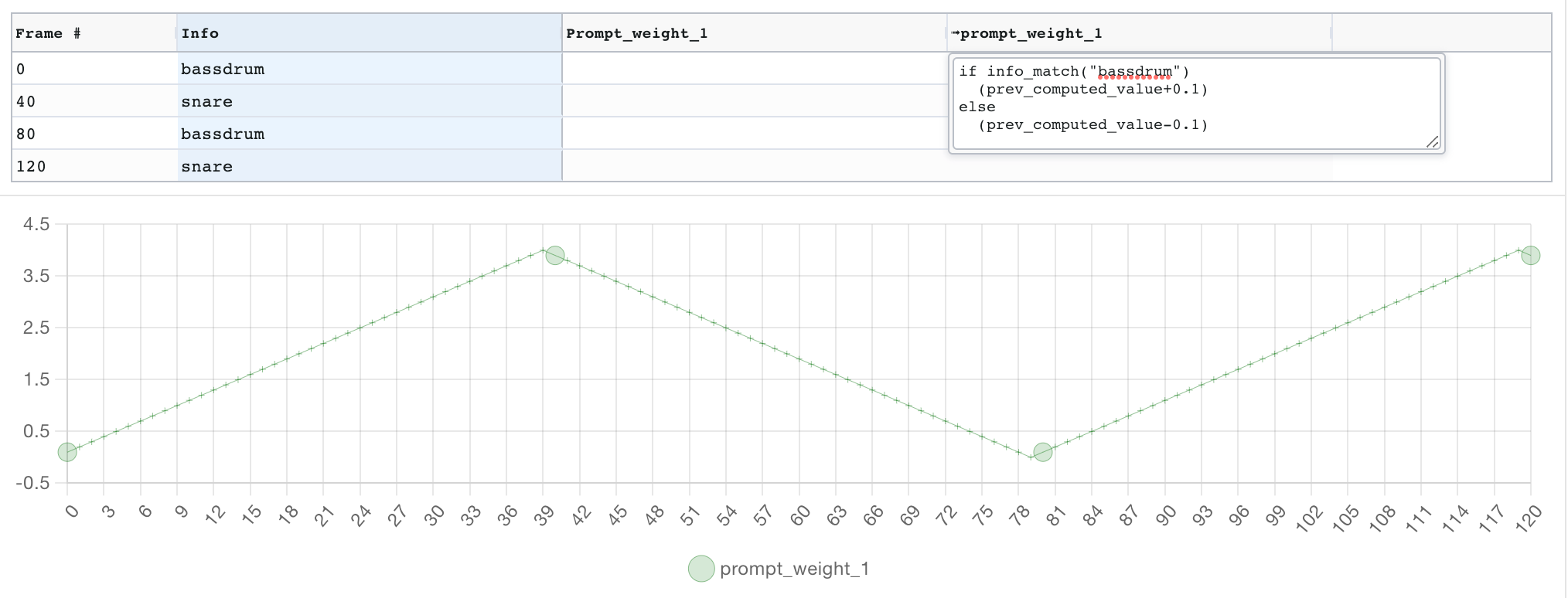

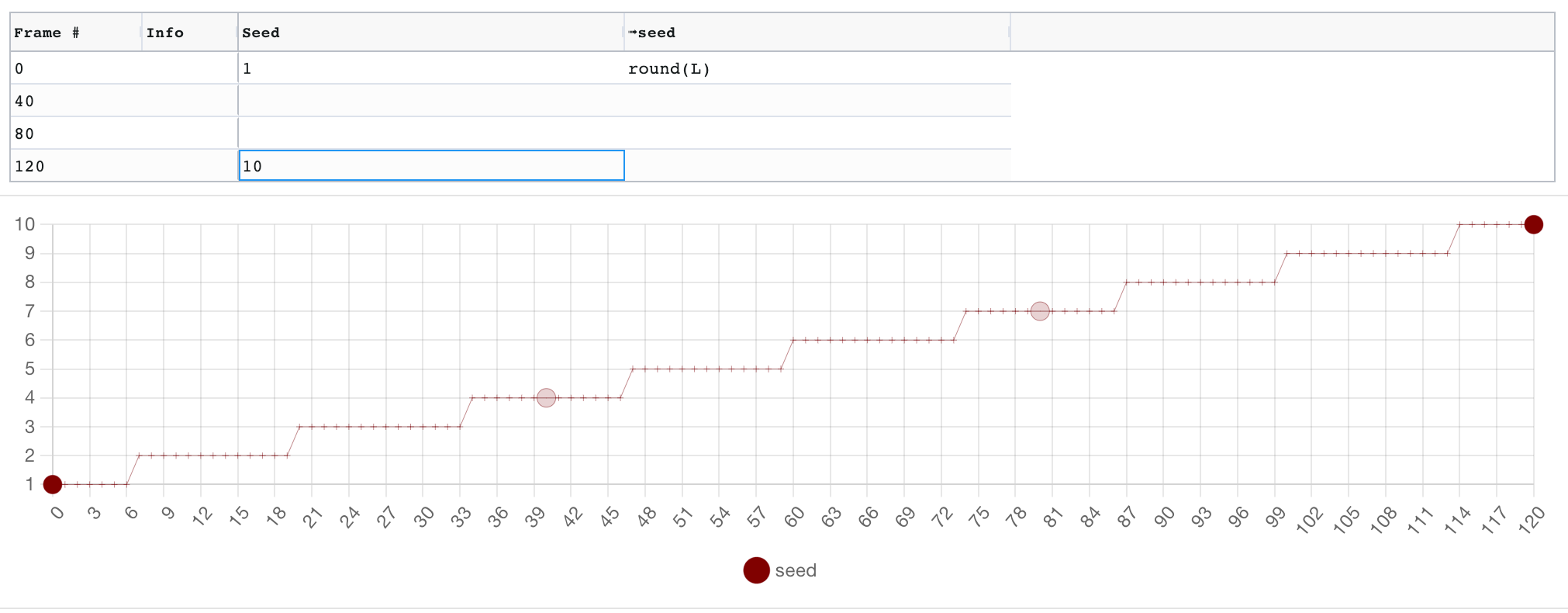

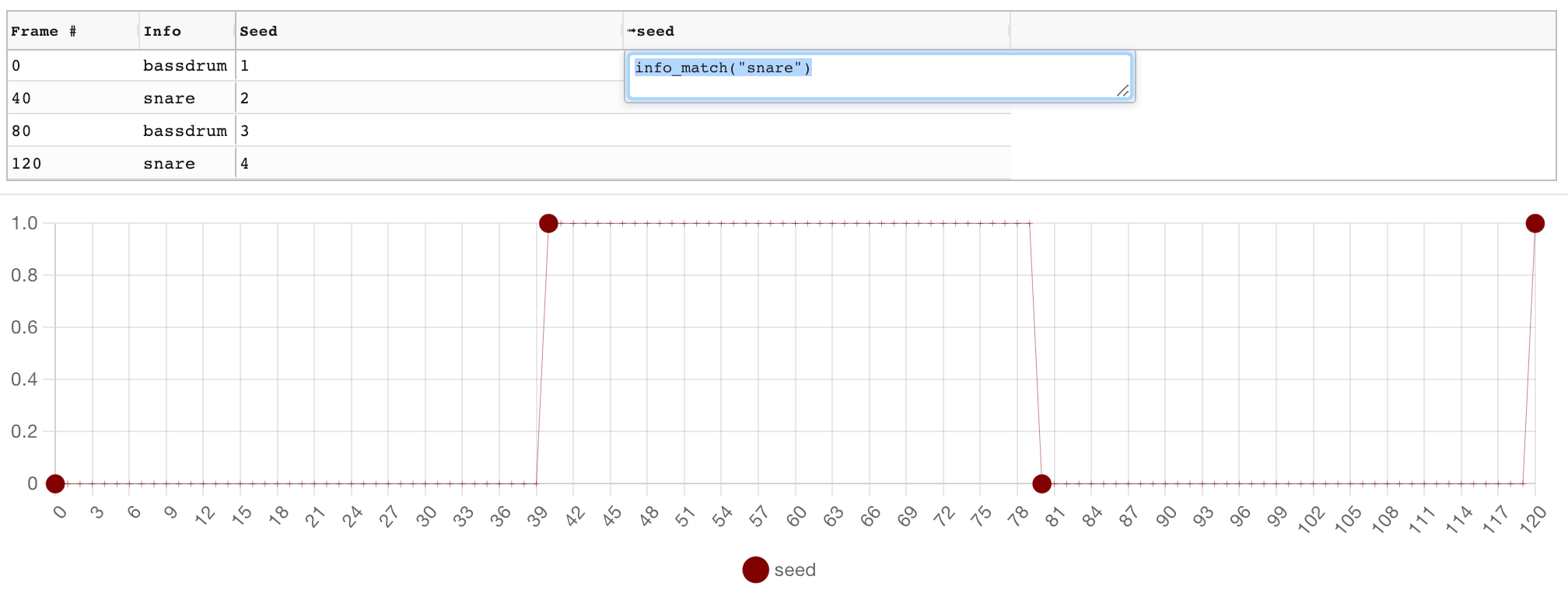

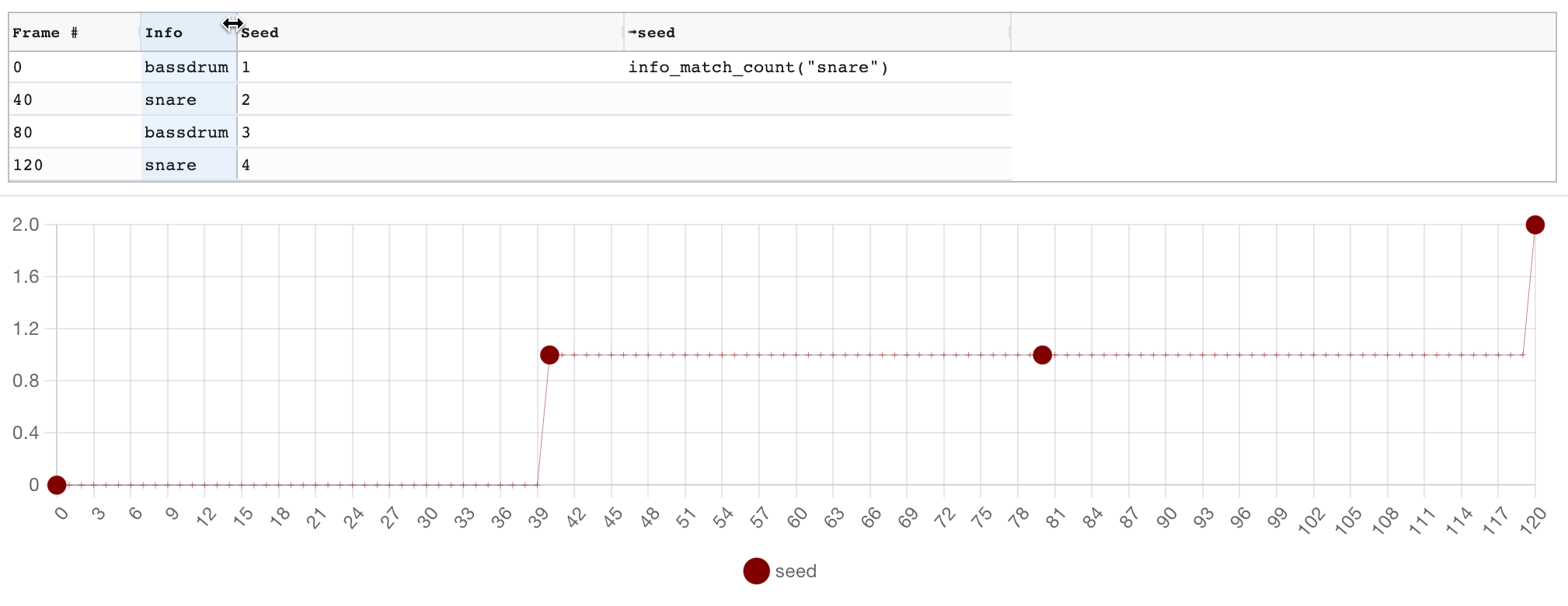

All keyframes have an optional "info" field which can hold an arbitrary string. You can query these from your expressions. For example, you can use functions to check whether the text of the current keyframe matches a regex, or to count how many past keyframes contained a given substring, or look forwards to when the next keyframe with a given string will occur.

Prompts can include any Parseq expression. For example, the following is a valid prompt using a conditional, the prompt weight operator : and string concatenation +:

    A painting of
    ${
        if (f<10)
            "a cat":prompt_weight_1
        else
            "a dog":prompt_weight_2 + " with floppy ears"
    }
    , highly detailed

On frames less than 10, it will produce a rendered prompt like `A painting of (a cat:0.45), highly detailed`, and on frames 10 and above, it will yield `A painting of (a dog:0.45) with floppy ears, highly detailed`.

Note that if you want to ensure something does not appear in your generated image, giving it a negative weight in the positive prompt is generally not sufficient: you need to put the term in the negative prompt. Therefore, if you want something to appear then disappear, it becomes necessary to "move" it between the positive and negative prompts. This can be done with conditionals, but the following functions make it easier.

| function | description | example |
|---|---|---|
| `posneg(<term>, <weight>)` | `<term>` must evaluate to a string and `<weight>` to a number. Automatically shuffles a term between the positive and negative prompt depending on the weight. For example, if `posneg("cat", prompt_weight_1)` is in the positive prompt, frames on which `prompt_weight_1` is positive will have `(cat:abs(prompt_weight_1))` in the positive prompt, and frames on which `prompt_weight_1` is negative will have `(cat:abs(prompt_weight_1))` in the negative prompt. | See this example video on Youtube. The video description includes a link to the parseq document. |
| `posneg_lora(<lora_name>, <weight>)` | Same as above but for loras. `posneg_lora("Smoke", prompt_weight_1)` evaluates to `<"lora":"Smoke":prompt_weight_1>` | See this example video on Youtube. The video description includes a link to the parseq document. |
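The behaviour described for `posneg()` can be sketched in TypeScript as below. This is an illustration only: `posnegSketch` and `PromptSide` are hypothetical names, the function renders one side of the prompt pair at a time, and treating a weight of exactly 0 as positive is an assumption.

```typescript
type PromptSide = "positive" | "negative";

// Returns the text that the term contributes to the requested prompt side.
function posnegSketch(term: string, weight: number, side: PromptSide): string {
  const weighted = `(${term}:${Math.abs(weight)})`;
  // Assumption: a weight of exactly 0 stays in the positive prompt.
  const belongsInPositive = weight >= 0;
  if (side === "positive") {
    return belongsInPositive ? weighted : "";
  }
  return belongsInPositive ? "" : weighted;
}

// A positive weight keeps the term in the positive prompt...
const shownInPositive = posnegSketch("cat", 0.45, "positive");
// ...and a negative weight moves it to the negative prompt with the absolute weight.
const shownInNegative = posnegSketch("cat", -0.3, "negative");
```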

See also the : operator above.

Parseq has a range of features to help you create animations with precisely-timed parameter fluctuations, for example for music synchronisation.

Parseq allows you to specify Frames per second (FPS) and beats per minute (BPM) which are used to map frame numbers to time and beat offsets. For example, if you set FPS to 10 and BPM to 120, a tooltip when you hover over frame 40 (in the grid or the graph) will show that this frame will occur 4 seconds or 8 beats into the video.

Furthermore, your interpolation formulae can reference beats and seconds by using the b and s suffixes on numbers. For example, here we define a sine oscillator of a period of 1 beat (in green), and a pulse oscillator with a period of 5s and a pulse width of 0.5s (in grey):

By default, keyframe positions are defined in terms of their frame number, which will remain fixed even if the FPS or BPM changes. For example, if you start at 10fps and create a keyframe on the 4th beat of a 120BPM track, the keyframe will be at frame 20. But if you decide to change to 20fps and update your track to be 140BPM, your animation will be out-of-sync because the 4th beat should now be on frame 34!

To solve this, you can lock your keyframes to their beat (or second) position. After doing this, they will remain in-sync even when you change FPS or BPM.
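One way to picture locking is that the keyframe stores its beat (or second) position and its frame number is recomputed whenever the FPS or BPM changes. The TypeScript sketch below illustrates that idea; `KeyframePosition` and `resolveFrame` are hypothetical names, and rounding to the nearest frame is an assumption.

```typescript
// Hypothetical representation of where a keyframe is anchored.
type KeyframePosition =
  | { lock: "frame"; frame: number }
  | { lock: "second"; second: number }
  | { lock: "beat"; beat: number };

// Recompute the frame number for the current FPS and BPM.
function resolveFrame(position: KeyframePosition, fps: number, bpm: number): number {
  if (position.lock === "frame") {
    return position.frame; // frame-locked keyframes never move
  }
  if (position.lock === "second") {
    return Math.round(position.second * fps);
  }
  // Beat-locked: beats -> seconds -> frames (rounding is an assumption).
  return Math.round(((position.beat * 60) / bpm) * fps);
}

const fourthBeat: KeyframePosition = { lock: "beat", beat: 4 };
const frameAt10fps120bpm = resolveFrame(fourthBeat, 10, 120); // 20
const frameAt20fps140bpm = resolveFrame(fourthBeat, 20, 140); // 34
```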

You can quickly create keyframes aligned with regular events by using the "at intervals" tab of the Add keyframe dialog. For example, here we are creating a keyframe at every beat position for the first 8 beats. The keyframe positions will be determined by using the document's BPM and FPS. Note that only 6 keyframes will be created, because keyframes already exist for beats 0 and 4:

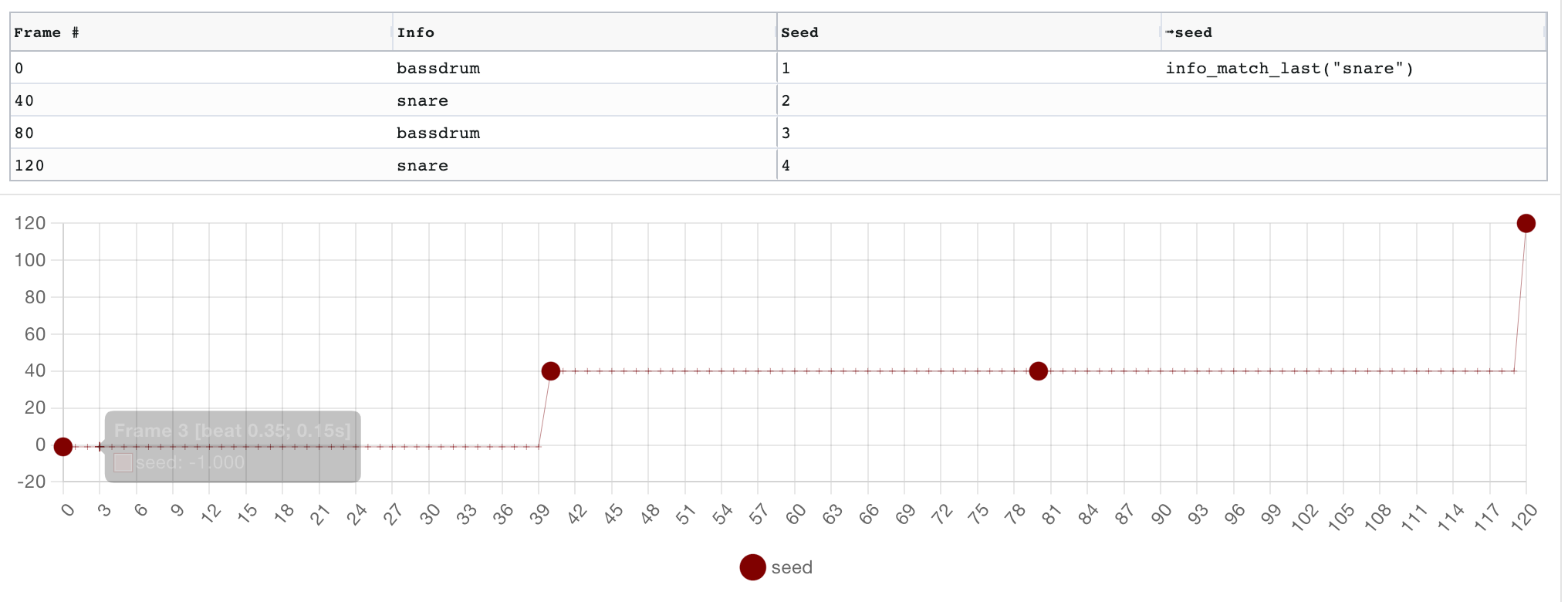
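The "at intervals" behaviour can be approximated with the TypeScript sketch below, which places a keyframe on each beat and skips positions that are already occupied. The function name is hypothetical, and the FPS of 10 and BPM of 120 used in the example are assumed values chosen so that beats 0 and 4 land on frames 0 and 20.

```typescript
// Returns the frames at which new keyframes would be created, one per beat,
// skipping any frame that already holds a keyframe.
function beatAlignedFrames(
  beatCount: number,
  fps: number,
  bpm: number,
  existingFrames: number[],
): number[] {
  const taken = existingFrames.slice();
  const created: number[] = [];
  for (let beat = 0; beat < beatCount; beat++) {
    const frame = Math.round(((beat * 60) / bpm) * fps);
    if (taken.indexOf(frame) === -1) {
      created.push(frame);
      taken.push(frame);
    }
  }
  return created;
}

// First 8 beats at 10 FPS / 120 BPM, with keyframes already on beats 0 and 4
// (frames 0 and 20): only 6 new keyframes are created.
const createdFrames = beatAlignedFrames(8, 10, 120, [0, 20]);
```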

A common practice is to label keyframes to indicate the audio event they represent (e.g. "bassdrum", "snare", etc...). You can then reference all such keyframes in interpolation formulae with functions like info_match_last(), info_match_next() and info_match_count(), as well as in the bulk edit dialog.

To help you align your keyframes and formulae with audio, you can load an audio file to view its waveform alongside your parameter graph. Zooming and panning the graph will apply to the audio (click and drag to pan, hold alt/option and mouse wheel to zoom). Scrolling the audio will pan the graph. A viewport control is available between the graph and audio. Prompt, beat and cursor markers are displayed on both visualisations.

Sequence.02.mp4

Manually creating and labelling keyframes for audio events can be tedious. Parseq improves this with an audio event detection feature that uses AubioJS:

After loading a reference audio file, head to the event detection tab under the waveform and hit detect events. Markers will appear on the waveform indicating detected event positions.

You can tweak the event detection by controlling the following:

- Filtering: above the waveform and to the right is a biquad filter that allows you to apply a low/band/high-pass filter to your audio. This will enable you, for example, to isolate bassdrums from snares.

- Method: a range of event detection algorithms to choose from. Experiment with these, as they can produce vastly different results. See the aubioonset CLI docs for more details.

- Threshold: defines how picky to be when identifying onset events. Lower threshold values result in more events, higher values result in fewer events.

- Silence: from aubio docs: "volume in dB under which the onset will not be detected. A value of -20.0 would eliminate most onsets but the loudest ones. A value of -90.0 would select all onsets."

Once you're satisfied with the detected events, you can create keyframes by switching to the keyframe generation tab. You can pick a custom label that will be assigned to all newly generated keyframes. If a keyframe already exists at an event position, the label will be combined with the existing label.

See event detection and keyframe generation in action in this tutorial.

Time series are a powerful feature of Parseq that allows you to import any series of numbers and reference them from Parseq formulas. A primary use case for this is to sync parameter changes to audio pitch or amplitude.

Click 'Add time series' and select an audio file to work with. You can then choose whether to extract pitch or amplitude. When extracting pitch, you can choose from a range of methods (see the aubiopitch CLI docs for details).

A biquad filter that allows you to apply a low/band/high-pass filter to your audio is available if you wish to pre-process it before performing the pitch/amplitude extraction.

After extracting the data, you can post-process it to take the absolute value, exclude datapoints outside a given range, clamp datapoints outside a given range, and normalise to a target range.

Using absolute value is particularly valuable for amplitude, where the unprocessed amplitude will oscillate between positive and negative.
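The post-processing steps can be sketched in TypeScript as a small pipeline. The option names, the `postProcess` function and the order in which the steps are applied are assumptions made for illustration, not a description of Parseq's code.

```typescript
// Hypothetical options mirroring the post-processing steps described above.
interface PostProcessOptions {
  absolute?: boolean;           // take the absolute value of every point
  exclude?: [number, number];   // drop points outside [min, max]
  clamp?: [number, number];     // pull points outside [min, max] back to the bounds
  normalise?: [number, number]; // rescale the result to [targetMin, targetMax]
}

function postProcess(values: number[], options: PostProcessOptions): number[] {
  let out = options.absolute ? values.map((v) => Math.abs(v)) : values.slice();
  if (options.exclude) {
    const [lo, hi] = options.exclude;
    out = out.filter((v) => v >= lo && v <= hi);
  }
  if (options.clamp) {
    const [lo, hi] = options.clamp;
    out = out.map((v) => Math.min(hi, Math.max(lo, v)));
  }
  if (options.normalise && out.length > 0) {
    const [targetLo, targetHi] = options.normalise;
    const min = out.reduce((a, b) => Math.min(a, b), Infinity);
    const max = out.reduce((a, b) => Math.max(a, b), -Infinity);
    const span = max - min;
    // A flat series has no range to rescale, so map it to the lower target bound.
    out = out.map((v) =>
      span === 0 ? targetLo : targetLo + ((v - min) / span) * (targetHi - targetLo),
    );
  }
  return out;
}

// Raw amplitude swings between positive and negative; take the absolute value
// and normalise it to the range 0..1.
const loudness = postProcess([-0.5, 0.25, -1, 0.75], {
  absolute: true,
  normalise: [0, 1],
});
```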

The final timeseries will be decimated to a maximum of 2000 points. The green dots represent where frames land on those points.

Once the time series are created, you can choose an alias for them, and then reference them in your formula.

There are two ways to reference timeseries:

See pitch detection in action in this tutorial.

This feature is deprecated. See its archived documentation on the Parseq wiki.

In addition to audio pitch and amplitude data, you can also load any CSV as a timeseries. This means you can import essentially any series of numbers for use in Parseq.

The format of the CSV file must be timestamp,value on each row. You can choose whether the timestamp represents milliseconds or frames before you import the file.
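As an illustration of that format, the TypeScript sketch below parses `timestamp,value` rows and converts millisecond timestamps to frame positions. The function and type names are hypothetical, and the sketch assumes there is no header row.

```typescript
interface SeriesPoint {
  frame: number;
  value: number;
}

type TimestampUnit = "ms" | "frames";

// Parse "timestamp,value" rows into frame-indexed points.
function parseTimeSeriesCsv(csv: string, unit: TimestampUnit, fps: number): SeriesPoint[] {
  const points: SeriesPoint[] = [];
  for (const rawLine of csv.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (line === "") continue; // ignore blank lines
    const parts = line.split(",");
    if (parts.length !== 2 || parts[0].trim() === "" || parts[1].trim() === "") {
      throw new Error("Expected timestamp,value but got: " + line);
    }
    const timestamp = Number(parts[0]);
    const value = Number(parts[1]);
    if (isNaN(timestamp) || isNaN(value)) {
      throw new Error("Non-numeric row: " + line);
    }
    // Millisecond timestamps are converted to frames using the FPS.
    const frame = unit === "ms" ? (timestamp / 1000) * fps : timestamp;
    points.push({ frame, value });
  }
  return points;
}

// Three rows with millisecond timestamps, read at 20 FPS.
const parsedPoints = parseTimeSeriesCsv("0,1\n500,2\n1000,3", "ms", 20);
```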

For example, here we have imported data given to us by ChatGPT 4 after asking the following:

Please generate CSV output in the format x,y , where x is a number increasing by 1 from 0 to 100, and the y value draws a simple city skyline. Output only the CSV data, do not provide any explanation.

Parseq has an experimental feature that enables you to visualise your camera movements in real time. It is inspired by AnimationPreview by @pharmapsychotic .

It currently has a few caveats:

- It is a rough reference only: the image warping algorithm is not identical to Deforum's.

- It currently only works for 3D animation params (x, y, z translation and 3d rotation).

- It does not factor in FPS or perspective params (fov, near, far).

Nonetheless, it is quite useful to get a general sense of what your movement params are going to do:

Sequence.02_1.mp4

Parseq can be used with all Deforum animation modes (2D, 3D, prompt interpolation, video etc...). You don't need to do anything special in Parseq to switch between modes: simply tweak the parameters you're interested in.

Here are the parameters that Parseq can control. You can select which ones are controlled by your document in the 'Managed Fields' section. Any 'Managed Fields' for which you also set values in the A1111 UI for Deforum will be overridden by Parseq. On the other hand, fields you don't select in 'Managed fields' can be controlled from the Deforum UI as normal. So you can 'mix & match' Parseq-controlled values with Deforum-controlled values.

Stable diffusion generation parameters:

- seed

- scale (CFG)

- noise: additional noise to add during generation, can help

- strength: how much the last generated image should influence the current generation

2D animation parameters:

- angle (ignored in 3D animation mode, use rotation 3D z axis)

- zoom (ignored in 3D animation mode, use translation z axis)

- translation x axis

- translation y axis

Pseudo-3D animation parameters (ignored in 3D animation mode):

- perspective theta angle

- perspective phi angle

- perspective gamma angle

- perspective field of view

3D animation parameters (all ignored in 2D animation mode):

- translation z axis

- rotation_3d x axis

- rotation_3d y axis

- rotation_3d z axis

- field of view

- near point

- far point

Anti-blur parameters:

- antiblur_kernel

- antiblur_sigma

- antiblur_amount

- antiblur_threshold

Hybrid video parameters:

- hybrid_comp_alpha

- hybrid_comp_mask_blend_alpha

- hybrid_comp_mask_contrast

- hybrid_comp_mask_auto_contrast_cutoff_low

- hybrid_comp_mask_auto_contrast_cutoff_high

Other parameters:

- contrast: factor by which to adjust the last generated image's contrast before feeding it to the current generation. 1 is no change, <1 lowers contrast, >1 increases contrast.

Parseq provides a further 8 keyframable parameters (prompt_weight_1 to prompt_weight_8) that you can reference in your prompts, and that can therefore be used as prompt weights. You can use any prompt format that will be recognised by A1111, keeping in mind that anything enclosed in ${...} will be evaluated as a Parseq expression.

For example, here's a positive prompt that uses Composable Diffusion to interpolate between faces:

    Jennifer Aniston, centered, high detail studio photo portrait :${prompt_weight_1} AND
    Brad Pitt, centered, high detail studio photo portrait :${prompt_weight_2} AND
    Ben Affleck, centered, high detail studio photo portrait :${prompt_weight_3} AND
    Gwyneth Paltrow, centered, high detail studio photo portrait :${prompt_weight_4} AND
    Zac Efron, centered, high detail :${prompt_weight_5} AND
    Clint Eastwood, centered, high detail studio photo portrait :${prompt_weight_6} AND
    Jennifer Lawrence, centered, high detail studio photo portrait :${prompt_weight_7} AND
    Jude Law, centered, high detail studio photo portrait :${prompt_weight_8}

And here's an example using term weighting:

    (Jennifer Aniston:${prompt_weight_1}), (Brad Pitt:${prompt_weight_2}), (Ben Affleck:${prompt_weight_3}), (Gwyneth Paltrow:${prompt_weight_4}), (Zac Efron:${prompt_weight_5}), (Clint Eastwood:${prompt_weight_6}), (Jennifer Lawrence:${prompt_weight_7}), (Jude Law:${prompt_weight_8}), centered, high detail studio photo portrait

A corresponding parameter flow could look like this:

Note that any Parseq expression can be used, so for example the following will alternate between cat and dog on each beat:

    Detailed photo of a ${if (floor(b%2)==0) "cat" else "dog"}

Some important notes:

- Remember that unless you set `strength` to `0`, prior frames influence the current frame in addition to the prompt, so previous items won't disappear immediately even if they are removed from the prompt on a given frame.

- To counteract this, you may wish to put terms you don't wish to see at a given frame in your negative prompt too, with inverted weights.

So the prior example might look like this:

| Positive | Negative |
|---|---|
| `Detailed photo of a ${if (floor(b%2)==0) "cat" else "dog"}` | `${if (floor(b%2)==0) "dog" else "cat"}` |

You can add additional prompts and assign each one to a frame range:

If the ranges overlap, Parseq will combine the overlapping prompts with composable diffusion. You can decide whether the composable diffusion weights should be fixed, slide linearly in and out, or be defined by a custom Parseq expression.

Note that if overlapping prompts already use composable diffusion (... AND ...), this may lead to unexpected results, because only the last section of the original prompt will be weighted against the overlapping prompt. Parseq will warn you if this is happening.

For a great description of seed travelling, see Yownas' script. In summary, you can interpolate between the latent noise generated by 2 seeds by setting the first as the (main) seed, the second as the subseed, and fluctuating the subseed strength. A subseed strength of 0 will just use the main seed, and 1 will just use the subseed as the seed. Any value in between will interpolate between the two noise patterns using spherical linear interpolation (SLERP).
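For reference, the generic spherical linear interpolation formula can be sketched in TypeScript as below. This is the textbook SLERP applied to plain number arrays, not the web UI's actual noise-mixing code, and the two-element vectors in the example are toy stand-ins for latent noise.

```typescript
// Spherical linear interpolation between two vectors of equal length.
function slerp(start: number[], end: number[], t: number): number[] {
  if (start.length !== end.length) {
    throw new Error("Vectors must have the same length");
  }
  let dot = 0;
  let normStart = 0;
  let normEnd = 0;
  for (let i = 0; i < start.length; i++) {
    dot += start[i] * end[i];
    normStart += start[i] * start[i];
    normEnd += end[i] * end[i];
  }
  const denominator = Math.sqrt(normStart) * Math.sqrt(normEnd);
  // Cosine of the angle between the vectors, clamped against rounding error.
  const cosOmega = denominator === 0 ? 1 : Math.max(-1, Math.min(1, dot / denominator));
  const omega = Math.acos(cosOmega);
  const sinOmega = Math.sin(omega);
  if (sinOmega < 1e-6) {
    // Nearly parallel (or anti-parallel) vectors: fall back to linear interpolation.
    return start.map((v, i) => (1 - t) * v + t * end[i]);
  }
  const weightStart = Math.sin((1 - t) * omega) / sinOmega;
  const weightEnd = Math.sin(t * omega) / sinOmega;
  return start.map((v, i) => weightStart * v + weightEnd * end[i]);
}

// Halfway between two perpendicular unit vectors stays on the unit circle.
const halfwayNoise = slerp([1, 0], [0, 1], 0.5);
```

Here `t` plays the role of the subseed strength: 0 returns the first vector and 1 returns the second.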

Parseq does not currently expose the subseed and subseed strength parameters explicitly. Instead, it takes fractional seed values and uses them to control the subseed and subseed strength values. For example, if on a given frame your seed value is 10.5, Parseq will send 10 as the seed, 11 as the subseed, and 0.5 as the subseed strength.
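The mapping from a fractional seed to the three values can be sketched in TypeScript like this. `splitSeed` and `SeedTravel` are hypothetical names used for illustration, and the sketch assumes non-negative seed values.

```typescript
interface SeedTravel {
  seed: number;
  subseed: number;
  subseedStrength: number;
}

// Split a fractional seed into main seed, adjacent subseed and subseed strength.
function splitSeed(fractionalSeed: number): SeedTravel {
  const seed = Math.floor(fractionalSeed);
  return {
    seed,
    subseed: seed + 1,
    subseedStrength: fractionalSeed - seed,
  };
}

// A seed value of 10.5 becomes seed 10, subseed 11, subseed strength 0.5.
const travel = splitSeed(10.5);
```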

The downside is you can only interpolate between adjacent seeds. The benefit is seed travelling is very intuitive. If you'd like to have full control over the subseed and subseed strength, feel free to raise a feature request!

Note that the results of seed travelling are best seen with no input image (Interpolation animation mode) or with a very low strength. Else, the low input variations will likely result in artifacts / deep-frying.

Otherwise it's best to change the seed by at least 1 on each frame (you can also experiment with seed oscillation, for less variation).

Parseq aims to let you set absolute values for all parameters. So if you want to progressively rotate 180 degrees over 4 frames, you specify the following values for each frame: 45, 90, 135, 180.

However, because Stable Diffusion animations are made by feeding the last generated frame into the current generation step, some animation parameters become relative if there is enough loopback strength. So if you want to rotate 180 degrees over 4 frames, the animation engine expects the values 45, 45, 45, 45.

This is not the case for all parameters: for example, the seed value and field-of-view settings have no dependency on prior frames, so the animation engine expects absolute values.

To reconcile this, Parseq supplies delta values to Deforum for certain parameters. This is enabled by default, but you can toggle it off in the A1111 Deforum extension UI if you want to see the difference.

For most parameters the delta value for a given field is simply the difference between the current and previous frame's value for that field. However, a few parameters such as 2D zoom (which is actually a scale factor) are multiplicative, so the delta is the ratio between the previous and current value.
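The two kinds of delta can be sketched in TypeScript as follows. The function names are hypothetical, and the baseline used for the first frame (0 for additive parameters, 1 for multiplicative ones) is an assumption made so that the sketch is self-contained.

```typescript
// Additive delta: difference between the current and previous frame's value.
function additiveDeltas(values: number[], baseline = 0): number[] {
  return values.map((value, i) => value - (i === 0 ? baseline : values[i - 1]));
}

// Multiplicative delta: ratio of the current value to the previous frame's value.
function multiplicativeDeltas(values: number[], baseline = 1): number[] {
  return values.map((value, i) => value / (i === 0 ? baseline : values[i - 1]));
}

// Absolute rotation of 45, 90, 135, 180 degrees becomes 45 degrees per frame.
const rotationDeltas = additiveDeltas([45, 90, 135, 180]);
// An absolute scale factor that doubles every frame becomes a constant ratio of 2.
const zoomDeltas = multiplicativeDeltas([2, 4, 8]);
```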

Parseq can become slow when working with a large number of frames. If you see performance degradations, try the following:

- In the Managed Fields section, select only the fields you want to control with Parseq.

- Close the sections you're not using. For example, if you're not using Sparklines or the test Output view, close them using the ^ next to the section title.

- Hide the fields you're not actively working with by unselecting them in the "show/hide fields" selection box (or toggle them by clicking their sparklines).

- Disable autorender (and remember to manually hit the render button every few changes).

- Note that the graph becomes uneditable when displaying more than 1000 frames, and does not show keyframes. To restore editing, zoom in to a section less than 1000 frames.

Parseq is currently a front-end React app. It is part way through a conversion from Javascript to Typescript. There is currently very little back-end: by default, persistence is entirely in browser IndexedDB storage via Dexie.js. Signed-in users can optionally upload data to a Firebase-backed datastore.

You'll need node and npm on your system before you get started.

If you want to dive in:

- Run `npm install` to pull dependencies.

- Run `npm start` to run the Parseq UI locally in dev mode on port 3000. You can now access the UI on `localhost:3000`. Code changes should be hot-reloaded.

Hosting & deployment is done using Firebase. Merges to master are automatically deployed to the staging channel. PRs are automatically deployed to the dev channel. There is currently no automated post-deployment verification or promotion to prod.

Assuming you have the right permissions, you can view active deployments with:

    firebase hosting:channel:list

And promote from staging to prod with:

    firebase hosting:clone sd-parseq:staging sd-parseq:live

This script includes ideas and code sourced from many other scripts. Thanks in particular to the following sources of support and inspiration:

- Everyone supporting Parseq on Patreon, including: Adam Sinclair, MJ, AndyXT, King Kush, Ben Del Vacchio, Zirteq, Kewk, BinaryLady at TheTechMargin, ascendant, Sasha Agafonoff, Brandon Glasgow, Koshi Mazaki, lexvesseur, Andreas Lewitzki, veryVANYA, Nenad Kuzmanovic, Stash, Sinneys, wildpusa, Chris Hughes, Desmond Grealy, HackerPrime, Lottery Discountz, Ronny Khalil

- Everyone who has bought me a coffee!

- Everyone who has contributed to the A1111 web UI

- Everyone who has contributed to Deforum

- Everyone trying out Parseq and giving feedback on Discord

- Everyone behind Aubio, AubioJS, Wavesurfer, react-timeline-editor, ag-grid (community edition), p5, chart.js and recharts.

- The following scripts and their authors from whom I picked up some good ideas when I was starting out:

- Filarus for their vid2vid script.

- Animator-Anon for their animation script.

- Yownas for their seed travelling script

- feffy380 for the prompt-morph script

- eborboihuc for the clear implementation of 3d rotations using `cv2.warpPerspective()`