diff --git a/.dev/md2yml.py b/.dev/md2yml.py

index 7fce17a02e..af7b8f2f73 100755

--- a/.dev/md2yml.py

+++ b/.dev/md2yml.py

@@ -162,8 +162,9 @@ def parse_md(md_file):

model_name = fn[:-3]

fps = els[fps_id] if els[fps_id] != '-' and els[

fps_id] != '' else -1

- mem = els[mem_id] if els[mem_id] != '-' and els[

- mem_id] != '' else -1

+ mem = els[mem_id].split(

+ '\\'

+ )[0] if els[mem_id] != '-' and els[mem_id] != '' else -1

crop_size = els[crop_size_id].split('x')

assert len(crop_size) == 2

method = els[method_id].split()[0].split('-')[-1]
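The updated `mem` expression in the hunk above can be sketched in isolation as follows. `parse_mem` is a hypothetical helper name used only for illustration, and the sample cell `'57.0\\*'` is an assumed input (an escaped footnote marker), not a value taken from this diff.

```python
def parse_mem(cell):
    """Mimic the updated `mem` expression for one Markdown table cell."""
    # Missing values are written as '-' or left empty in the table.
    if cell == '-' or cell == '':
        return -1
    # Keep only the text before the first backslash; the result stays a string.
    return cell.split('\\')[0]


if __name__ == '__main__':
    for cell in ('8.0', '57.0\\*', '-', ''):
        print(repr(cell), '->', repr(parse_mem(cell)))
```

Cells without a backslash pass through unchanged, so the behaviour for existing tables should be the same as before the change.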

diff --git a/README.md b/README.md

index 117e3230ed..226b60f2fa 100644

--- a/README.md

+++ b/README.md

@@ -84,6 +84,7 @@ Supported backbones:

- [x] [Vision Transformer (ICLR'2021)](configs/vit)

- [x] [Swin Transformer (ICCV'2021)](configs/swin)

- [x] [Twins (NeurIPS'2021)](configs/twins)

+- [x] [ConvNeXt (ArXiv'2022)](configs/convnext)

Supported methods:

diff --git a/README_zh-CN.md b/README_zh-CN.md

index 480cf96385..852459fa82 100644

--- a/README_zh-CN.md

+++ b/README_zh-CN.md

@@ -83,6 +83,7 @@ MMSegmentation 是一个基于 PyTorch 的语义分割开源工具箱。它是 O

- [x] [Vision Transformer (ICLR'2021)](configs/vit)

- [x] [Swin Transformer (ICCV'2021)](configs/swin)

- [x] [Twins (NeurIPS'2021)](configs/twins)

+- [x] [ConvNeXt (ArXiv'2022)](configs/convnext)

已支持的算法:

diff --git a/configs/_base_/datasets/ade20k_640x640.py b/configs/_base_/datasets/ade20k_640x640.py

new file mode 100644

index 0000000000..14a4bb092f

--- /dev/null

+++ b/configs/_base_/datasets/ade20k_640x640.py

@@ -0,0 +1,54 @@

+# dataset settings

+dataset_type = 'ADE20KDataset'

+data_root = 'data/ade/ADEChallengeData2016'

+img_norm_cfg = dict(

+ mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)

+crop_size = (640, 640)

+train_pipeline = [

+ dict(type='LoadImageFromFile'),

+ dict(type='LoadAnnotations', reduce_zero_label=True),

+ dict(type='Resize', img_scale=(2560, 640), ratio_range=(0.5, 2.0)),

+ dict(type='RandomCrop', crop_size=crop_size, cat_max_ratio=0.75),

+ dict(type='RandomFlip', prob=0.5),

+ dict(type='PhotoMetricDistortion'),

+ dict(type='Normalize', **img_norm_cfg),

+ dict(type='Pad', size=crop_size, pad_val=0, seg_pad_val=255),

+ dict(type='DefaultFormatBundle'),

+ dict(type='Collect', keys=['img', 'gt_semantic_seg']),

+]

+test_pipeline = [

+ dict(type='LoadImageFromFile'),

+ dict(

+ type='MultiScaleFlipAug',

+ img_scale=(2560, 640),

+ # img_ratios=[0.5, 0.75, 1.0, 1.25, 1.5, 1.75],

+ flip=False,

+ transforms=[

+ dict(type='Resize', keep_ratio=True),

+ dict(type='RandomFlip'),

+ dict(type='Normalize', **img_norm_cfg),

+ dict(type='ImageToTensor', keys=['img']),

+ dict(type='Collect', keys=['img']),

+ ])

+]

+data = dict(

+ samples_per_gpu=4,

+ workers_per_gpu=4,

+ train=dict(

+ type=dataset_type,

+ data_root=data_root,

+ img_dir='images/training',

+ ann_dir='annotations/training',

+ pipeline=train_pipeline),

+ val=dict(

+ type=dataset_type,

+ data_root=data_root,

+ img_dir='images/validation',

+ ann_dir='annotations/validation',

+ pipeline=test_pipeline),

+ test=dict(

+ type=dataset_type,

+ data_root=data_root,

+ img_dir='images/validation',

+ ann_dir='annotations/validation',

+ pipeline=test_pipeline))
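A rough sketch of what `img_scale=(2560, 640)` with `ratio_range=(0.5, 2.0)` implies for an input image. It assumes that `Resize` multiplies `img_scale` by the sampled ratio and then fits the image inside that box while keeping its aspect ratio; that reading is an assumption, not something this diff states. `rescaled_size` is a hypothetical helper and takes the ratio as an argument instead of sampling it.

```python
def rescaled_size(width, height, ratio, img_scale=(2560, 640)):
    """Estimate the (width, height) of an image after the training Resize step."""
    # Scale the target box by the ratio sampled from ratio_range.
    scale = (int(img_scale[0] * ratio), int(img_scale[1] * ratio))
    max_long_edge, max_short_edge = max(scale), min(scale)
    # Fit the image inside the box without changing its aspect ratio.
    factor = min(max_long_edge / max(height, width),
                 max_short_edge / min(height, width))
    return int(width * factor + 0.5), int(height * factor + 0.5)


if __name__ == '__main__':
    # A 683x512 image at the two ends and the middle of ratio_range.
    for ratio in (0.5, 1.0, 2.0):
        print(ratio, rescaled_size(683, 512, ratio))
```

Under this assumption the short side of a typical image ranges from about 320 to 1280 pixels before `RandomCrop`, and `Pad` fills the crop up to 640x640 when the resized image is smaller than that.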

diff --git a/configs/_base_/models/upernet_convnext.py b/configs/_base_/models/upernet_convnext.py

new file mode 100644

index 0000000000..36b882f683

--- /dev/null

+++ b/configs/_base_/models/upernet_convnext.py

@@ -0,0 +1,44 @@

+norm_cfg = dict(type='SyncBN', requires_grad=True)

+custom_imports = dict(imports='mmcls.models', allow_failed_imports=False)

+checkpoint_file = 'https://download.openmmlab.com/mmclassification/v0/convnext/downstream/convnext-base_3rdparty_32xb128-noema_in1k_20220301-2a0ee547.pth' # noqa

+model = dict(

+ type='EncoderDecoder',

+ pretrained=None,

+ backbone=dict(

+ type='mmcls.ConvNeXt',

+ arch='base',

+ out_indices=[0, 1, 2, 3],

+ drop_path_rate=0.4,

+ layer_scale_init_value=1.0,

+ gap_before_final_norm=False,

+ init_cfg=dict(

+ type='Pretrained', checkpoint=checkpoint_file,

+ prefix='backbone.')),

+ decode_head=dict(

+ type='UPerHead',

+ in_channels=[128, 256, 512, 1024],

+ in_index=[0, 1, 2, 3],

+ pool_scales=(1, 2, 3, 6),

+ channels=512,

+ dropout_ratio=0.1,

+ num_classes=19,

+ norm_cfg=norm_cfg,

+ align_corners=False,

+ loss_decode=dict(

+ type='CrossEntropyLoss', use_sigmoid=False, loss_weight=1.0)),

+ auxiliary_head=dict(

+ type='FCNHead',

+ in_channels=384,

+ in_index=2,

+ channels=256,

+ num_convs=1,

+ concat_input=False,

+ dropout_ratio=0.1,

+ num_classes=19,

+ norm_cfg=norm_cfg,

+ align_corners=False,

+ loss_decode=dict(

+ type='CrossEntropyLoss', use_sigmoid=False, loss_weight=0.4)),

+ # model training and testing settings

+ train_cfg=dict(),

+ test_cfg=dict(mode='whole'))
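Two values in this base file look like placeholders rather than ADE20K settings: `num_classes=19` (ADE20K has 150 classes) and `auxiliary_head.in_channels=384`, while `in_index=2` selects the stage listed as 512 channels in `decode_head.in_channels`. The sketch below checks that consistency and shows the kind of override an inheriting config would presumably need. `expected_in_channels` and `override` are hypothetical names, and the override is an assumption, not part of this excerpt.

```python
# Stage widths as listed in decode_head.in_channels of the base file.
backbone_channels = [128, 256, 512, 1024]


def expected_in_channels(in_index, channels=backbone_channels):
    """Return the channel count(s) a head should declare for its in_index."""
    if isinstance(in_index, (list, tuple)):
        return [channels[i] for i in in_index]
    return channels[in_index]


decode_head = dict(in_channels=[128, 256, 512, 1024], in_index=[0, 1, 2, 3])
auxiliary_head = dict(in_channels=384, in_index=2)

# Hypothetical override for an ADE20K config that inherits the base model.
override = dict(
    decode_head=dict(num_classes=150),
    auxiliary_head=dict(in_channels=512, num_classes=150))

if __name__ == '__main__':
    for name, head in (('decode_head', decode_head),
                       ('auxiliary_head', auxiliary_head)):
        ok = head['in_channels'] == expected_in_channels(head['in_index'])
        print(name, 'consistent' if ok else 'needs override')
```

With the base values the auxiliary head is reported as needing an override, which matches the 384 versus 512 mismatch noted above.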

diff --git a/configs/convnext/README.md b/configs/convnext/README.md

new file mode 100644

index 0000000000..b70f2c62c6

--- /dev/null

+++ b/configs/convnext/README.md

@@ -0,0 +1,71 @@

+# ConvNeXt

+

+[A ConvNet for the 2020s](https://arxiv.org/abs/2201.03545)

+

+## Introduction

+

+

+

+Official Repo

+

+Code Snippet

+

+## Abstract

+

+

+

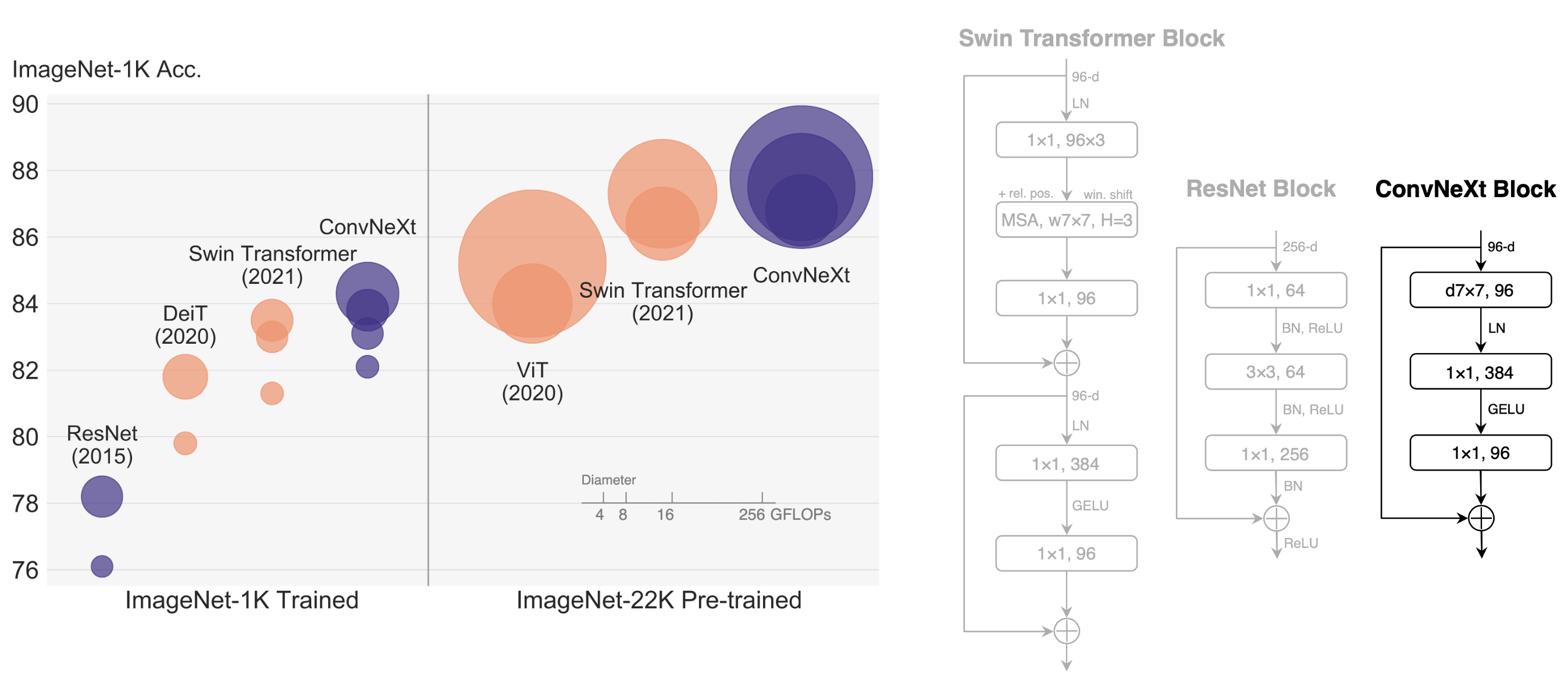

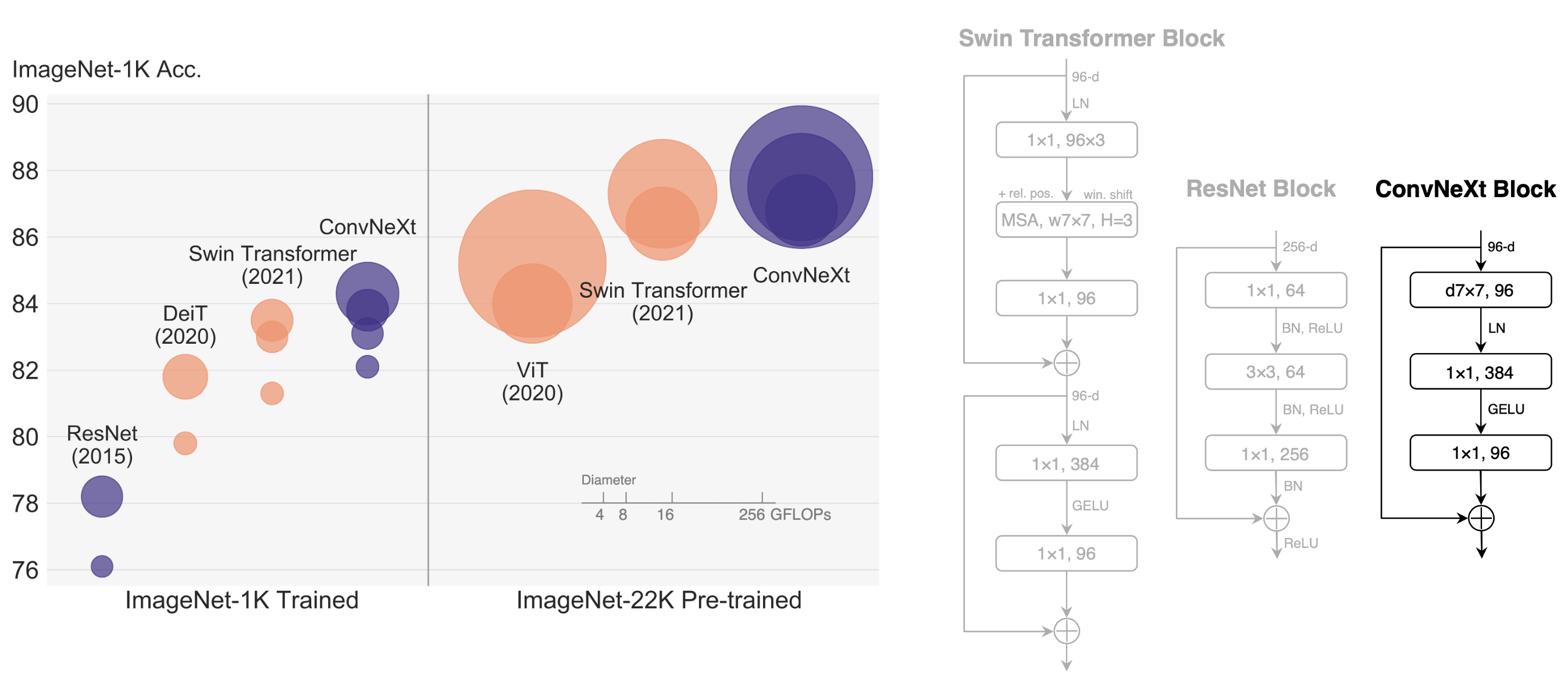

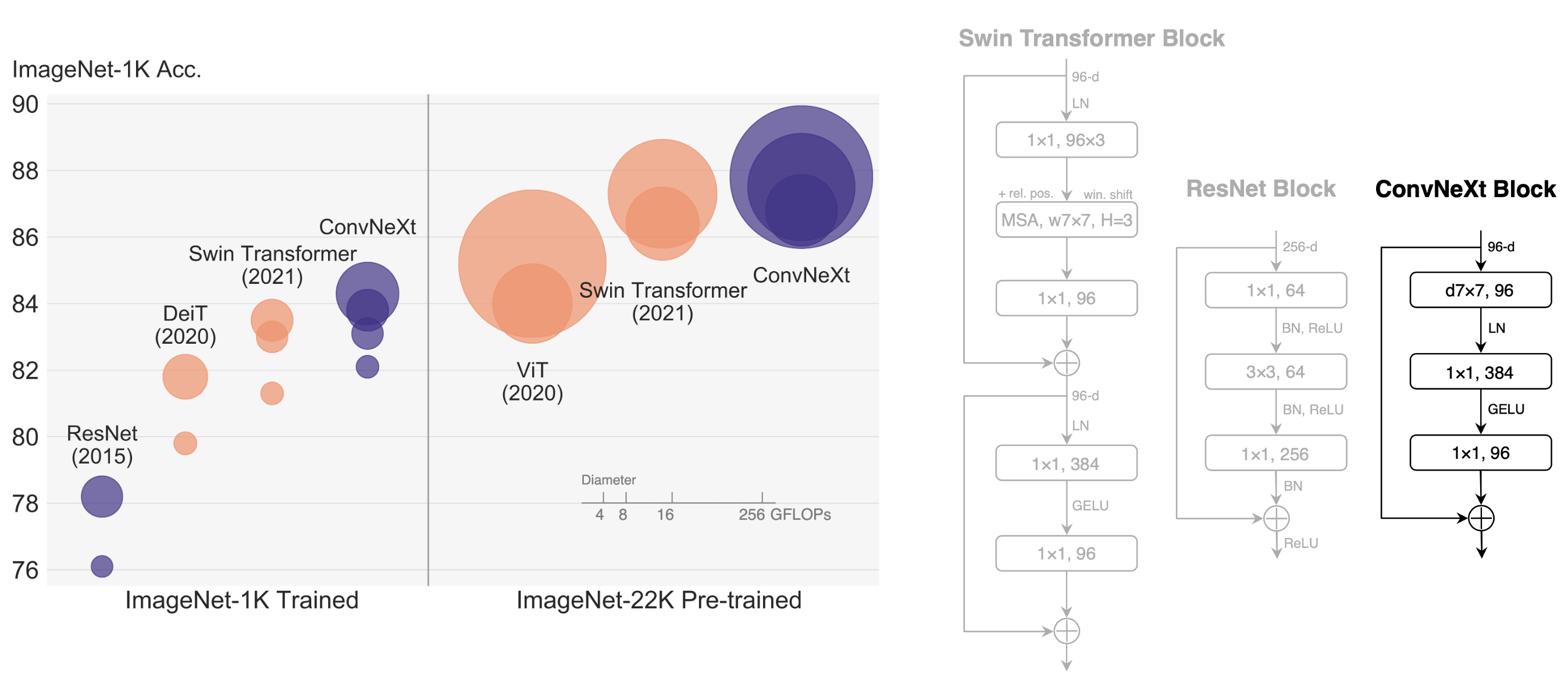

+The "Roaring 20s" of visual recognition began with the introduction of Vision Transformers (ViTs), which quickly superseded ConvNets as the state-of-the-art image classification model. A vanilla ViT, on the other hand, faces difficulties when applied to general computer vision tasks such as object detection and semantic segmentation. It is the hierarchical Transformers (e.g., Swin Transformers) that reintroduced several ConvNet priors, making Transformers practically viable as a generic vision backbone and demonstrating remarkable performance on a wide variety of vision tasks. However, the effectiveness of such hybrid approaches is still largely credited to the intrinsic superiority of Transformers, rather than the inherent inductive biases of convolutions. In this work, we reexamine the design spaces and test the limits of what a pure ConvNet can achieve. We gradually "modernize" a standard ResNet toward the design of a vision Transformer, and discover several key components that contribute to the performance difference along the way. The outcome of this exploration is a family of pure ConvNet models dubbed ConvNeXt. Constructed entirely from standard ConvNet modules, ConvNeXts compete favorably with Transformers in terms of accuracy and scalability, achieving 87.8% ImageNet top-1 accuracy and outperforming Swin Transformers on COCO detection and ADE20K segmentation, while maintaining the simplicity and efficiency of standard ConvNets.

+

+

+

+

+

+

+

+