
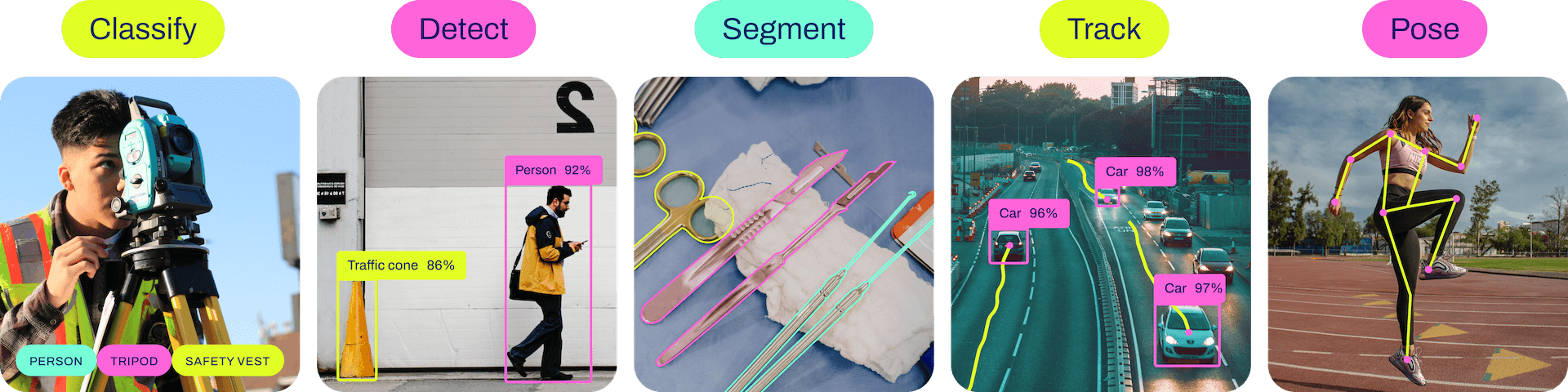

Ultralytics YOLO11 is a cutting-edge, state-of-the-art (SOTA) model that builds upon the success of previous YOLO versions and introduces new features and improvements to further boost performance and flexibility. YOLO11 is designed to be fast, accurate, and easy to use, making it an excellent choice for a wide range of object detection and tracking, instance segmentation, image classification and pose estimation tasks.

We hope that the resources here will help you get the most out of YOLO. Please browse the Ultralytics Docs for details, raise an issue on GitHub for support, questions, or discussions, or join the Ultralytics Discord, Reddit, and Forums.

To request an Enterprise License please complete the form at Ultralytics Licensing.

See below for a quickstart install and usage examples, and see our Docs for full documentation on training, validation, prediction and deployment.

Install

Pip install the ultralytics package including all requirements in a Python>=3.8 environment with PyTorch>=1.8.

pip install ultralytics

For alternative installation methods including Conda, Docker, and Git, please refer to the Quickstart Guide.
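Before installing, it can help to confirm that the environment meets the stated minimums (Python>=3.8 and PyTorch>=1.8). The following is a minimal sketch using only the standard library; the helper names `parse_version`, `meets_minimum`, and `check_environment` are hypothetical and not part of the ultralytics package.

```python
import re
import sys
from importlib import metadata


def parse_version(text):
    """Turn a version string such as '2.3.1+cu121' into a tuple of ints."""
    numbers = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break  # stop at the first non-numeric component
        numbers.append(int(match.group()))
    return tuple(numbers)


def meets_minimum(installed, minimum):
    """Compare versions numerically, so that 1.10 counts as newer than 1.8."""
    return parse_version(installed) >= parse_version(minimum)


def check_environment():
    """Report whether Python and PyTorch satisfy the minimums from this README."""
    report = {"python": sys.version_info[:2] >= (3, 8)}
    try:
        report["torch"] = meets_minimum(metadata.version("torch"), "1.8")
    except metadata.PackageNotFoundError:
        report["torch"] = False  # PyTorch is not installed yet
    return report


if __name__ == "__main__":
    print(check_environment())
```

A `False` entry for `torch` only means PyTorch was not found or is older than 1.8; `pip install ultralytics` is described above as installing all requirements.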

Usage

YOLO may be used directly in the Command Line Interface (CLI) with a yolo command:

yolo predict model=yolo11n.pt source='https://ultralytics.com/images/bus.jpg'

`yolo` can be used for a variety of tasks and modes and accepts additional arguments, e.g. `imgsz=640`. See the YOLO CLI Docs for examples.
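When the CLI is driven from a script, the `key=value` argument pattern shown above can be assembled programmatically. This is a sketch under assumptions: `build_yolo_command` is a hypothetical helper, and it follows the word order used in this README's commands (mode first, then an optional task, then arguments).

```python
import shlex


def build_yolo_command(mode, task=None, **args):
    """Assemble a yolo CLI invocation as an argument list.

    mode: e.g. "predict" or "val"
    task: optional, e.g. "detect" or "segment"
    args: extra key=value arguments such as model, source, imgsz
    """
    parts = ["yolo", mode]
    if task is not None:
        parts.append(task)
    parts.extend(f"{key}={value}" for key, value in args.items())
    return parts


if __name__ == "__main__":
    command = build_yolo_command(
        "predict",
        model="yolo11n.pt",
        source="https://ultralytics.com/images/bus.jpg",
        imgsz=640,
    )
    # Print a shell-safe string; pass the list itself to subprocess.run to execute.
    print(shlex.join(command))
```

Returning a list rather than a single string avoids shell quoting problems when the command is handed to `subprocess.run`.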

YOLO may also be used directly in a Python environment, and accepts the same arguments as in the CLI example above:

from ultralytics import YOLO

# Load a model
model = YOLO("yolo11n.pt")

# Train the model
train_results = model.train(
    data="coco8.yaml",  # path to dataset YAML
    epochs=100,  # number of training epochs
    imgsz=640,  # training image size
    device="cpu",  # device to run on, e.g. device=0 or device=0,1,2,3 or device=cpu
)

# Evaluate model performance on the validation set
metrics = model.val()

# Perform object detection on an image
results = model("path/to/image.jpg")
results[0].show()

# Export the model to ONNX format
path = model.export(format="onnx")  # return path to exported model

See YOLO Python Docs for more examples.
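Beyond `results[0].show()`, predictions can also be inspected programmatically. The sketch below counts detections per class name from a single result. It assumes a detection model, whose result objects carry `boxes.cls`, `boxes.conf`, and a `names` mapping; `count_detections` and the `min_conf` threshold of 0.25 are illustrative choices, not library API.

```python
from collections import Counter


def count_detections(result, min_conf=0.25):
    """Count detected objects per class name in one prediction result.

    result: one element of the list returned by model(...), assumed to expose
            boxes.cls, boxes.conf (tensor-like, with .tolist()) and names (id -> label).
    min_conf: hypothetical confidence cutoff applied on top of the model's own filtering.
    """
    class_ids = result.boxes.cls.tolist()
    confidences = result.boxes.conf.tolist()
    counts = Counter()
    for class_id, confidence in zip(class_ids, confidences):
        if confidence >= min_conf:
            counts[result.names[int(class_id)]] += 1
    return dict(counts)
```

With the model from the example above, `count_detections(results[0])` would return a dictionary mapping class names to the number of boxes found for each.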

YOLO11 Detect, Segment and Pose models pretrained on the COCO dataset are available here, as well as YOLO11 Classify models pretrained on the ImageNet dataset. Track mode is available for all Detect, Segment and Pose models.

All models download automatically from the latest Ultralytics release on first use.
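The pretrained weights follow a regular naming pattern: a size letter (n, s, m, l, x) plus a task suffix, matching the model names in the tables in this README (for example YOLO11n-seg). A small sketch of that pattern, where `weight_name` is a hypothetical helper and not part of the package:

```python
# Task suffixes as they appear in the model names listed in this README.
TASK_SUFFIXES = {
    "detect": "",
    "segment": "-seg",
    "classify": "-cls",
    "pose": "-pose",
    "obb": "-obb",
}
SIZES = ("n", "s", "m", "l", "x")


def weight_name(size, task="detect"):
    """Return the weight filename for a YOLO11 model size and task."""
    if size not in SIZES:
        raise ValueError(f"unknown size {size!r}, expected one of {SIZES}")
    if task not in TASK_SUFFIXES:
        raise ValueError(f"unknown task {task!r}, expected one of {tuple(TASK_SUFFIXES)}")
    return f"yolo11{size}{TASK_SUFFIXES[task]}.pt"


if __name__ == "__main__":
    for task in TASK_SUFFIXES:
        print(task, weight_name("n", task))
```

Passing such a filename to `YOLO(...)` is what triggers the automatic download on first use.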

Detection (COCO)

See Detection Docs for usage examples with these models trained on COCO, which include 80 pre-trained classes.

| Model | size (pixels) | mAPval 50-95 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|
| YOLO11n | 640 | 39.5 | 56.1 ± 0.8 | 1.5 ± 0.0 | 2.6 | 6.5 |
| YOLO11s | 640 | 47.0 | 90.0 ± 1.2 | 2.5 ± 0.0 | 9.4 | 21.5 |
| YOLO11m | 640 | 51.5 | 183.2 ± 2.0 | 4.7 ± 0.1 | 20.1 | 68.0 |
| YOLO11l | 640 | 53.4 | 238.6 ± 1.4 | 6.2 ± 0.1 | 25.3 | 86.9 |
| YOLO11x | 640 | 54.7 | 462.8 ± 6.7 | 11.3 ± 0.2 | 56.9 | 194.9 |

- mAPval values are for single-model single-scale on COCO val2017 dataset. Reproduce by `yolo val detect data=coco.yaml device=0`
- Speed averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val detect data=coco.yaml batch=1 device=0|cpu`
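The speed columns report per-image latency in milliseconds at batch size 1, so they convert directly into an approximate throughput. The sketch below parses the `mean ± spread` cells and derives images per second; the helper names are hypothetical, and the sample values are copied from the detection table above.

```python
def parse_latency(cell):
    """Split a table cell like '56.1 ± 0.8' into (mean_ms, spread_ms)."""
    mean, _, spread = cell.partition("±")
    return float(mean), float(spread) if spread.strip() else 0.0


def images_per_second(latency_ms):
    """Convert per-image latency in milliseconds to throughput at batch size 1."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


# (CPU ONNX, T4 TensorRT10) latency cells from the detection table.
DETECTION_SPEED = {
    "YOLO11n": ("56.1 ± 0.8", "1.5 ± 0.0"),
    "YOLO11x": ("462.8 ± 6.7", "11.3 ± 0.2"),
}

if __name__ == "__main__":
    for model, (cpu_cell, gpu_cell) in DETECTION_SPEED.items():
        cpu_ms, _ = parse_latency(cpu_cell)
        gpu_ms, _ = parse_latency(gpu_cell)
        print(
            f"{model}: CPU {images_per_second(cpu_ms):.1f} img/s, "
            f"T4 {images_per_second(gpu_ms):.1f} img/s"
        )
```

These figures cover model inference as benchmarked; end-to-end pipelines that include image loading and postprocessing will generally be slower.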

Segmentation (COCO)

See Segmentation Docs for usage examples with these models trained on COCO-Seg, which include 80 pre-trained classes.

| Model | size (pixels) | mAPbox 50-95 | mAPmask 50-95 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO11n-seg | 640 | 38.9 | 32.0 | 65.9 ± 1.1 | 1.8 ± 0.0 | 2.9 | 10.4 |
| YOLO11s-seg | 640 | 46.6 | 37.8 | 117.6 ± 4.9 | 2.9 ± 0.0 | 10.1 | 35.5 |
| YOLO11m-seg | 640 | 51.5 | 41.5 | 281.6 ± 1.2 | 6.3 ± 0.1 | 22.4 | 123.3 |
| YOLO11l-seg | 640 | 53.4 | 42.9 | 344.2 ± 3.2 | 7.8 ± 0.2 | 27.6 | 142.2 |
| YOLO11x-seg | 640 | 54.7 | 43.8 | 664.5 ± 3.2 | 15.8 ± 0.7 | 62.1 | 319.0 |

- mAPval values are for single-model single-scale on COCO val2017 dataset. Reproduce by `yolo val segment data=coco-seg.yaml device=0`
- Speed averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val segment data=coco-seg.yaml batch=1 device=0|cpu`

Classification (ImageNet)

See Classification Docs for usage examples with these models trained on ImageNet, which include 1000 pretrained classes.

| Model | size (pixels) | acc top1 | acc top5 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) at 640 |
|---|---|---|---|---|---|---|---|
| YOLO11n-cls | 224 | 70.0 | 89.4 | 5.0 ± 0.3 | 1.1 ± 0.0 | 1.6 | 3.3 |
| YOLO11s-cls | 224 | 75.4 | 92.7 | 7.9 ± 0.2 | 1.3 ± 0.0 | 5.5 | 12.1 |
| YOLO11m-cls | 224 | 77.3 | 93.9 | 17.2 ± 0.4 | 2.0 ± 0.0 | 10.4 | 39.3 |
| YOLO11l-cls | 224 | 78.3 | 94.3 | 23.2 ± 0.3 | 2.8 ± 0.0 | 12.9 | 49.4 |
| YOLO11x-cls | 224 | 79.5 | 94.9 | 41.4 ± 0.9 | 3.8 ± 0.0 | 28.4 | 110.4 |

- acc values are model accuracies on the ImageNet dataset validation set. Reproduce by `yolo val classify data=path/to/ImageNet device=0`
- Speed averaged over ImageNet val images using an Amazon EC2 P4d instance. Reproduce by `yolo val classify data=path/to/ImageNet batch=1 device=0|cpu`
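For readers unfamiliar with the top1 and top5 columns: a prediction counts as a top-k hit when the true label is among the k highest-scoring classes. The following is a generic, pure-Python sketch of that definition, not the evaluation code Ultralytics uses; `top_k_accuracy` and the toy scores are illustrative.

```python
def top_k_accuracy(scores, labels, k=1):
    """Fraction of samples whose true label is among the k highest-scoring classes.

    scores: list of per-class score lists, one per sample
    labels: list of true class indices, one per sample
    """
    if not labels or len(scores) != len(labels):
        raise ValueError("scores and labels must be non-empty and the same length")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices sorted by descending score, truncated to the top k.
        top = sorted(range(len(row)), key=row.__getitem__, reverse=True)[:k]
        hits += label in top
    return hits / len(labels)


if __name__ == "__main__":
    toy_scores = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]
    toy_labels = [1, 1, 0]
    print("top1:", top_k_accuracy(toy_scores, toy_labels, k=1))
    print("top2:", top_k_accuracy(toy_scores, toy_labels, k=2))
```

Top-5 accuracy is never lower than top-1 accuracy on the same predictions, which is consistent with every row of the table above.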

Pose (COCO)

See Pose Docs for usage examples with these models trained on COCO-Pose, which include 1 pre-trained class, person.

| Model | size (pixels) | mAPpose 50-95 | mAPpose 50 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLO11n-pose | 640 | 50.0 | 81.0 | 52.4 ± 0.5 | 1.7 ± 0.0 | 2.9 | 7.6 |
| YOLO11s-pose | 640 | 58.9 | 86.3 | 90.5 ± 0.6 | 2.6 ± 0.0 | 9.9 | 23.2 |
| YOLO11m-pose | 640 | 64.9 | 89.4 | 187.3 ± 0.8 | 4.9 ± 0.1 | 20.9 | 71.7 |
| YOLO11l-pose | 640 | 66.1 | 89.9 | 247.7 ± 1.1 | 6.4 ± 0.1 | 26.2 | 90.7 |
| YOLO11x-pose | 640 | 69.5 | 91.1 | 488.0 ± 13.9 | 12.1 ± 0.2 | 58.8 | 203.3 |

- mAPval values are for single-model single-scale on COCO Keypoints val2017 dataset. Reproduce by `yolo val pose data=coco-pose.yaml device=0`
- Speed averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val pose data=coco-pose.yaml batch=1 device=0|cpu`

OBB (DOTAv1)

See OBB Docs for usage examples with these models trained on DOTAv1, which include 15 pre-trained classes.

| Model | size (pixels) | mAPtest 50 | Speed CPU ONNX (ms) | Speed T4 TensorRT10 (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|
| YOLO11n-obb | 1024 | 78.4 | 117.6 ± 0.8 | 4.4 ± 0.0 | 2.7 | 17.2 |
| YOLO11s-obb | 1024 | 79.5 | 219.4 ± 4.0 | 5.1 ± 0.0 | 9.7 | 57.5 |
| YOLO11m-obb | 1024 | 80.9 | 562.8 ± 2.9 | 10.1 ± 0.4 | 20.9 | 183.5 |
| YOLO11l-obb | 1024 | 81.0 | 712.5 ± 5.0 | 13.5 ± 0.6 | 26.2 | 232.0 |
| YOLO11x-obb | 1024 | 81.3 | 1408.6 ± 7.7 | 28.6 ± 1.0 | 58.8 | 520.2 |

- mAPtest values are for single-model multiscale on DOTAv1 dataset. Reproduce by `yolo val obb data=DOTAv1.yaml device=0 split=test` and submit merged results to DOTA evaluation.
- Speed averaged over DOTAv1 val images using an Amazon EC2 P4d instance. Reproduce by `yolo val obb data=DOTAv1.yaml batch=1 device=0|cpu`

Our key integrations with leading AI platforms extend the functionality of Ultralytics' offerings, enhancing tasks like dataset labeling, training, visualization, and model management. Discover how Ultralytics, in collaboration with Roboflow, ClearML, Comet, Neural Magic and OpenVINO, can optimize your AI workflow.

| Roboflow | ClearML ⭐ NEW | Comet ⭐ NEW | Neural Magic ⭐ NEW |
|---|---|---|---|
| Label and export your custom datasets directly to YOLO11 for training with Roboflow | Automatically track, visualize and even remotely train YOLO11 using ClearML (open-source!) | Free forever, Comet lets you save YOLO11 models, resume training, and interactively visualize and debug predictions | Run YOLO11 inference up to 6x faster with Neural Magic DeepSparse |

Experience seamless AI with Ultralytics HUB ⭐, the all-in-one solution for data visualization, YOLO11 🚀 model training and deployment, without any coding. Transform images into actionable insights and bring your AI visions to life with ease using our cutting-edge platform and user-friendly Ultralytics App. Start your journey for Free now!

We love your input! Ultralytics YOLO would not be possible without help from our community. Please see our Contributing Guide to get started, and fill out our Survey to send us feedback on your experience. Thank you 🙏 to all our contributors!

Ultralytics offers two licensing options to accommodate diverse use cases:

- AGPL-3.0 License: This OSI-approved open-source license is ideal for students and enthusiasts, promoting open collaboration and knowledge sharing. See the LICENSE file for more details.

- Enterprise License: Designed for commercial use, this license permits seamless integration of Ultralytics software and AI models into commercial goods and services, bypassing the open-source requirements of AGPL-3.0. If your scenario involves embedding our solutions into a commercial offering, reach out through Ultralytics Licensing.

For Ultralytics bug reports and feature requests, please visit GitHub Issues. Join the Ultralytics Discord, Reddit, or Forums to ask questions, share projects, take part in learning discussions, or get help with all things Ultralytics!