A fork of so-vits-svc with realtime support and greatly improved interface. Based on branch 4.0 (v1) (or 4.1) and the models are compatible. 4.1 models are not supported. Other models are also not supported.

- Within a year, the technology has evolved enormously and there are many better alternatives

- Was hoping to create a more Modular, easy-to-install repository, but didn't have the skills, time, money to do so

- PySimpleGUI is no longer LGPL

- Using Typer is getting more popular than directly using Click

Always beware of the very few influencers who are quite overly surprised about any new project/technology. You need to take every social networking post with semi-doubt.

The voice changer boom that occurred in 2023 has come to an end, and many developers, not just those in this repository, have been not very active for a while.

There are too many alternatives to list here but:

- RVC family: IAHispano/Applio (MIT), fumiama's RVC (AGPL) and original RVC (MIT)

- VCClient (MIT etc.) is quite actively maintained and offers web-based GUI for real-time conversion.

- fish-diffusion tried to be quite modular but not quite actively maintained.

- yxlllc/DDSP-SVC - new releases are issued occasionally. yxlllc/ReFlow-VAE-SVC

- coqui-ai/TTS was for TTS but was partially modular. However, it is not maintained anymore, unfortunately.

Elsewhere, several start-ups have improved and marketed voice changers (probably for profit).

Updates to this repository have been limited to maintenance since Spring 2023.

It is difficult to narrow the list of alternatives here, but please consider trying other projects if you are looking for a voice changer with even better performance (especially in terms of latency other than quality).>However, this project may be ideal for those who want to try out voice conversion for the moment (because it is easy to install).

- Realtime voice conversion (enhanced in v1.1.0)

- Partially integrates

QuickVC - Fixed misuse of

ContentVecin the original repository.1 - More accurate pitch estimation using

CREPE. - GUI and unified CLI available

- ~2x faster training

- Ready to use just by installing with

pip. - Automatically download pretrained models. No need to install

fairseq. - Code completely formatted with black, isort, autoflake etc.

This BAT file will automatically perform the steps described below.

Windows (development version required due to pypa/pipx#940):

py -3 -m pip install --user git+https://github.com/pypa/pipx.git

py -3 -m pipx ensurepathLinux/MacOS:

python -m pip install --user pipx

python -m pipx ensurepathpipx install so-vits-svc-fork --python=3.11

pipx inject so-vits-svc-fork torch torchaudio --pip-args="--upgrade" --index-url=https://download.pytorch.org/whl/cu121 # https://download.pytorch.org/whl/nightly/cu121Creating a virtual environment

Windows:

py -3.11 -m venv venv

venv\Scripts\activateLinux/MacOS:

python3.11 -m venv venv

source venv/bin/activateAnaconda:

conda create -n so-vits-svc-fork python=3.11 pip

conda activate so-vits-svc-forkInstalling without creating a virtual environment may cause a PermissionError if Python is installed in Program Files, etc.

Install this via pip (or your favourite package manager that uses pip):

python -m pip install -U pip setuptools wheel

pip install -U torch torchaudio --index-url https://download.pytorch.org/whl/cu121 # https://download.pytorch.org/whl/nightly/cu121

pip install -U so-vits-svc-forkNotes

- If no GPU is available or using MacOS, simply remove

pip install -U torch torchaudio --index-url https://download.pytorch.org/whl/cu121. MPS is probably supported. - If you are using an AMD GPU on Linux, replace

--index-url https://download.pytorch.org/whl/cu121with--index-url https://download.pytorch.org/whl/nightly/rocm5.7. AMD GPUs are not supported on Windows (#120).

Please update this package regularly to get the latest features and bug fixes.

pip install -U so-vits-svc-fork

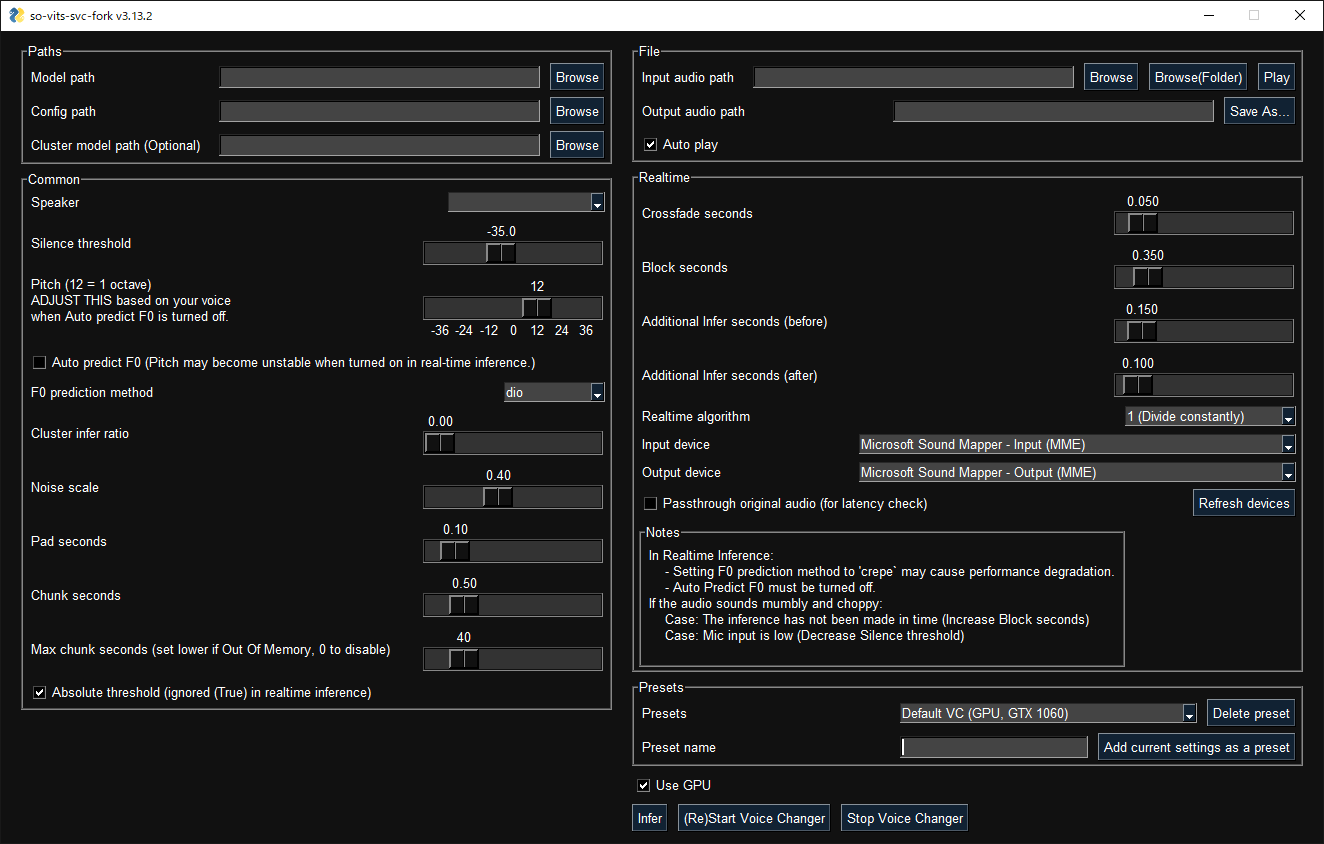

# pipx upgrade so-vits-svc-forkGUI launches with the following command:

svcg- Realtime (from microphone)

svc vc- File

svc infer source.wavPretrained models are available on Hugging Face or CIVITAI.

- If using WSL, please note that WSL requires additional setup to handle audio and the GUI will not work without finding an audio device.

- In real-time inference, if there is noise on the inputs, the HuBERT model will react to those as well. Consider using realtime noise reduction applications such as RTX Voice in this case.

- Models other than for 4.0v1 or this repository are not supported.

- GPU inference requires at least 4 GB of VRAM. If it does not work, try CPU inference as it is fast enough. 2

- If your dataset has BGM, please remove the BGM using software such as Ultimate Vocal Remover.

3_HP-Vocal-UVR.pthorUVR-MDX-NET Mainis recommended. 3 - If your dataset is a long audio file with a single speaker, use

svc pre-splitto split the dataset into multiple files (usinglibrosa). - If your dataset is a long audio file with multiple speakers, use

svc pre-sdto split the dataset into multiple files (usingpyannote.audio). Further manual classification may be necessary due to accuracy issues. If speakers speak with a variety of speech styles, set --min-speakers larger than the actual number of speakers. Due to unresolved dependencies, please installpyannote.audiomanually:pip install pyannote-audio. - To manually classify audio files,

svc pre-classifyis available. Up and down arrow keys can be used to change the playback speed.

If you do not have access to a GPU with more than 10 GB of VRAM, the free plan of Google Colab is recommended for light users and the Pro/Growth plan of Paperspace is recommended for heavy users. Conversely, if you have access to a high-end GPU, the use of cloud services is not recommended.

Place your dataset like dataset_raw/{speaker_id}/**/{wav_file}.{any_format} (subfolders and non-ASCII filenames are acceptable) and run:

svc pre-resample

svc pre-config

svc pre-hubert

svc train -t- Dataset audio duration per file should be <~ 10s.

- Need at least 4GB of VRAM. 5

- It is recommended to increase the

batch_sizeas much as possible inconfig.jsonbefore thetraincommand to match the VRAM capacity. Settingbatch_sizetoauto-{init_batch_size}-{max_n_trials}(or simplyauto) will automatically increasebatch_sizeuntil OOM error occurs, but may not be useful in some cases. - To use

CREPE, replacesvc pre-hubertwithsvc pre-hubert -fm crepe. - To use

ContentVeccorrectly, replacesvc pre-configwith-t so-vits-svc-4.0v1. Training may take slightly longer because some weights are reset due to reusing legacy initial generator weights. - To use

MS-iSTFT Decoder, replacesvc pre-configwithsvc pre-config -t quickvc. - Silence removal and volume normalization are automatically performed (as in the upstream repo) and are not required.

- If you have trained on a large, copyright-free dataset, consider releasing it as an initial model.

- For further details (e.g. parameters, etc.), you can see the Wiki or Discussions.

For more details, run svc -h or svc <subcommand> -h.

> svc -h

Usage: svc [OPTIONS] COMMAND [ARGS]...

so-vits-svc allows any folder structure for training data.

However, the following folder structure is recommended.

When training: dataset_raw/{speaker_name}/**/{wav_name}.{any_format}

When inference: configs/44k/config.json, logs/44k/G_XXXX.pth

If the folder structure is followed, you DO NOT NEED TO SPECIFY model path, config path, etc.

(The latest model will be automatically loaded.)

To train a model, run pre-resample, pre-config, pre-hubert, train.

To infer a model, run infer.

Options:

-h, --help Show this message and exit.

Commands:

clean Clean up files, only useful if you are using the default file structure

infer Inference

onnx Export model to onnx (currently not working)

pre-classify Classify multiple audio files into multiple files

pre-config Preprocessing part 2: config

pre-hubert Preprocessing part 3: hubert If the HuBERT model is not found, it will be...

pre-resample Preprocessing part 1: resample

pre-sd Speech diarization using pyannote.audio

pre-split Split audio files into multiple files

train Train model If D_0.pth or G_0.pth not found, automatically download from hub.

train-cluster Train k-means clustering

vc Realtime inference from microphoneThanks goes to these wonderful people (emoji key):

This project follows the all-contributors specification. Contributions of any kind welcome!

Footnotes

-

If you register a referral code and then add a payment method, you may save about $5 on your first month's monthly billing. Note that both referral rewards are Paperspace credits and not cash. It was a tough decision but inserted because debugging and training the initial model requires a large amount of computing power and the developer is a student. ↩

-9VJN74I-blue?style=flat-square&logo=paperspace)